China’s DeepSeek has released two powerful AI models—R1 and V3.1—that match or exceed the performance of leading Western models while operating at a fraction of the cost. These open-source releases are reshaping the global AI landscape and offering startups an accessible alternative to expensive proprietary systems.

DeepSeek R1 and V3.1: How China’s Open Models Are Challenging Global Leaders represents a significant shift in artificial intelligence development. Released in early 2025, these models have demonstrated that cutting-edge AI capabilities no longer require massive budgets or exclusive access to proprietary systems. Instead, developers worldwide can now leverage reasoning-intensive models that compete directly with ChatGPT, Claude, and other established players.

- Key Takeaways

- What Makes DeepSeek R1 and V3.1 Different from Other AI Models?

- How Does DeepSeek R1 Compare to Claude and Qwen3 on Reasoning Benchmarks?

- What Are the Cost Advantages of Using DeepSeek for Startup AI Applications?

- How Can Developers Access and Deploy DeepSeek R1 and V3.1?

- What Are the Technical Innovations Behind DeepSeek's Cost Efficiency?

- How Does DeepSeek's Open-Source Approach Challenge Proprietary AI Leaders?

- What Are the Limitations and Security Considerations for DeepSeek Models?

- What Does the Future Hold for DeepSeek and Open-Source AI Competition?

- FAQ

- Conclusion

- References

Key Takeaways

- DeepSeek R1 matched or surpassed advanced foundation models on reasoning tasks at launch, particularly excelling in coding and mathematics benchmarks[1]

- DeepSeek V3.1 features a 685-billion parameter architecture that activates only 37 billion parameters per token, enabling cost-efficient inference[2]

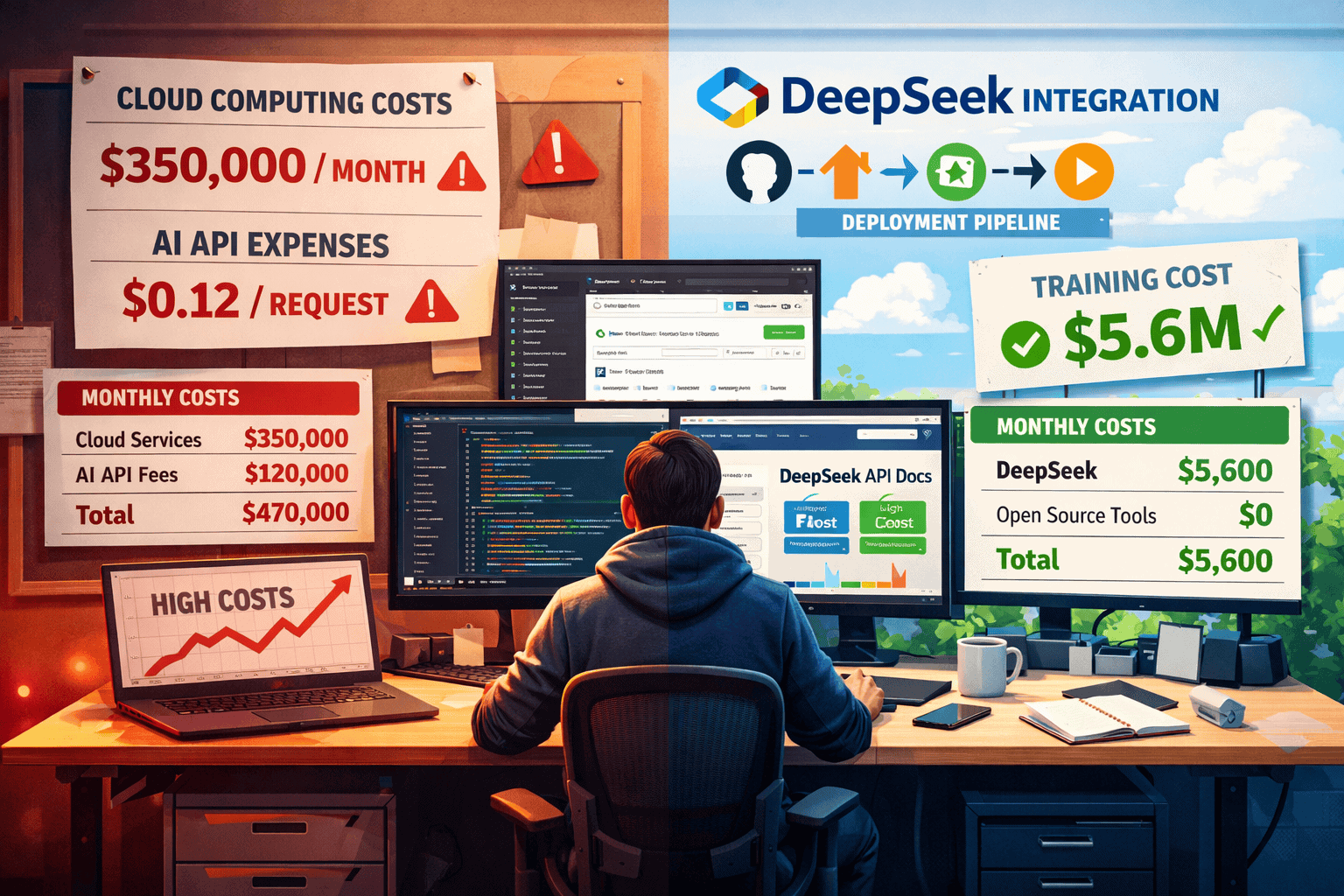

- Training costs were dramatically lower than Western competitors, with DeepSeek-V2 requiring only $5.6 million compared to hundreds of millions for equivalent models[2]

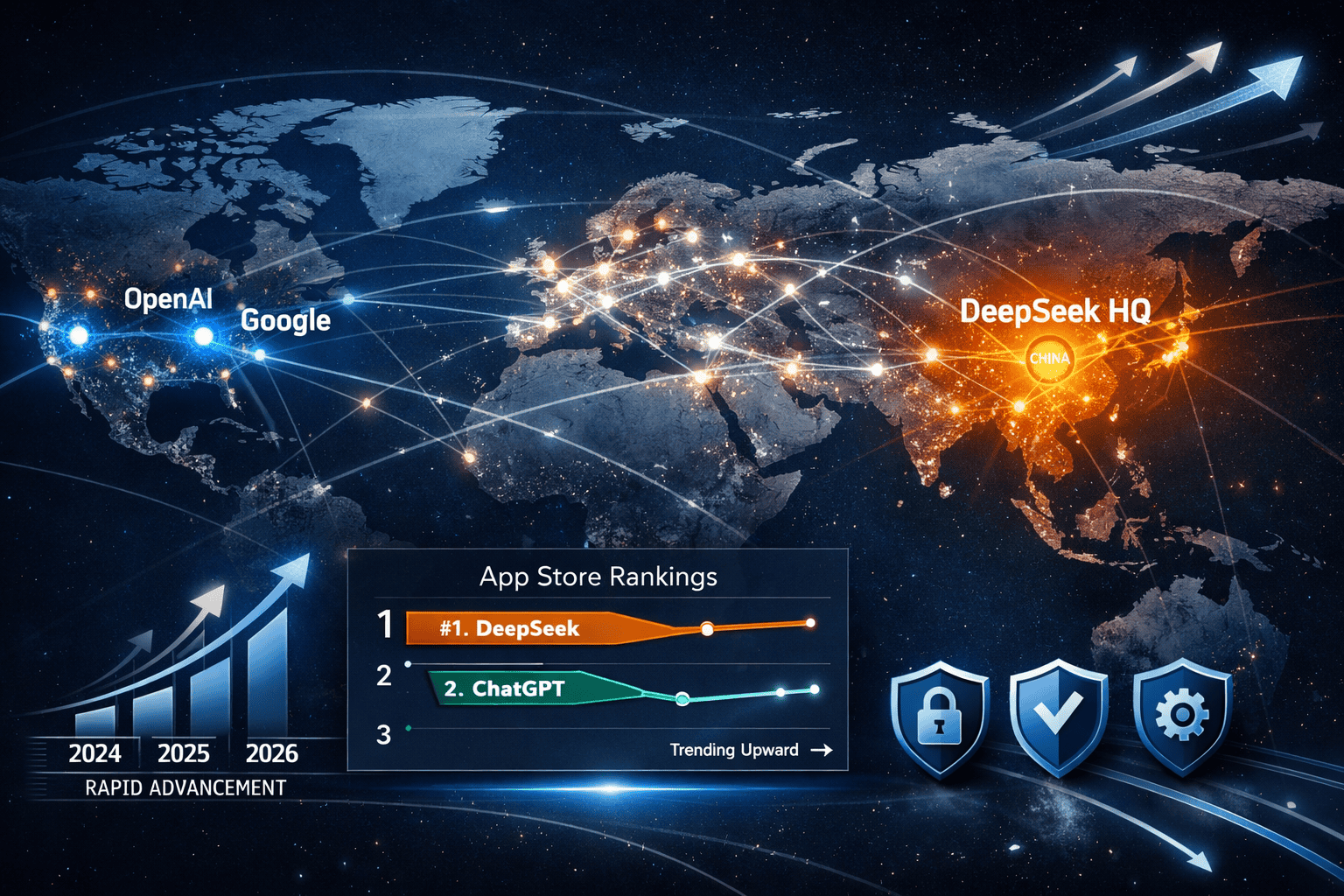

- The DeepSeek chatbot reached #1 on Apple App Store after launch, dethroning ChatGPT and demonstrating mainstream appeal[1]

- Both models are open-source, giving startups and developers accessible alternatives to expensive proprietary APIs

What Makes DeepSeek R1 and V3.1 Different from Other AI Models?

DeepSeek R1 and V3.1 stand out because they deliver competitive performance at dramatically reduced costs through innovative architecture and training methods. Unlike proprietary models that require expensive API subscriptions, these open-source alternatives give developers full control and transparency.

R1’s reasoning advantage: This model employed reinforcement learning and supervised fine-tuning with a distinctive “cold start” phase using carefully crafted Chain-of-Thought (CoT) examples, followed by iterative refinement phases with reward systems[1]. The result is exceptional performance on reasoning-intensive tasks involving well-defined problems with clear solutions.

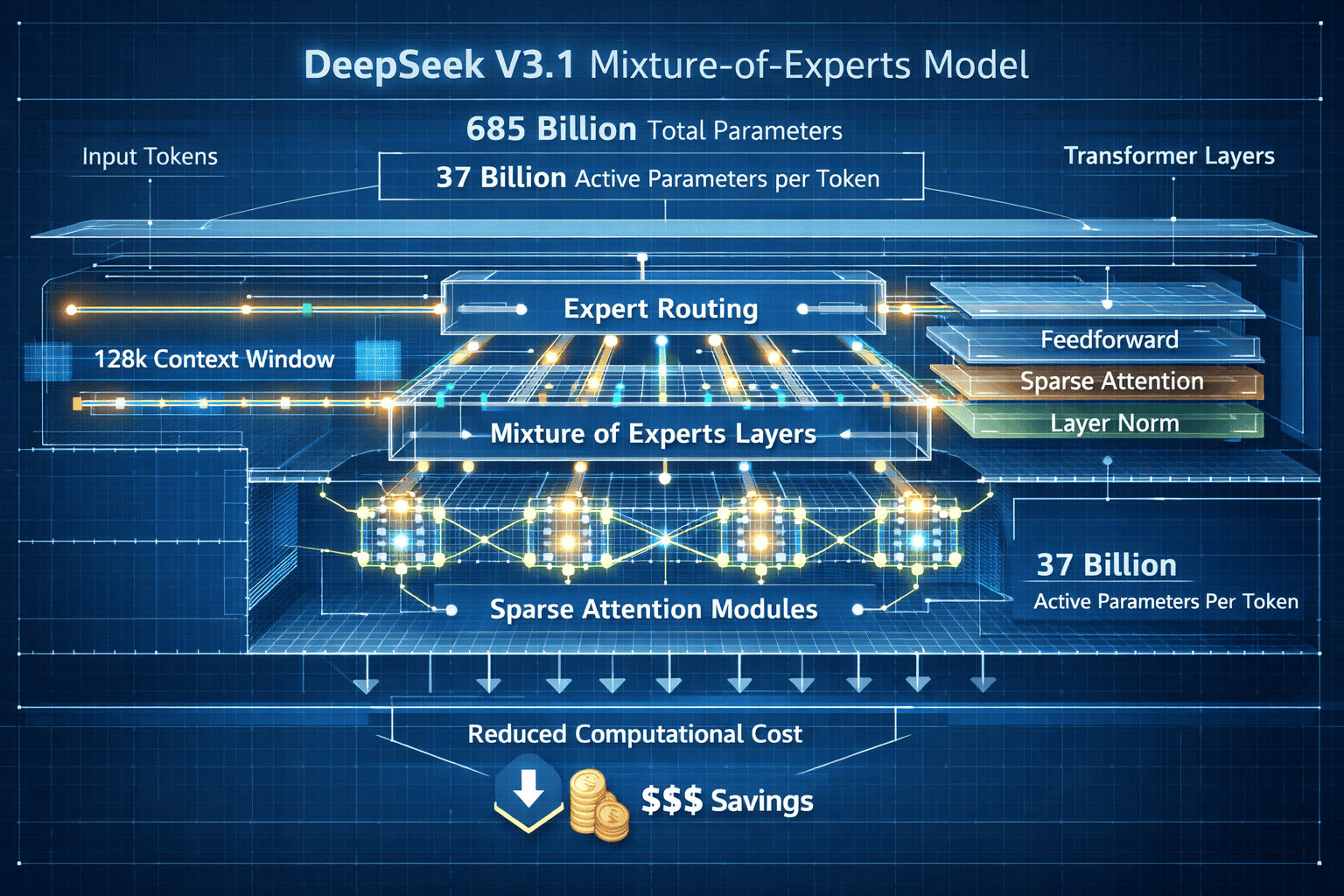

V3.1’s efficiency breakthrough: The model uses a Mixture-of-Experts (MoE) design with integrated reasoning, non-reasoning, tool usage, and native coding capabilities in a single hybrid model[2]. This architecture allows the system to maintain massive capacity (685 billion parameters) while activating only what’s needed for each specific task (37 billion parameters per token).

Key architectural features include:

- 128,000-token context window matching other leading open models like OpenAI’s gpt-oss and Google’s Gemma 3[2]

- Sparse Attention technology in the V3.1-Terminus experimental release (September 30, 2025) to reduce computational costs[3]

- Hybrid architecture combining multiple capabilities in one unified model rather than requiring separate specialized systems

- Open-source licensing allowing developers to inspect, modify, and deploy without vendor lock-in

The cost differential is substantial. While Western labs spend hundreds of millions training comparable models, DeepSeek-V2 required only $5.6 million for training[2]. This efficiency extends to inference costs, making these models particularly attractive for startups building AI applications on limited budgets.

Choose DeepSeek if: You need strong reasoning capabilities for coding or mathematics, want to avoid vendor lock-in, or require cost-efficient deployment at scale. Avoid if: You need the absolute cutting edge in conversational AI or require enterprise support contracts.

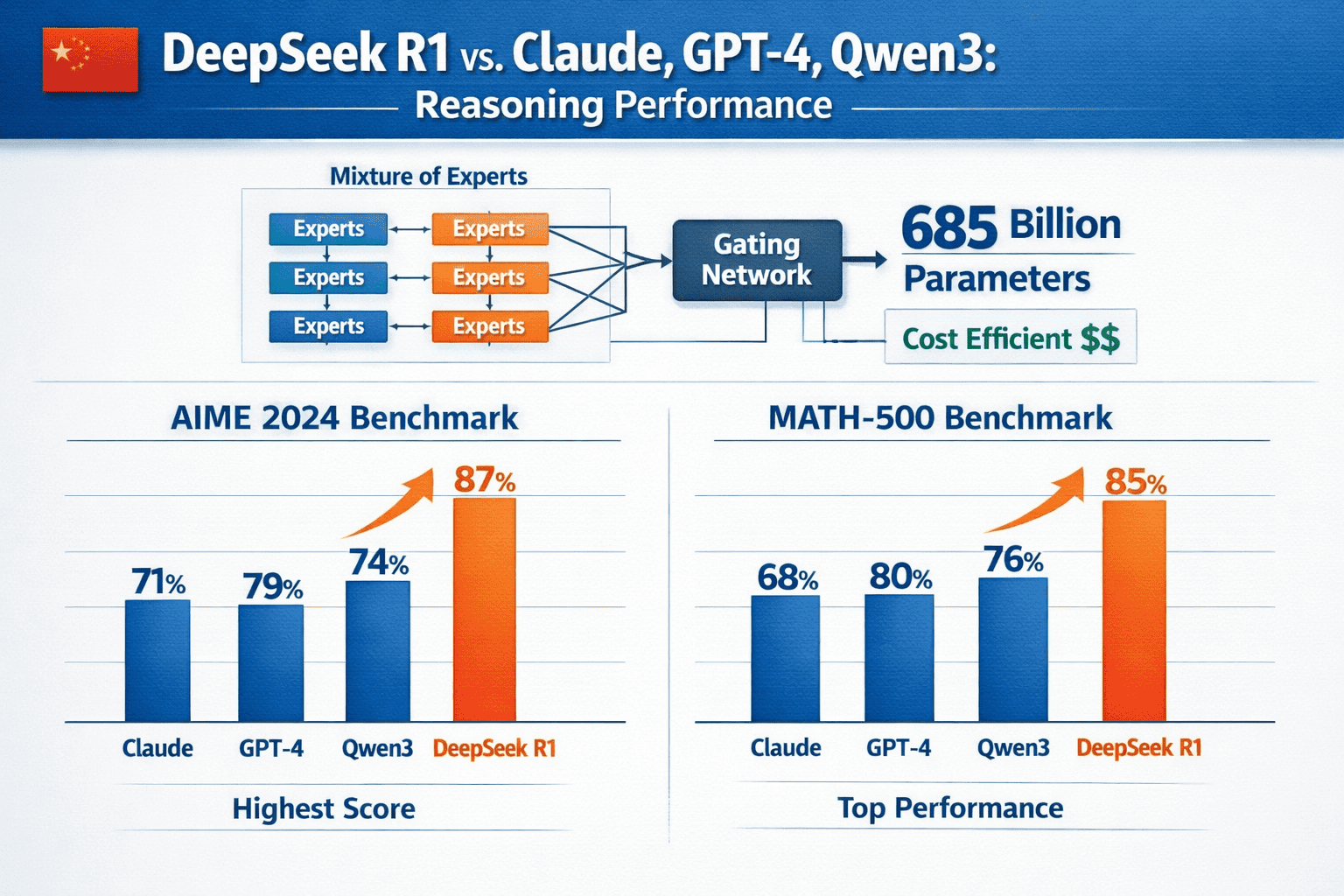

How Does DeepSeek R1 Compare to Claude and Qwen3 on Reasoning Benchmarks?

DeepSeek R1 demonstrates competitive or superior performance on mathematical and coding benchmarks compared to Claude and Qwen3, particularly excelling in structured problem-solving tasks. At its January 2025 release, R1 matched or surpassed the reasoning capabilities of advanced foundation models at a fraction of operating costs[1].

Benchmark performance highlights:

- Coding tasks: R1 beat rivals on nearly all coding benchmarks tested[1]

- Mathematics: Exceptional performance on AIME and MATH-500 benchmark suites, showing strong reasoning abilities and mathematical skills[2]

- Logic problems: Early testers reported success on complex logic problems requiring multi-step reasoning[2]

- Overall intelligence metrics: V3.1-Terminus ranks below ChatGPT-5, Grok, and Claude but is tied with OpenAI’s gpt-oss-120b on intelligence metrics[3]

The comparison reveals an important pattern: DeepSeek models excel particularly at reasoning-intensive tasks involving well-defined problems with clear solutions[1]. This makes them ideal for:

- Software development and code generation

- Mathematical problem-solving

- Structured data analysis

- Scientific reasoning tasks

- Technical documentation generation

However, the models show different strengths than conversational AI systems like Claude. While Claude may offer more nuanced dialogue and creative writing capabilities, DeepSeek’s focus on reasoning and structured tasks gives it an edge in technical applications.

Common mistake: Assuming all AI models are interchangeable. DeepSeek R1 and V3.1 are optimized for reasoning and coding, not general conversation or creative writing. Match the model to your specific use case rather than expecting universal excellence.

For startups: The combination of strong reasoning performance and low operating costs makes DeepSeek particularly attractive for applications like automated code review, mathematical tutoring systems, data analysis tools, and technical support automation.

What Are the Cost Advantages of Using DeepSeek for Startup AI Applications?

DeepSeek offers startups dramatic cost savings—potentially 90% or more compared to proprietary API services—while maintaining competitive performance on reasoning-intensive tasks. The $5.6 million training cost for DeepSeek-V2 demonstrates efficiency that translates directly to lower inference costs[2].

Cost comparison breakdown:

| Cost Factor | Traditional Proprietary APIs | DeepSeek Models |

|---|---|---|

| Training cost | $100M-$500M+ | $5.6M (V2)[2] |

| API pricing model | Per-token usage fees | Self-hosted or free tier |

| Vendor lock-in | High (proprietary) | None (open-source) |

| Scaling costs | Linear increase | Reduced via MoE architecture |

| Customization | Limited or expensive | Full control |

Real-world cost advantages:

- Self-hosting option: Deploy on your own infrastructure to eliminate per-token API fees entirely

- Efficient inference: The MoE architecture activates only 37 billion of 685 billion parameters per token, reducing computational requirements[2]

- Free tier access: DeepSeek offers free access to V3.2 as of February 2026[5], allowing experimentation without upfront costs

- No vendor markup: Open-source licensing means no premium pricing for enterprise features

Budget planning for startups:

- Initial testing phase: Use free DeepSeek API to validate your use case (cost: $0)

- Small-scale deployment: Self-host on cloud infrastructure ($500-$2,000/month depending on usage)

- Medium-scale production: Optimized cloud deployment with load balancing ($2,000-$10,000/month)

- Large-scale operations: Dedicated infrastructure with custom optimizations (variable, but still significantly cheaper than proprietary alternatives)

Edge case: If your application requires the absolute latest conversational AI capabilities or you need guaranteed SLA support, proprietary services may still be worth the premium. But for reasoning-intensive applications like code generation, data analysis, or mathematical problem-solving, DeepSeek offers exceptional value.

The cost efficiency extends beyond direct API fees. Startups gain:

- Transparency: Full visibility into model behavior for debugging and optimization

- Customization: Ability to fine-tune models for specific domains without vendor approval

- Data privacy: Option to keep all data on-premises rather than sending to third-party APIs

- Negotiating leverage: Open-source alternatives provide bargaining power with proprietary vendors

For more information on how AI tools can enhance your business operations, visit our features page.

How Can Developers Access and Deploy DeepSeek R1 and V3.1?

Developers can access DeepSeek models through free API access, self-hosted deployment, or integration with popular AI frameworks. As of February 2026, DeepSeek offers free access to V3.2 as their latest model[5], making experimentation straightforward.

Access methods ranked by ease:

Free API access (easiest)

- Visit the official DeepSeek website[5]

- Create a free account

- Obtain API credentials

- Start making requests immediately

- Best for: Initial testing, prototyping, low-volume applications

Cloud-hosted deployment (moderate difficulty)

- Deploy on AWS, Google Cloud, or Azure using container services

- Use pre-built Docker images or deployment scripts

- Configure scaling and load balancing

- Best for: Production applications with moderate traffic

Self-hosted infrastructure (advanced)

- Download model weights from official repositories

- Set up GPU infrastructure (minimum 8x A100 or equivalent recommended)

- Configure inference optimization tools

- Best for: High-volume applications, data privacy requirements, maximum cost control

Step-by-step deployment for startups:

Step 1: Evaluate your use case and expected volume

- Prototype with free API first

- Measure token usage patterns

- Estimate monthly costs for different deployment options

Step 2: Choose your deployment strategy

- Use free API if monthly costs stay under $500

- Move to cloud-hosted if you need better performance or SLA

- Consider self-hosting only if monthly API costs would exceed $5,000

Step 3: Implement and optimize

- Start with standard configurations

- Monitor performance metrics (latency, throughput, accuracy)

- Optimize based on actual usage patterns

Step 4: Plan for scaling

- Set up monitoring and alerting

- Implement caching for common queries

- Consider hybrid approaches (API for spikes, self-hosted for baseline)

Integration with popular frameworks:

- Python: Native support through official SDK and popular libraries

- JavaScript/Node.js: REST API integration with standard HTTP clients

- LangChain: Compatible with LangChain framework for building AI applications

- HuggingFace: Model weights available through HuggingFace repositories

Common deployment mistakes:

- Over-provisioning resources: Start small and scale based on actual demand

- Ignoring context window limits: The 128,000-token limit is generous but not unlimited[2]

- Skipping optimization: Use batching, caching, and prompt engineering to reduce costs

- Neglecting monitoring: Track performance metrics from day one to catch issues early

Security consideration: While DeepSeek models offer powerful capabilities, security researchers have identified potential vulnerabilities in AI systems generally[4]. Implement proper input validation, output filtering, and access controls regardless of which model you choose.

For businesses looking to integrate AI solutions effectively, our pricing options provide flexible solutions for different scales.

What Are the Technical Innovations Behind DeepSeek’s Cost Efficiency?

DeepSeek achieves cost efficiency through three primary innovations: Mixture-of-Experts architecture, sparse attention mechanisms, and optimized training methods. These technical advances allow the models to maintain competitive performance while dramatically reducing computational requirements.

Mixture-of-Experts (MoE) architecture:

The V3.1 model contains 685 billion total parameters but activates only 37 billion parameters per token[2]. This selective activation means:

- Reduced memory bandwidth: Only a fraction of the model needs to be accessed for each inference

- Lower energy consumption: Fewer active parameters means less computational work

- Faster inference: Smaller active parameter sets process more quickly

- Maintained capability: The full 685-billion parameter capacity remains available when needed

How MoE works in practice:

- Input token arrives at the model

- Routing mechanism determines which “experts” (parameter subsets) are relevant

- Only selected experts activate for processing

- Results combine to produce the output

- Inactive experts consume minimal resources

Sparse Attention technology:

The V3.1-Terminus experimental release (September 30, 2025) incorporates sparse attention to reduce computational costs[3]. Traditional attention mechanisms in transformers examine every token relationship, creating quadratic computational complexity. Sparse attention:

- Focuses on relevant relationships: Not every token needs to attend to every other token

- Reduces memory requirements: Fewer attention calculations mean less memory usage

- Enables longer context windows: The 128,000-token context becomes computationally feasible[2]

- Maintains quality: Careful design ensures important relationships aren’t missed

Optimized training methods:

In January 2026, DeepSeek published a new AI training method for scaling large language models that analysts describe as a “breakthrough,” though technical details remain unreleased[6]. The earlier training approach for R1 included:

- Reinforcement learning with human feedback (RLHF): Iterative improvement based on quality assessments

- Supervised fine-tuning: Targeted training on specific task types

- “Cold start” phase: Carefully crafted Chain-of-Thought examples to establish baseline reasoning patterns[1]

- Reward system optimization: Efficient feedback mechanisms that reduce training iterations

Comparison: Traditional vs. DeepSeek approach

| Aspect | Traditional Models | DeepSeek Innovation |

|---|---|---|

| Parameter activation | All parameters active | Only 5.4% active (37B of 685B)[2] |

| Attention mechanism | Full quadratic attention | Sparse attention[3] |

| Training cost | $100M-$500M+ | $5.6M (V2)[2] |

| Inference efficiency | Standard | Optimized via MoE |

| Context handling | Memory-intensive | Efficient sparse processing |

Why this matters for developers:

These technical innovations translate directly to practical benefits. A startup running DeepSeek on cloud infrastructure might pay $2,000/month for usage that would cost $20,000/month with a traditional model of similar capability. The efficiency gains compound at scale.

Edge case: For extremely high-throughput applications (millions of requests per day), the MoE routing overhead can become significant. In these scenarios, careful optimization of the routing mechanism and potentially custom hardware acceleration may be necessary.

To learn more about how innovative technology solutions can benefit your organization, check out our about page.

How Does DeepSeek’s Open-Source Approach Challenge Proprietary AI Leaders?

DeepSeek’s open-source strategy disrupts the AI market by democratizing access to competitive models, forcing proprietary vendors to justify premium pricing, and accelerating innovation through community collaboration. The DeepSeek chatbot reaching #1 on Apple App Store after launch, dethroning ChatGPT[1], demonstrates mainstream appeal that challenges the dominance of closed systems.

Market disruption mechanisms:

- Price pressure: When open-source alternatives offer 90%+ cost savings, proprietary vendors must either reduce prices or clearly differentiate their value proposition

- Transparency advantage: Developers can inspect and verify model behavior, building trust that black-box systems cannot match

- Customization freedom: Organizations can fine-tune and adapt models without vendor permission or additional fees

- Ecosystem development: Open-source models enable third-party tools, optimizations, and integrations that proprietary systems restrict

Strategic implications for AI leaders:

OpenAI and ChatGPT:

- Must emphasize superior conversational capabilities and user experience

- Face pressure to reduce API pricing or offer more generous free tiers

- Need to accelerate innovation to maintain technical leadership

Google and Gemini:

- Can leverage integration with Google Cloud and services as differentiator

- Must justify premium pricing with enterprise features and support

- Face competition in developer mindshare from accessible alternatives

Anthropic and Claude:

- Constitutional AI and safety features become key differentiators

- Enterprise support and reliability guarantees justify higher costs

- Research leadership in AI safety provides unique positioning

The open-source advantage:

DeepSeek benefits from community contributions that proprietary labs cannot easily replicate:

- Rapid bug discovery: Thousands of developers testing and reporting issues

- Optimization techniques: Community-developed inference optimizations and deployment tools

- Use case expansion: Developers apply models to novel problems, discovering new capabilities

- Educational resources: Tutorials, guides, and best practices emerge organically

Competitive response patterns:

Proprietary AI leaders are responding through:

- Selective open-sourcing: Releasing older models as open-source (e.g., Meta’s Llama series)

- Hybrid licensing: Offering open weights with usage restrictions

- Enterprise differentiation: Emphasizing support, SLAs, and integration features

- Accelerated innovation: Increasing research investment to maintain technical edge

Global competition dynamics:

DeepSeek R1 and V3.1 represent China’s growing AI capabilities and challenge Western dominance in foundation models. This geopolitical dimension adds complexity:

- Technology sovereignty: Countries and organizations seek AI independence from foreign vendors

- Regulatory divergence: Different regions may favor local or open-source alternatives

- Talent competition: Open-source projects attract researchers who value transparency and academic freedom

- Investment patterns: Venture capital and government funding increasingly support open alternatives

Common misconception: Open-source models are always inferior to proprietary alternatives. DeepSeek demonstrates that well-designed open models can match or exceed proprietary systems on specific tasks, particularly reasoning-intensive applications[1].

For organizations evaluating AI solutions, the open-source challenge means more options and better pricing. But it also requires more technical sophistication to evaluate trade-offs and manage deployment complexity.

If you’re interested in exploring how different technology solutions compare, our contact page can connect you with experts who can help.

What Are the Limitations and Security Considerations for DeepSeek Models?

DeepSeek models face limitations in conversational AI quality compared to top proprietary systems, and security researchers have identified potential vulnerabilities that require careful mitigation. While V3.1-Terminus ranks competitively, it still falls below ChatGPT-5, Grok, and Claude on overall intelligence metrics[3].

Performance limitations:

- Conversational quality: DeepSeek excels at reasoning and coding but may produce less natural dialogue than Claude or ChatGPT in open-ended conversations

- Creative writing: Structured tasks outperform creative or artistic applications

- Multilingual capabilities: Primary optimization for English and Chinese; other languages may show reduced performance

- Domain-specific knowledge: General-purpose training may lack depth in specialized fields compared to fine-tuned proprietary models

Security vulnerabilities:

Security researchers have identified potential flaws in DeepSeek R1[4]. While specific technical details vary, common AI security concerns include:

- Prompt injection attacks: Malicious inputs that manipulate model behavior

- Data extraction: Attempts to retrieve training data or sensitive information

- Bias exploitation: Triggering unintended or harmful outputs

- Denial of service: Inputs designed to consume excessive computational resources

Mitigation strategies:

For input security:

- Implement strict input validation and sanitization

- Use content filtering before passing queries to the model

- Set rate limits and authentication requirements

- Monitor for suspicious query patterns

For output security:

- Filter model outputs before displaying to users

- Implement safety classifiers to catch harmful content

- Log outputs for audit and review

- Use confidence thresholds to flag uncertain responses

For deployment security:

- Keep model weights and infrastructure access restricted

- Implement proper authentication and authorization

- Use encryption for data in transit and at rest

- Regularly update dependencies and security patches

Privacy considerations:

Open-source deployment offers privacy advantages but requires careful management:

- Self-hosting benefits: Complete data control, no third-party access, compliance flexibility

- Self-hosting responsibilities: Secure infrastructure management, access logging, data retention policies

- API usage risks: Free or third-party APIs may log queries or responses

- Fine-tuning data: Custom training data must be protected from unauthorized access

Operational limitations:

- Support: No enterprise SLA or dedicated support team (community-based assistance only)

- Documentation: May lag behind proprietary systems in completeness and polish

- Integration: Fewer pre-built connectors and integrations than established platforms

- Stability: Rapid development may introduce breaking changes or compatibility issues

When to choose alternatives:

Consider proprietary systems if you need:

- Guaranteed uptime and enterprise support contracts

- The absolute cutting edge in conversational AI

- Extensive pre-built integrations with business tools

- Compliance certifications that require vendor attestations

- Minimal internal AI expertise for deployment and management

Edge case: Highly regulated industries (healthcare, finance, government) may face additional compliance requirements that favor established proprietary vendors with formal certification processes, even if open-source models offer technical advantages.

Risk management framework:

- Assess: Evaluate your specific security and compliance requirements

- Test: Run security audits and penetration testing on your deployment

- Monitor: Implement continuous monitoring for unusual activity

- Update: Stay current with security patches and best practices

- Document: Maintain clear records of security measures for compliance

For organizations navigating these considerations, understanding the full landscape of available solutions is critical. Our terms of service outline how we approach technology partnerships responsibly.

What Does the Future Hold for DeepSeek and Open-Source AI Competition?

The future trajectory for DeepSeek and open-source AI competition points toward continued innovation in efficiency, broader model capabilities, and intensifying competition with proprietary systems. DeepSeek’s January 2026 breakthrough in AI training methods for scaling large language models[6] suggests ongoing technical advancement that could further challenge global leaders.

Near-term developments (2026-2027):

V3.2 and beyond: DeepSeek released V3.2 in February 2026 with free access[5], though detailed specifications remain unreleased. Expect continued iteration with improved performance and efficiency.

Training method disclosure: The breakthrough training method announced in January 2026[6] may be published, potentially enabling other researchers to replicate DeepSeek’s cost advantages.

Multimodal expansion: Following industry trends, future releases likely will incorporate vision, audio, and other modalities beyond text.

Enterprise features: To compete for business users, expect improved deployment tools, monitoring capabilities, and documentation.

Medium-term trends (2027-2029):

Efficiency race: The competition will increasingly focus on cost per capability rather than raw performance. Innovations to watch:

- Quantization advances: Running large models on smaller hardware

- Distillation techniques: Creating smaller models that retain larger model capabilities

- Hardware co-design: Optimizing models for specific chip architectures

- Dynamic routing: More sophisticated MoE systems that adapt to query complexity

Ecosystem maturation: Open-source AI will develop supporting infrastructure comparable to proprietary platforms:

- Deployment platforms: Managed services for open-source model hosting

- Fine-tuning marketplaces: Pre-trained adaptations for specific industries

- Monitoring tools: Observability and debugging solutions

- Security frameworks: Standardized approaches to AI security and safety

Geopolitical factors:

The competition between Chinese and Western AI systems reflects broader technology rivalry:

- Technology decoupling: Countries may favor domestic or allied AI systems for strategic applications

- Export controls: Restrictions on AI technology transfer could fragment the global market

- Talent mobility: Immigration policies and research collaboration affect innovation pace

- Investment patterns: Government funding and private capital increasingly support regional champions

Scenarios for market evolution:

Scenario 1: Open-source dominance

- Open models match proprietary systems across all dimensions

- Proprietary vendors shift to service and integration businesses

- Commodity AI enables explosion of specialized applications

- Probability: Moderate (35-40%)

Scenario 2: Hybrid market

- Open models excel at specific tasks (reasoning, coding)

- Proprietary systems maintain edge in others (conversation, creativity)

- Organizations use mixed deployments based on use case

- Probability: High (50-55%)

Scenario 3: Proprietary resurgence

- Proprietary labs achieve breakthrough capabilities

- Regulatory requirements favor established vendors

- Enterprise features and support justify premium pricing

- Probability: Low-moderate (10-15%)

Implications for startups and developers:

Strategic positioning:

- Build on open foundations: Use open-source models for core capabilities to avoid vendor lock-in

- Plan for hybrid: Design architectures that can incorporate multiple models

- Invest in expertise: Develop internal AI capabilities rather than relying solely on external APIs

- Monitor actively: The landscape changes rapidly; quarterly reassessment is prudent

Capability roadmap:

Expect DeepSeek and competitors to target these capability gaps:

- Longer context windows: Beyond 128k tokens to handle entire codebases or documents[2]

- Better tool use: More reliable function calling and API integration

- Improved reasoning: Advances in multi-step planning and complex problem-solving

- Specialized variants: Domain-specific models for medicine, law, science, etc.

Wild cards:

Several factors could dramatically shift the competitive landscape:

- Regulatory intervention: Governments may restrict or mandate certain AI approaches

- Breakthrough architectures: New model designs that obsolete current approaches

- Energy constraints: Power consumption concerns could favor efficiency over raw capability

- Safety incidents: High-profile failures could trigger market or regulatory shifts

Actionable predictions for 2026-2027:

✅ Likely:

- DeepSeek releases at least two major model updates

- Open-source models capture 30%+ of developer mindshare

- Proprietary vendors reduce API pricing 20-40%

- Hybrid deployments become standard practice

❓ Possible:

- Open-source models achieve parity on conversational AI

- Major enterprise adopts DeepSeek for production systems

- New Chinese AI labs emerge with competitive open models

- Western labs release truly open-source frontier models

❌ Unlikely:

- Complete market dominance by either open or proprietary approaches

- Significant reduction in AI investment or innovation pace

- Global consensus on AI regulation and standards

The competition between DeepSeek R1 and V3.1 and global AI leaders represents more than technical rivalry. It reflects fundamental questions about AI development: Should advanced AI be open or controlled? Should efficiency or capability be prioritized? Can smaller budgets produce competitive results?

For now, the answer appears to be “both/and” rather than “either/or.” The market has room for proprietary systems offering premium capabilities and support alongside open alternatives providing accessibility and transparency. Developers and organizations benefit from this competition through better technology, lower costs, and more choices.

To stay informed about evolving AI solutions and how they can benefit your organization, visit our home page.

FAQ

Q: Can DeepSeek R1 and V3.1 replace ChatGPT for my business applications?

For reasoning-intensive tasks like coding, mathematics, and structured data analysis, DeepSeek models can effectively replace ChatGPT at significantly lower cost[1]. However, for general conversation, creative writing, or applications requiring the most polished user experience, ChatGPT may still offer advantages. Evaluate based on your specific use case rather than assuming universal equivalence.

Q: How much does it cost to run DeepSeek models compared to OpenAI’s API?

DeepSeek offers free API access to V3.2[5], making initial costs zero. Self-hosted deployment typically costs $500-$10,000/month depending on scale, compared to potentially $20,000+ monthly for equivalent OpenAI API usage in production applications. The exact savings depend on your query volume and optimization efforts.

Q: Are DeepSeek models safe to use in production applications?

DeepSeek models can be used safely in production with proper security measures including input validation, output filtering, access controls, and monitoring[4]. However, they lack enterprise SLAs and dedicated support, so organizations must take responsibility for security implementation and incident response.

Q: What programming languages and frameworks support DeepSeek integration?

DeepSeek models support integration through standard REST APIs, making them compatible with Python, JavaScript, Java, and other major languages. Popular frameworks like LangChain and libraries from HuggingFace provide additional integration options. The open-source nature means community-developed tools continue to expand compatibility.

Q: How does the 128,000-token context window compare to competitors?

DeepSeek’s 128,000-token context window[2] matches leading open models and exceeds many proprietary systems. This allows processing of approximately 96,000 words or 300+ pages of text in a single query, sufficient for most applications including full codebase analysis, long document processing, and extended conversations.

Q: Can I fine-tune DeepSeek models for my specific industry or use case?

Yes, the open-source nature of DeepSeek models allows full fine-tuning and customization without vendor restrictions. You can adapt the models to specific domains, terminology, or tasks using your own training data. This flexibility is a key advantage over proprietary systems that limit or charge premium fees for customization.

Q: What hardware do I need to self-host DeepSeek V3.1?

Due to the 685-billion parameter size[2], self-hosting V3.1 requires substantial GPU resources—typically 8x A100 GPUs or equivalent for reasonable inference speed. However, the MoE architecture’s efficiency (only 37 billion active parameters per token) makes it more feasible than traditional models of similar size. Cloud deployment is more practical for most organizations than purchasing dedicated hardware.

Q: How often does DeepSeek release model updates?

DeepSeek has shown rapid iteration, with R1 in January 2025, V3.1-Terminus in September 2025[3], and V3.2 in February 2026[5]. This suggests major releases every 3-6 months, though the pace may vary. The open-source community also produces optimizations and variants between official releases.

Q: Are there any restrictions on commercial use of DeepSeek models?

DeepSeek models are released as open-source, generally permitting commercial use without licensing fees. However, specific license terms should be reviewed for each model version, as some open-source AI licenses include usage restrictions or attribution requirements. The lack of commercial restrictions is a major advantage over proprietary alternatives.

Q: How does DeepSeek handle multiple languages besides English?

DeepSeek models are optimized primarily for English and Chinese, with varying performance on other languages. For applications requiring strong multilingual capabilities across many languages, specialized multilingual models or proprietary systems may offer better performance. Test thoroughly with your target languages before committing to production deployment.

Q: What’s the difference between DeepSeek R1 and V3.1?

R1 focuses specifically on reasoning capabilities using reinforcement learning and Chain-of-Thought training[1], excelling at coding and mathematics. V3.1 is a more general-purpose model with a larger Mixture-of-Experts architecture (685 billion parameters)[2] that integrates reasoning, conversation, tool use, and coding in a single hybrid system. Choose R1 for pure reasoning tasks and V3.1 for broader applications.

Q: Can DeepSeek models access the internet or use external tools?

DeepSeek V3.1 includes native tool usage capabilities[2], allowing integration with external APIs and services when properly configured. However, the models don’t have automatic internet access—developers must explicitly implement and authorize any external connections. This gives you control over what data the model can access and how it interacts with other systems.

Conclusion

DeepSeek R1 and V3.1 represent a fundamental shift in the AI landscape, demonstrating that open-source models can compete with proprietary systems while offering dramatic cost advantages and deployment flexibility. With R1 matching advanced foundation models on reasoning tasks[1] and V3.1 delivering 685-billion parameter capability at a fraction of traditional costs[2], these Chinese models challenge the assumption that cutting-edge AI requires massive budgets and vendor lock-in.

The implications extend beyond technical specifications. DeepSeek’s success—reaching #1 on Apple App Store[1] and training models for just $5.6 million[2]—proves that innovation in AI efficiency can be as valuable as raw capability improvements. For startups and developers, this opens new possibilities for building sophisticated AI applications without prohibitive costs or dependency on expensive proprietary APIs.

Key strategic takeaways:

- Reasoning excellence: DeepSeek models excel at coding, mathematics, and structured problem-solving, making them ideal for technical applications

- Cost efficiency: Mixture-of-Experts architecture and optimized training deliver competitive performance at 90%+ cost savings

- Open-source advantage: Transparency, customization freedom, and community innovation provide benefits that proprietary systems cannot match

- Practical limitations: Conversational quality and enterprise support lag behind top proprietary alternatives, requiring careful use case matching

- Security responsibility: Open deployment requires implementing proper security measures and monitoring without vendor support

Actionable next steps:

- Experiment with free access: Test DeepSeek V3.2 through the free API[5] to evaluate performance on your specific use cases

- Benchmark against alternatives: Compare DeepSeek results to proprietary systems on representative tasks before committing

- Start small: Deploy in non-critical applications first to build expertise and identify optimization opportunities

- Plan for hybrid: Design architectures that can incorporate multiple models, allowing you to use the best tool for each task

- Monitor developments: Follow DeepSeek releases and the broader open-source AI ecosystem for new capabilities and improvements

- Build internal expertise: Invest in team knowledge of AI deployment, security, and optimization rather than relying solely on external vendors

The competition between DeepSeek and global AI leaders benefits everyone in the ecosystem. Proprietary vendors must innovate faster and price more competitively. Open-source alternatives become more capable and accessible. Developers gain more choices and better economics. Organizations can build AI applications that were previously too expensive or technically complex.

As DeepSeek continues advancing—with the January 2026 training breakthrough[6] suggesting further efficiency gains ahead—the gap between open and proprietary models may narrow further. The question for developers and organizations is not whether to pay attention to open-source AI, but how quickly to incorporate these alternatives into their technology strategies.

The future of AI appears increasingly open, efficient, and competitive. DeepSeek R1 and V3.1 are not just challenging global leaders—they’re reshaping the rules of competition and expanding what’s possible for organizations of all sizes.

Ready to explore how AI solutions can transform your business? Learn more about our approach to technology innovation on our privacy policy page or connect with our team to discuss your specific needs.

References

[1] Deepseek R1 – https://builtin.com/artificial-intelligence/deepseek-r1

[2] Deepseek V31 Is Here Heres What You – https://bdtechtalks.substack.com/p/deepseek-v31-is-here-heres-what-you

[3] Chinas Deepseek Unveils New Ai Model Here Is Everything We Know About It – https://www.euronews.com/next/2025/09/30/chinas-deepseek-unveils-new-ai-model-here-is-everything-we-know-about-it

[4] Deepseek R1 Security Flaws – https://www.kelacyber.com/blog/deepseek-r1-security-flaws/

[5] deepseek – https://www.deepseek.com/en/

[6] Deepseek New Ai Training Models Scale Manifold Constrained Analysts China 2026 1 – https://www.businessinsider.com/deepseek-new-ai-training-models-scale-manifold-constrained-analysts-china-2026-1