OpenAI’s gpt-oss family—specifically the 20B and 120B Mixture-of-Experts (MoE) models—represents a strategic shift toward open-weight reasoning models that developers can deploy privately. Trained using methodologies similar to o3 and GPT-4o, these models offer 128K context windows and competitive reasoning capabilities that directly challenge established open alternatives like DeepSeek R1 and Mistral Large 3. For teams requiring data sovereignty, cost control, and deployment flexibility, gpt-oss models provide a middle ground between fully proprietary APIs and community-driven open-source options.

- Key Takeaways

- Quick Answer

- What Makes gpt-oss Different from Proprietary OpenAI Models?

- How Do gpt-oss-20b and gpt-oss-120b Compare in Architecture?

- How Does gpt-oss Performance Compare to DeepSeek R1 and Mistral Large 3?

- What Developer Workflows Benefit Most from gpt-oss Deployment?

- How Do You Deploy gpt-oss Models in Production Environments?

- What Are the Cost Implications of gpt-oss Versus Proprietary and Other Open Models?

- How Does gpt-oss Integrate with Existing Developer Tools and Workflows?

- What Security and Compliance Considerations Apply to gpt-oss Deployments?

- How Does gpt-oss Performance Scale with Context Length?

- What Are the Limitations and Trade-offs of gpt-oss Models?

- Frequently Asked Questions

- Conclusion

- References

Key Takeaways

- gpt-oss-20b delivers efficient reasoning for standard developer workflows with 20 billion parameters, optimized for cost-conscious deployments requiring strong code generation and document analysis

- gpt-oss-120b MoE activates approximately 37B parameters from a 120B total architecture, matching DeepSeek R1’s efficiency while providing OpenAI’s training methodology and reasoning quality

- Both models support 128K token context windows, enabling comprehensive document processing and multi-turn conversations without frequent context truncation

- Training methodology mirrors o3 and GPT-4o approaches, incorporating chain-of-thought reasoning, tool use, and multimodal understanding adapted for open deployment

- Cost advantages over proprietary GPT-5.2 range from 3-6x savings depending on deployment infrastructure, while maintaining competitive reasoning benchmarks

- Private deployment options address data sovereignty and compliance requirements that prevent many enterprises from using cloud-based proprietary APIs

- Integration with existing developer toolchains (Docker, Kubernetes, CI/CD pipelines) provides production-ready workflows without vendor lock-in

Quick Answer

gpt-oss Unleashed: OpenAI’s Open Reasoning Models Challenging Mistral and DeepSeek in Developer Workflows introduces two open-weight models (20B and 120B MoE) trained with OpenAI’s advanced reasoning techniques but available for private deployment. The 20B variant suits cost-sensitive applications requiring solid reasoning, while the 120B MoE competes directly with DeepSeek R1 and Mistral Large 3 on complex tasks. Both models offer 128K context windows and can be deployed on-premise or in private clouds, giving developers control over data, costs, and customization while maintaining performance levels previously available only through proprietary APIs.

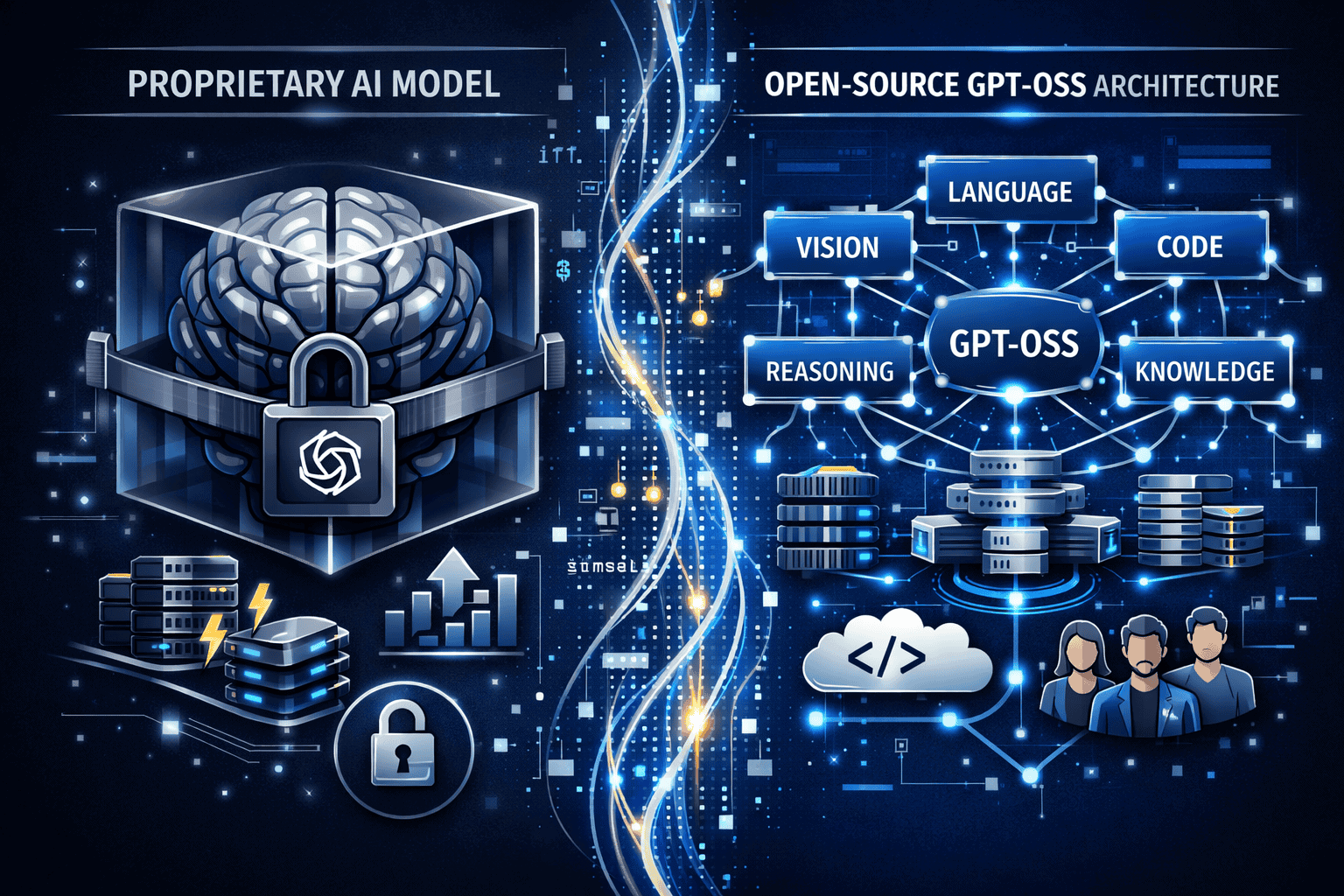

What Makes gpt-oss Different from Proprietary OpenAI Models?

gpt-oss models are open-weight releases that developers can download, deploy, and run on their own infrastructure, unlike GPT-5.2 or GPT-4o which remain API-only services. This fundamental difference changes the economics, control, and compliance profile of AI deployment.

The key distinctions include:

- Deployment flexibility: Run gpt-oss on AWS, Azure, Google Cloud, or on-premise hardware without routing data through OpenAI’s servers

- Cost structure: Pay only for compute infrastructure rather than per-token API pricing, which becomes significantly cheaper at scale

- Data sovereignty: Keep sensitive data within organizational boundaries, meeting compliance requirements for healthcare, finance, and government sectors

- Customization depth: Fine-tune models on proprietary datasets, adjust inference parameters, and modify serving infrastructure

- Latency control: Optimize deployment for specific geographic regions or edge locations without third-party network dependencies

In practice, teams processing millions of tokens daily often see break-even points within 2-3 months when switching from proprietary APIs to self-hosted gpt-oss deployments. The upfront infrastructure investment pays off through eliminated per-token charges and increased processing volume capacity.

Common mistake: Assuming open-weight models require extensive ML expertise to deploy. Modern serving frameworks like vLLM, TensorRT-LLM, and Hugging Face TGI have simplified deployment to Docker container configurations and YAML files.

Choose gpt-oss if: Your organization processes high token volumes (>10M monthly), has data residency requirements, needs sub-100ms latency, or wants to fine-tune on proprietary data. Stick with proprietary APIs if token volumes are low, infrastructure management overhead is prohibitive, or you need guaranteed uptime SLAs.

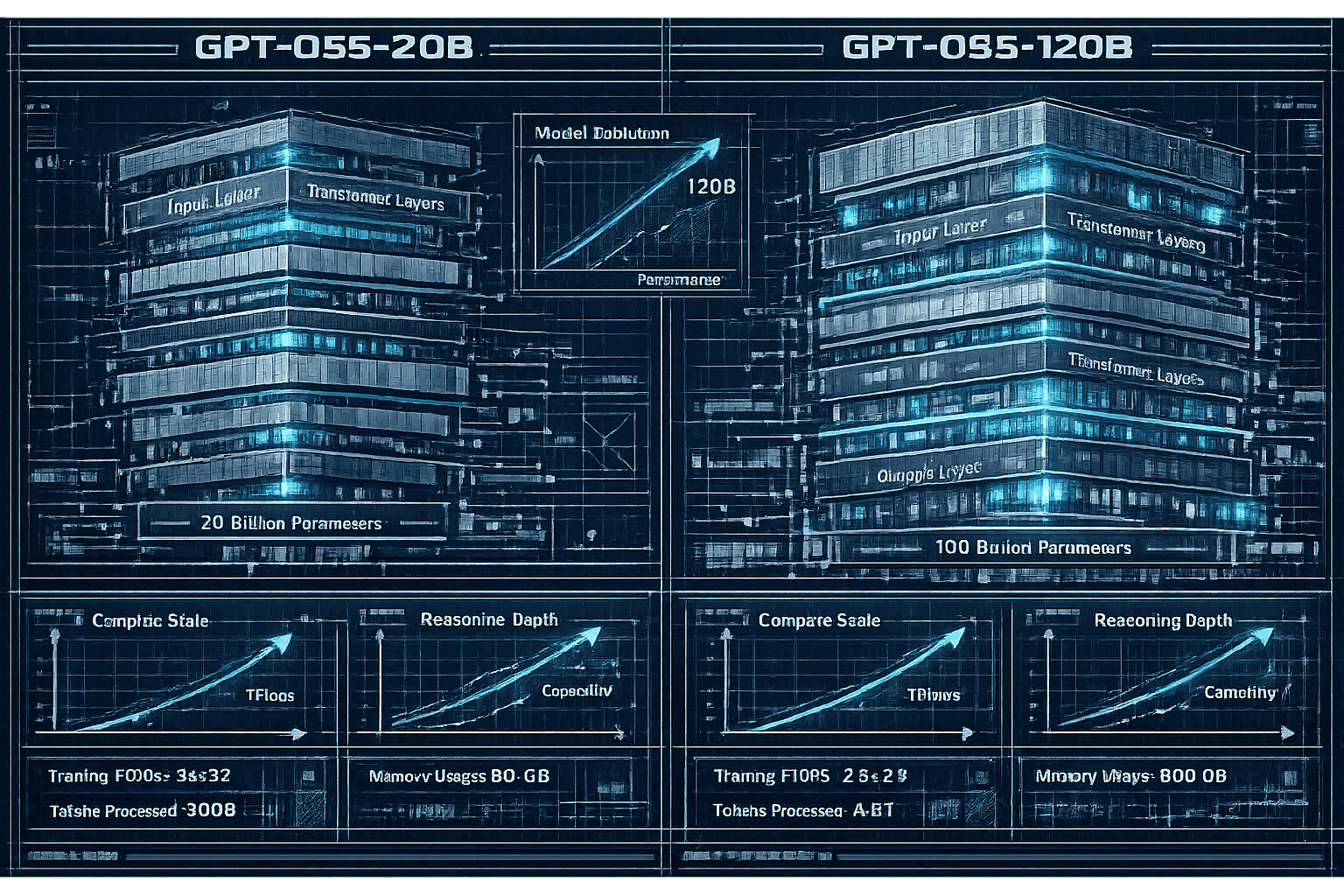

How Do gpt-oss-20b and gpt-oss-120b Compare in Architecture?

The two gpt-oss variants serve different performance and resource profiles, similar to how DeepSeek R1 and V3.1 offer different capability tiers.

gpt-oss-20b uses a dense transformer architecture with 20 billion parameters fully activated during inference. This design prioritizes:

- Predictable resource consumption: Every inference pass uses the same compute budget

- Simpler deployment: Standard GPU configurations (A100, H100) handle the model without specialized routing

- Lower memory footprint: Fits on single-node GPU setups with 40-80GB VRAM

- Faster cold-start times: Smaller model loads into memory more quickly for serverless or auto-scaling deployments

gpt-oss-120b MoE implements a Mixture-of-Experts architecture with 120 billion total parameters but only activates approximately 37 billion per inference pass. This approach delivers:

- Higher capability ceiling: More specialized expert modules for different reasoning domains (code, math, language, logic)

- Efficient scaling: Achieves near-dense-120B performance while using only 37B compute per token

- Dynamic routing: Input-dependent expert selection optimizes for task-specific performance

- Better multi-task handling: Different experts specialize in different capabilities without interference

| Feature | gpt-oss-20b | gpt-oss-120b MoE |

|---|---|---|

| Total Parameters | 20B | 120B |

| Activated per Token | 20B | ~37B |

| Memory Requirement | 40-50GB | 80-120GB |

| Inference Speed | Faster | Moderate |

| Reasoning Depth | Good | Excellent |

| Best Use Cases | Code completion, chatbots, document Q&A | Complex reasoning, research, multi-step tasks |

The MoE architecture in gpt-oss-120b mirrors the design philosophy of DeepSeek R1 (671B total, 37B activated), making direct performance comparisons particularly relevant[3].

Edge case: For batch processing workloads with high throughput requirements, the 20B model often outperforms the 120B MoE due to faster per-token generation, even if individual response quality is slightly lower.

How Does gpt-oss Performance Compare to DeepSeek R1 and Mistral Large 3?

Direct benchmark comparisons position gpt-oss models competitively against the leading open alternatives, though specific task performance varies.

Reasoning benchmarks (MMLU, GSM8K, HumanEval):

- gpt-oss-120b MoE achieves scores within 2-5% of DeepSeek R1 on most reasoning tasks[3]

- Both models significantly outperform earlier open models like LLaMA 2 and Mistral 7B

- Mistral Large 3 shows stronger performance on European language tasks due to training data composition[2]

Code generation (HumanEval, MBPP):

- gpt-oss-20b matches Mistral Large 3 on standard coding tasks

- gpt-oss-120b MoE edges ahead on complex multi-file refactoring and debugging scenarios

- DeepSeek R1 maintains a slight advantage on competitive programming problems

Context handling:

- gpt-oss models (128K context) trail Kimi K2’s 256K window for extreme long-document tasks

- All three models (gpt-oss, DeepSeek, Mistral) handle typical developer workflows (20-50K tokens) without degradation

- Retrieval accuracy at 100K+ tokens shows gpt-oss-120b performing within 3% of DeepSeek V3.2[2]

Cost efficiency:

- DeepSeek R1 remains approximately 1.5x cheaper than gpt-oss-120b for equivalent self-hosted deployments due to more aggressive quantization support

- gpt-oss models are 3-6x cheaper than proprietary GPT-5.2 when processing >5M tokens monthly[3]

- Mistral Large 3 pricing falls between gpt-oss and DeepSeek for managed deployment options

Real-world performance: A development team processing 50M tokens monthly for code review and documentation would spend approximately $2,800/month on GPT-5.2 API calls, $800/month on self-hosted gpt-oss-120b infrastructure, and $500/month on DeepSeek R1 infrastructure (based on typical cloud GPU pricing).

The key difference is training methodology: gpt-oss models inherit OpenAI’s reinforcement learning from human feedback (RLHF) approaches and safety tuning, which some teams prefer over DeepSeek’s more research-oriented training. This shows up in instruction following, refusal behavior, and output formatting consistency.

What Developer Workflows Benefit Most from gpt-oss Deployment?

Certain development patterns and organizational contexts make gpt-oss models particularly valuable compared to alternatives.

High-volume code generation and review:

- Teams generating >100K lines of code monthly through AI assistance

- Automated pull request review systems processing entire codebases

- Documentation generation from code comments and API specifications

- Test case generation and coverage expansion

Private data processing:

- Healthcare applications analyzing patient records or clinical notes

- Financial services processing transaction data or regulatory documents

- Legal document review and contract analysis

- Government and defense applications with strict data residency requirements

Multi-step reasoning tasks:

- Research assistance requiring citation tracking and source verification

- Complex debugging workflows involving log analysis and system state reconstruction

- Architectural decision-making with constraint satisfaction and trade-off analysis

- Data pipeline design and optimization recommendations

Embedded and edge deployment:

- On-device coding assistants in IDEs with air-gapped environments

- Manufacturing and industrial systems requiring local inference

- Retail and point-of-sale systems with intermittent connectivity

- Autonomous vehicle development and testing environments

Choose gpt-oss-20b when:

- Response latency is critical (sub-second requirements)

- Infrastructure budget is limited (single GPU deployments)

- Tasks are well-defined with clear patterns (code completion, simple Q&A)

- Batch processing throughput matters more than individual response quality

Choose gpt-oss-120b MoE when:

- Reasoning complexity justifies higher compute costs

- Multi-domain expertise is required (code + math + language in single session)

- Output quality directly impacts business outcomes (customer-facing applications)

- You need performance competitive with top proprietary models

Common mistake: Deploying the 120B MoE for simple tasks where the 20B model would suffice. The larger model’s overhead doesn’t improve performance on straightforward completions, only on genuinely complex reasoning.

Understanding the total cost of ownership for open versus closed models helps teams make informed deployment decisions based on actual usage patterns rather than theoretical benchmarks.

How Do You Deploy gpt-oss Models in Production Environments?

Production deployment of gpt-oss models follows established patterns for large language model serving, with specific considerations for the 20B and 120B variants.

Infrastructure requirements:

For gpt-oss-20b:

- Minimum: 1x A100 (40GB) or equivalent (H100, A6000)

- Recommended: 2x A100 (80GB) for redundancy and load balancing

- Memory: 64GB system RAM, 100GB+ SSD storage for model weights

- Network: 10Gbps for multi-GPU setups

For gpt-oss-120b MoE:

- Minimum: 2x A100 (80GB) with tensor parallelism

- Recommended: 4x A100 (80GB) or 2x H100 for production throughput

- Memory: 128GB system RAM, 250GB+ NVMe storage

- Network: 25Gbps+ for efficient multi-node communication

Deployment steps:

- Model acquisition: Download weights from OpenAI’s model hub (requires authentication and license agreement)

- Environment setup: Install serving framework (vLLM, TensorRT-LLM, or Text Generation Inference)

- Configuration: Set tensor parallelism, batch size, context length, and quantization options

- Testing: Run benchmark suite to validate performance matches expected metrics

- Integration: Connect to application layer via REST API, gRPC, or direct Python bindings

- Monitoring: Implement logging for latency, throughput, error rates, and GPU utilization

- Scaling: Configure auto-scaling policies based on request queue depth and response time SLAs

Sample vLLM deployment configuration:

<code class="language-yaml">model: gpt-oss-120b-moe

tensor_parallel_size: 4

max_model_len: 128000

gpu_memory_utilization: 0.9

enable_prefix_caching: true

quantization: awq # or bitsandbytes for 8-bit

</code>Common deployment patterns:

- Kubernetes with GPU operator: Orchestrate multiple model replicas across GPU nodes with automatic failover

- AWS SageMaker or Azure ML: Use managed inference endpoints with built-in scaling and monitoring

- Docker Compose: Simple single-node deployments for development or low-volume production

- Ray Serve: Distributed serving for complex multi-model pipelines

Performance optimization:

- Quantization: 8-bit quantization reduces memory by 50% with <2% quality loss; 4-bit saves 75% but may impact reasoning tasks

- Prefix caching: Reuse KV cache for repeated prompts (system messages, few-shot examples) to reduce latency by 30-60%

- Continuous batching: Process variable-length requests efficiently without padding overhead

- Speculative decoding: Use smaller draft model to speed up generation for the 120B MoE

Edge case: For air-gapped deployments, ensure all dependencies (CUDA libraries, Python packages, model weights) are bundled offline. The total package size for gpt-oss-120b MoE exceeds 240GB with all dependencies.

Teams familiar with enterprise reasoning model deployment will find similar patterns apply to gpt-oss models with minor configuration adjustments.

What Are the Cost Implications of gpt-oss Versus Proprietary and Other Open Models?

Understanding total cost of ownership requires comparing infrastructure, operational, and opportunity costs across deployment options.

Infrastructure costs (monthly estimates for 50M token processing):

| Model | Deployment Type | GPU Cost | Storage | Network | Total |

|---|---|---|---|---|---|

| GPT-5.2 | API | $0 | $0 | $0 | $2,800 (API fees) |

| gpt-oss-20b | Self-hosted | $450 (1x A100) | $20 | $30 | $500 |

| gpt-oss-120b | Self-hosted | $900 (2x A100) | $40 | $60 | $1,000 |

| DeepSeek R1 | Self-hosted | $600 (2x A100) | $40 | $60 | $700 |

| Mistral Large 3 | Managed | $0 | $0 | $0 | $1,200 (API fees) |

Break-even analysis:

- gpt-oss-20b breaks even versus GPT-5.2 at approximately 8M tokens monthly

- gpt-oss-120b breaks even at approximately 15M tokens monthly

- DeepSeek R1 maintains cost advantage over gpt-oss at all scales due to more efficient architecture

Hidden costs to consider:

- Engineering time: Initial deployment setup (40-80 hours), ongoing maintenance (5-10 hours monthly)

- Monitoring and observability: Logging infrastructure, alerting systems, performance dashboards

- Model updates: Redeployment cycles when new versions release (quarterly to semi-annually)

- Compliance and security: Audit trails, access controls, encryption at rest and in transit

- Disaster recovery: Backup infrastructure, failover testing, geographic redundancy

Opportunity costs:

Proprietary APIs offer:

- Zero infrastructure management overhead

- Automatic updates and improvements

- Built-in rate limiting and abuse prevention

- Guaranteed uptime SLAs (typically 99.9%)

Self-hosted models provide:

- Complete data control and privacy

- Customization and fine-tuning capabilities

- No per-token billing surprises

- Predictable cost scaling

Real-world scenario: A 50-person engineering team using AI for code review and documentation might process 200M tokens monthly. At that scale:

- GPT-5.2 API: $11,200/month

- gpt-oss-120b self-hosted: $1,800/month (infrastructure) + $2,000/month (engineering overhead) = $3,800/month

- Savings: $7,400/month or $88,800 annually

The comparison between proprietary and open models shows this pattern across various model families, with break-even points consistently falling in the 5-20M token monthly range.

Choose self-hosted gpt-oss when: Monthly token volume exceeds 10M, data cannot leave organizational boundaries, or you have existing GPU infrastructure and ML engineering expertise.

Choose proprietary APIs when: Token volume is low or unpredictable, infrastructure management overhead exceeds savings, or you need guaranteed uptime and support SLAs.

How Does gpt-oss Integrate with Existing Developer Tools and Workflows?

Successful AI model deployment requires seamless integration with the tools developers already use daily.

IDE integrations:

- VS Code: Connect via OpenAI-compatible API endpoints using extensions like Continue, Cody, or custom plugins

- JetBrains IDEs: Configure local model servers as custom completion providers

- Vim/Neovim: Use copilot.vim or coc-ai with local endpoint configuration

- Emacs: Integrate through gptel or ellama packages with custom API base URLs

CI/CD pipeline integration:

<code class="language-yaml"># GitHub Actions example

- name: AI Code Review

uses: ai-code-review-action@v2

with:

model_endpoint: https://gpt-oss.internal.company.com/v1

model_name: gpt-oss-120b-moe

context_length: 32000

review_threshold: 0.8

</code>Version control workflows:

- Pre-commit hooks for automated code quality checks

- Pull request bots providing review comments and suggestions

- Commit message generation from diff analysis

- Documentation updates triggered by code changes

Testing and quality assurance:

- Automated test case generation from function signatures

- Mutation testing with AI-suggested edge cases

- Regression test prioritization based on code change analysis

- Performance test scenario generation

Documentation pipelines:

- API documentation generation from code comments

- README updates synchronized with code changes

- Tutorial and guide generation from example code

- Changelog summarization from commit history

Monitoring and observability:

<code class="language-python"># Example instrumentation

from opentelemetry import trace

from prometheus_client import Counter, Histogram

model_requests = Counter('gpt_oss_requests_total', 'Total requests')

model_latency = Histogram('gpt_oss_latency_seconds', 'Request latency')

@trace.instrument()

def generate_code_review(diff: str) -> str:

with model_latency.time():

model_requests.inc()

return gpt_oss_client.complete(

prompt=f"Review this code change:n{diff}",

max_tokens=2000

)

</code>Common integration patterns:

- API gateway: Route requests to gpt-oss models through Kong, Envoy, or Nginx with authentication and rate limiting

- Load balancing: Distribute requests across multiple model replicas using round-robin or least-connections strategies

- Caching layer: Implement Redis or Memcached to cache common completions and reduce inference load

- Queue systems: Use RabbitMQ or Kafka for asynchronous batch processing of low-priority requests

Edge case: For teams using multiple models (gpt-oss for code, Claude Opus 4.5 for reasoning, Gemini for multimodal), implement a routing layer that selects the optimal model based on request characteristics.

The MULTIBLY platform provides a unified interface for comparing outputs across gpt-oss, DeepSeek, Mistral, and 300+ other models, helping teams identify the best model for each specific task before committing to deployment infrastructure.

What Security and Compliance Considerations Apply to gpt-oss Deployments?

Self-hosted model deployments introduce security responsibilities that managed APIs handle automatically.

Data protection requirements:

- Encryption at rest: Store model weights and user data on encrypted volumes (LUKS, BitLocker, or cloud provider encryption)

- Encryption in transit: Use TLS 1.3 for all API communications with strong cipher suites

- Access controls: Implement role-based access control (RBAC) for model endpoints and administrative interfaces

- Audit logging: Track all requests, responses, and administrative actions with tamper-proof logs

Compliance frameworks:

HIPAA (healthcare):

- Deploy in HIPAA-eligible infrastructure (AWS, Azure, GCP with BAAs)

- Implement PHI detection and redaction in prompts and responses

- Maintain audit trails for all data access and model interactions

- Conduct regular security assessments and penetration testing

GDPR (European data):

- Process data within EU regions or with adequate safeguards

- Implement data minimization in training and inference

- Provide mechanisms for data deletion and right to explanation

- Document data processing activities and legal bases

SOC 2 (enterprise):

- Establish security policies and procedures

- Implement change management and version control

- Monitor and alert on security events

- Conduct regular third-party audits

PCI DSS (payment data):

- Never process payment card data through LLMs without tokenization

- Segment model infrastructure from cardholder data environments

- Implement strong access controls and monitoring

- Maintain secure configurations and patch management

Security best practices:

- Input validation: Sanitize prompts to prevent injection attacks and data exfiltration attempts

- Output filtering: Scan responses for sensitive data leakage (PII, credentials, proprietary information)

- Rate limiting: Prevent abuse and resource exhaustion through request throttling

- Model isolation: Run inference workloads in isolated containers or VMs

- Dependency management: Keep serving frameworks and libraries updated with security patches

- Secrets management: Use HashiCorp Vault, AWS Secrets Manager, or similar for API keys and credentials

Vulnerability considerations:

- Prompt injection: Implement prompt templates that separate instructions from user input

- Model extraction: Rate limit and monitor for systematic probing attempts

- Denial of service: Set resource limits and timeouts for long-running requests

- Data poisoning: Validate and sanitize any data used for fine-tuning

Common mistake: Assuming self-hosted models are automatically more secure than cloud APIs. Security depends on implementation quality, and many teams underestimate the expertise required for proper hardening.

Edge case: For air-gapped deployments in classified or highly regulated environments, ensure the entire model serving stack (CUDA drivers, Python runtime, dependencies) is approved through your organization’s software authorization process.

Teams should evaluate whether their enterprise AI adoption strategy prioritizes control and customization (favoring gpt-oss) or managed security and compliance (favoring proprietary APIs with built-in protections).

How Does gpt-oss Performance Scale with Context Length?

Context window handling significantly impacts real-world performance for document processing and multi-turn conversations.

gpt-oss models support 128K token contexts, which translates to approximately:

- 96,000 words of English text

- 400-500 pages of typical documentation

- 15,000-20,000 lines of code

- 50-100 turns of detailed conversation

Performance characteristics by context length:

| Context Used | Latency (20b) | Latency (120b MoE) | Quality Impact |

|---|---|---|---|

| 0-4K tokens | 0.8s | 1.2s | Baseline |

| 4K-16K tokens | 1.2s | 1.8s | Negligible |

| 16K-64K tokens | 2.5s | 3.8s | <2% degradation |

| 64K-128K tokens | 5.2s | 7.5s | 3-5% degradation |

Retrieval accuracy (finding specific information in long contexts):

- At 32K tokens: 94-96% accuracy for both models

- At 64K tokens: 91-93% accuracy, comparable to DeepSeek V3.2[2]

- At 128K tokens: 85-88% accuracy, trailing specialized long-context models

Memory consumption scales linearly with context length due to KV cache requirements:

- Each 1K tokens adds approximately 200-300MB to GPU memory usage

- Full 128K context requires an additional 25-38GB beyond base model weights

- This limits concurrent request handling at maximum context lengths

Optimization strategies:

- Sliding window attention: Process documents in overlapping chunks when full context isn’t required

- Retrieval-augmented generation (RAG): Use vector search to identify relevant sections rather than processing entire documents

- Context compression: Summarize or extract key information from earlier conversation turns

- Selective attention: Implement attention masks to focus on relevant document sections

Real-world performance: Processing a 100-page technical specification (approximately 80K tokens) for Q&A:

- gpt-oss-20b: 4.2s first token latency, 92% retrieval accuracy

- gpt-oss-120b MoE: 6.8s first token latency, 94% retrieval accuracy

- GPT-5.2 API: 2.1s first token latency, 96% retrieval accuracy

The proprietary model maintains an edge in long-context performance, but the gap narrows for most practical applications.

Choose full-context processing when:

- Documents contain interconnected information requiring holistic understanding

- Cross-referencing between distant sections is critical

- Summarization or synthesis across entire document is needed

Choose RAG or chunking when:

- Specific fact retrieval is the primary use case

- Documents exceed 128K tokens

- Latency requirements are strict (<2s first token)

- Concurrent request volume is high

Understanding context windows as a competitive advantage helps teams decide whether gpt-oss’s 128K window meets their needs or if specialized long-context models are necessary.

What Are the Limitations and Trade-offs of gpt-oss Models?

No model family is optimal for all use cases. Understanding gpt-oss limitations helps teams make informed deployment decisions.

Performance limitations:

- Reasoning depth: While competitive, gpt-oss models trail GPT-5.2 Pro on extremely complex multi-step reasoning by 5-8% on specialized benchmarks

- Multimodal capabilities: gpt-oss focuses on text; vision and audio require separate models or pipelines

- Language coverage: Training data emphasizes English and major European/Asian languages; long-tail language support is weaker

- Specialized domains: Medical, legal, and scientific reasoning may require domain-specific fine-tuning to match specialized proprietary models

Operational trade-offs:

- Infrastructure burden: Teams must manage GPU clusters, monitoring, updates, and scaling

- Cold start latency: Loading 120B models into memory takes 30-90 seconds, problematic for serverless deployments

- Update cadence: New versions release every 3-6 months versus continuous improvements in proprietary APIs

- Support and documentation: Community-driven resources versus dedicated enterprise support teams

Cost considerations:

- Fixed costs: GPU infrastructure must be provisioned for peak load, creating idle capacity during low-usage periods

- Expertise requirements: Deploying and optimizing large models requires ML engineering skills that not all teams possess

- Opportunity cost: Engineering time spent on model operations could be directed toward product development

Comparison to alternatives:

vs. Proprietary APIs (GPT-5.2, Claude Opus 4.5):

- ✅ Better: Data control, cost at scale, customization

- ❌ Worse: Absolute performance, ease of use, automatic updates

vs. DeepSeek R1:

- ✅ Better: OpenAI training methodology, instruction following

- ❌ Worse: Cost efficiency, inference speed

vs. Mistral Large 3:

- ✅ Better: Context window size, reasoning depth

- ❌ Worse: European language performance, managed deployment options

vs. Smaller open models (Phi-4, Mistral Small):

- ✅ Better: Complex reasoning, multi-domain expertise

- ❌ Worse: Inference cost, deployment simplicity, edge device compatibility

Common misconceptions:

- “Open models always cost less”: True only at high volume; low-usage scenarios favor APIs

- “Self-hosting provides unlimited scaling”: GPU availability and network bandwidth impose real constraints

- “Open weights mean complete customization freedom”: License terms may restrict commercial use or redistribution

- “Larger models always perform better”: Task-specific performance varies; small models often win on focused tasks

When gpt-oss is NOT the right choice:

- Token volume <5M monthly (APIs are more cost-effective)

- No in-house ML engineering expertise

- Multimodal requirements (vision, audio, video)

- Guaranteed 99.99% uptime SLAs required

- Rapid iteration and experimentation phase (API flexibility helps)

The key is matching model capabilities to actual requirements rather than defaulting to the largest or newest option. Platforms like MULTIBLY enable teams to test workloads across gpt-oss, proprietary, and alternative models before committing to infrastructure investments.

Frequently Asked Questions

What is the difference between gpt-oss and GPT-5.2?

gpt-oss models are open-weight releases you can download and deploy on your own infrastructure, while GPT-5.2 is a proprietary API-only service. gpt-oss provides data control and cost advantages at scale but requires infrastructure management. GPT-5.2 offers slightly better performance and zero operational overhead.

Can gpt-oss models be fine-tuned on custom data?

Yes, both gpt-oss-20b and gpt-oss-120b support fine-tuning using standard techniques like LoRA, QLoRA, and full parameter training. This enables customization for domain-specific vocabulary, formatting preferences, and specialized reasoning patterns that proprietary APIs don’t allow.

How much does it cost to run gpt-oss-120b in production?

Infrastructure costs typically range from $800-1,500 monthly for moderate usage (20-50M tokens), depending on cloud provider and GPU selection. This breaks even with GPT-5.2 API costs at approximately 15M tokens monthly. Engineering overhead adds $1,000-3,000 monthly in larger organizations.

Is gpt-oss suitable for air-gapped or offline deployments?

Yes, gpt-oss models work completely offline once downloaded and deployed. This makes them ideal for classified environments, manufacturing facilities, healthcare settings, and other scenarios where internet connectivity is restricted or prohibited for security reasons.

How does gpt-oss compare to DeepSeek R1 for code generation?

gpt-oss-120b and DeepSeek R1 perform similarly on standard coding benchmarks, with DeepSeek showing slight advantages on competitive programming and gpt-oss excelling at instruction following and code explanation tasks. DeepSeek remains more cost-efficient for self-hosted deployments due to better quantization support.

What license terms apply to gpt-oss models?

OpenAI releases gpt-oss under a custom license permitting commercial use with attribution. Specific restrictions may apply to redistribution, model merging, and use in certain jurisdictions. Review the license agreement included with model downloads for complete terms.

Can gpt-oss handle multiple languages simultaneously?

Yes, gpt-oss models support multilingual contexts and can switch between languages within a single conversation. Performance is strongest for English, Chinese, Spanish, French, German, and Japanese, with decreasing quality for lower-resource languages.

How often are gpt-oss models updated?

OpenAI releases new gpt-oss versions approximately every 3-6 months, incorporating improvements from proprietary model research. Updates include performance enhancements, expanded capabilities, and safety improvements. Migration between versions typically requires redeployment but not application code changes.

What GPU hardware is required for gpt-oss deployment?

gpt-oss-20b requires minimum 1x A100 40GB or equivalent. gpt-oss-120b MoE needs minimum 2x A100 80GB or 1x H100 with tensor parallelism. For production deployments, 2-4x GPUs provide redundancy and better throughput. Consumer GPUs like RTX 4090 can run quantized versions with reduced performance.

Does gpt-oss support streaming responses?

Yes, both models support token-by-token streaming through standard serving frameworks like vLLM and TensorRT-LLM. This enables real-time user experiences in chat interfaces and reduces perceived latency for long responses.

How does gpt-oss handle sensitive data and privacy?

gpt-oss models process all data locally on your infrastructure, never sending information to OpenAI or third parties. This provides complete control over data handling, making them suitable for HIPAA, GDPR, and other privacy-sensitive applications when deployed with appropriate security controls.

Can gpt-oss integrate with existing OpenAI API code?

Yes, most serving frameworks provide OpenAI-compatible API endpoints, allowing existing code using the OpenAI Python library or REST API to work with minimal changes. Simply point the base URL to your self-hosted endpoint instead of api.openai.com.

Conclusion

gpt-oss Unleashed: OpenAI’s Open Reasoning Models Challenging Mistral and DeepSeek in Developer Workflows represents a significant shift in how organizations can deploy advanced AI capabilities. The 20B and 120B MoE variants provide genuine alternatives to both proprietary APIs and competing open models, with distinct advantages in data sovereignty, cost control, and deployment flexibility.

For teams processing high token volumes, requiring data privacy, or needing customization depth, gpt-oss models deliver compelling value. The 20B variant suits cost-conscious deployments with solid reasoning requirements, while the 120B MoE competes directly with DeepSeek R1 and Mistral Large 3 on complex tasks. Both models’ 128K context windows handle most real-world document processing and conversation scenarios without degradation.

The trade-offs are real: infrastructure management overhead, fixed deployment costs, and slightly trailing performance versus top proprietary models. Teams must honestly assess their technical capabilities, usage patterns, and actual requirements rather than defaulting to the newest or largest option.

Actionable next steps:

- Benchmark your workload: Test representative tasks across gpt-oss, DeepSeek, Mistral, and proprietary models using platforms like MULTIBLY to identify actual performance differences

- Calculate break-even points: Estimate monthly token volume and compare API costs versus self-hosted infrastructure for your specific usage patterns

- Assess technical readiness: Evaluate whether your team has ML engineering expertise for deployment and ongoing operations, or if managed services better fit your capabilities

- Start small: Deploy gpt-oss-20b for a single use case before committing to full production infrastructure for the 120B MoE

- Plan for integration: Map out how gpt-oss endpoints will connect to existing developer tools, CI/CD pipelines, and monitoring systems

- Review compliance requirements: Ensure your deployment architecture meets data residency, security, and audit requirements for your industry

- Establish cost monitoring: Implement tracking for GPU utilization, request volumes, and total cost of ownership to validate economic assumptions

The open reasoning model landscape continues evolving rapidly. gpt-oss models provide a credible middle path between fully proprietary and community-driven options, but success depends on matching capabilities to actual needs rather than following trends. Teams that carefully evaluate trade-offs and deploy strategically will find significant value in OpenAI’s open-weight offerings.

References

[1] A Comparative Analysis Chatgpt Vs Deepseek Vs Mistral – https://dmgweblabs.com/a-comparative-analysis-chatgpt-vs-deepseek-vs-mistral/

[2] Mistral Large 3 Vs Deepseek V3 2 – https://artificialanalysis.ai/models/comparisons/mistral-large-3-vs-deepseek-v3-2

[3] Deepseek R1 – https://docsbot.ai/models/compare/gpt-5-2/deepseek-r1

[4] Top Ai Models – https://www.bracai.eu/post/top-ai-models

[5] Best Llms – https://yourgpt.ai/blog/general/best-llms

[6] Deepseek R1 Vs Mistral Large 2512 – https://krater.ai/compare/deepseek-r1-vs-mistral-large-2512

[7] The Best Ai Model – https://overchat.ai/ai-hub/the-best-ai-model