xAI’s Grok 4 represents a fundamental shift in how organizations approach AI deployment, combining uncensored reasoning capabilities with real-time data access from X (formerly Twitter) to power dynamic, context-aware applications. Deploying it well in 2026 means understanding both the technical advantages of its training approach and the governance frameworks needed to use it responsibly across enterprise environments.

The model’s public beta launch in February 2026[3] marked a turning point for teams seeking AI systems that can process nuanced, real-world scenarios without excessive content filtering while maintaining accountability. For organizations evaluating whether Grok 4 fits their needs, the key question isn’t just about capability—it’s about matching the right deployment strategy to specific use cases while establishing clear ethical guardrails.

- Key Takeaways

- Quick Answer

- What Makes Grok 4's Uncensored Approach Different from Traditional AI Models?

- How Does Real-Time X Data Integration Enable Dynamic Applications?

- What Are the Most Effective Real-World Applications for xAI's Grok 4 Uncensored in 2026?

- How Should Organizations Approach Ethical Deployment of Grok 4?

- What Deployment Strategies Work Best for Agentic Workflows?

- How Does Grok 4 Compare to Other Leading Models for Specific Use Cases?

- What Are the Key Challenges and Limitations of Deploying Grok 4?

- FAQ

- Conclusion

- References

Key Takeaways

- Grok 4’s uncensored training enables more nuanced reasoning on controversial or complex topics compared to heavily filtered models, making it valuable for research, journalism, and policy analysis

- Real-time X data integration provides unique advantages for social listening, trend analysis, and applications requiring current event awareness beyond static training cutoffs

- The 2-million token context window allows processing of extensive documents, multi-turn conversations, and complex agentic workflows without losing coherence[1]

- Ethical deployment requires layered controls—combining model capabilities with application-level filtering, human oversight, and clear use-case boundaries

- Best fit for organizations that need transparent reasoning on sensitive topics, real-time social intelligence, or long-context document processing

- Not suitable for consumer-facing applications requiring strict content moderation or industries with zero-tolerance compliance requirements

- Deployment success depends on establishing clear governance frameworks, training teams on responsible use, and implementing monitoring systems

- Cost considerations favor teams already invested in the X ecosystem or those requiring capabilities unavailable in more restrictive models

Quick Answer

xAI’s Grok 4 Uncensored delivers practical value through three core advantages: uncensored reasoning that handles nuanced topics without excessive filtering, real-time access to X platform data for current awareness, and a massive 2-million token context window for complex workflows. Organizations deploy it successfully by establishing clear ethical boundaries at the application layer—using the model’s capabilities where transparency matters (research, analysis, content creation) while adding appropriate guardrails based on specific use cases. The key is matching Grok 4’s strengths to problems where other models fall short, not using it as a general-purpose replacement.

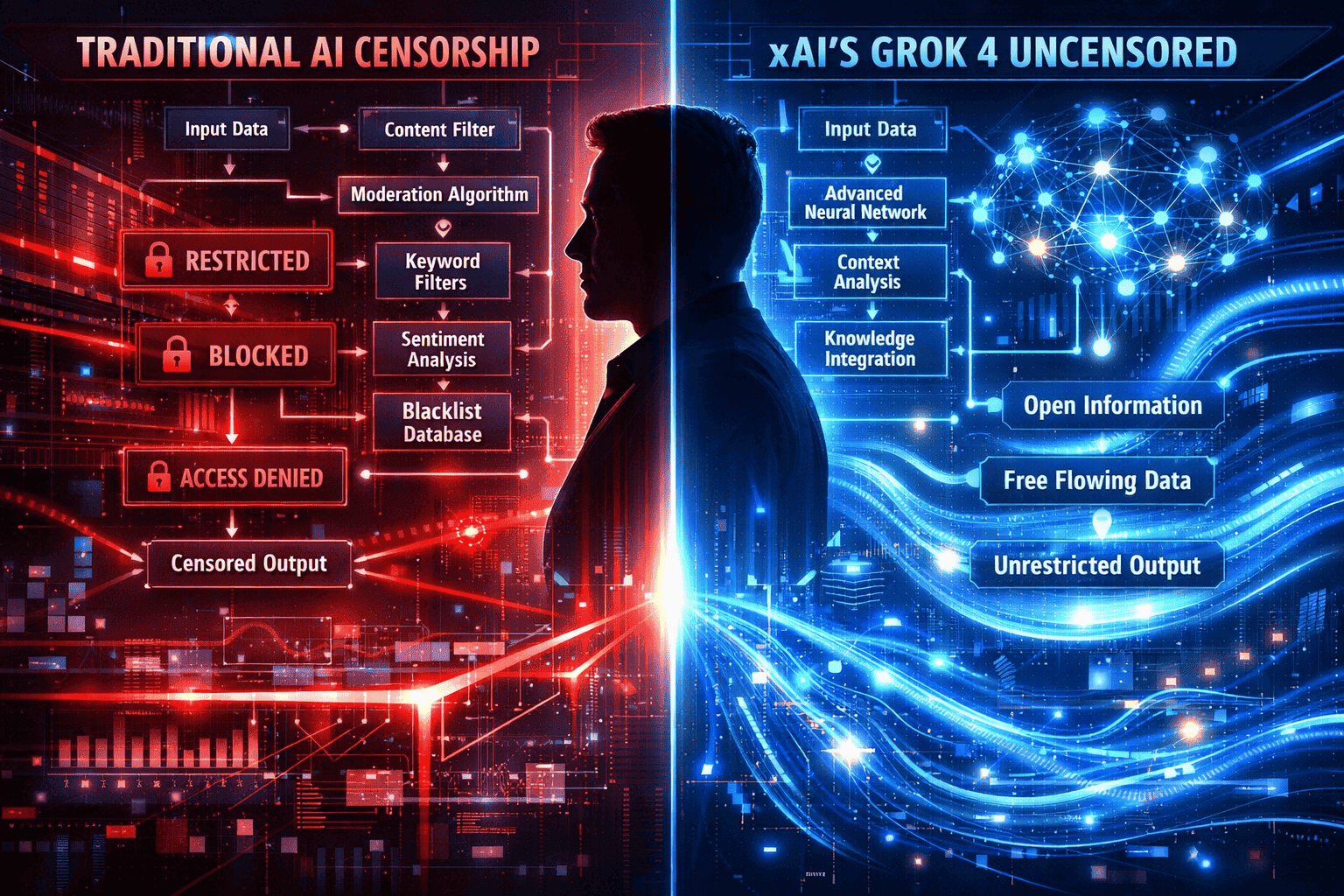

What Makes Grok 4’s Uncensored Approach Different from Traditional AI Models?

Grok 4’s uncensored training philosophy means the model learns from a broader range of perspectives and content types without aggressive pre-filtering or alignment techniques that can limit reasoning about controversial topics. This creates an AI system that provides more direct responses to sensitive queries while maintaining factual grounding.

In practice, traditional models like GPT-4 or Claude apply extensive content policies during training and inference, often refusing to engage with topics deemed controversial. Grok 4 takes a different path—it will reason through complex scenarios involving politics, ethics, or contested facts without defaulting to refusal responses.

Key differences include:

- Response directness: Grok 4 answers questions about controversial topics with analysis rather than deflection

- Reasoning transparency: The model explains multiple perspectives on contested issues instead of presenting a single “safe” viewpoint

- Reduced refusal rates: Fewer instances of “I cannot help with that” responses for legitimate research or analysis queries

- Training data diversity: Exposure to wider opinion ranges from X platform discussions and real-world discourse

When this matters most:

Choose Grok 4 when your use case requires analyzing polarizing topics, understanding diverse viewpoints, or researching subjects where other models consistently refuse engagement. For example, policy researchers analyzing public sentiment on contentious legislation benefit from a model that can process and summarize opposing arguments without bias toward “safe” middle-ground positions.

Common mistake to avoid:

Don’t confuse “uncensored” with “unfiltered misinformation.” Grok 4 is still built for factual grounding; it simply engages with sensitive topics more directly. Like any large language model, though, it can still produce inaccurate claims, so teams need verification steps for factual work and application-layer controls for consumer-facing deployments.

How Does Real-Time X Data Integration Enable Dynamic Applications?

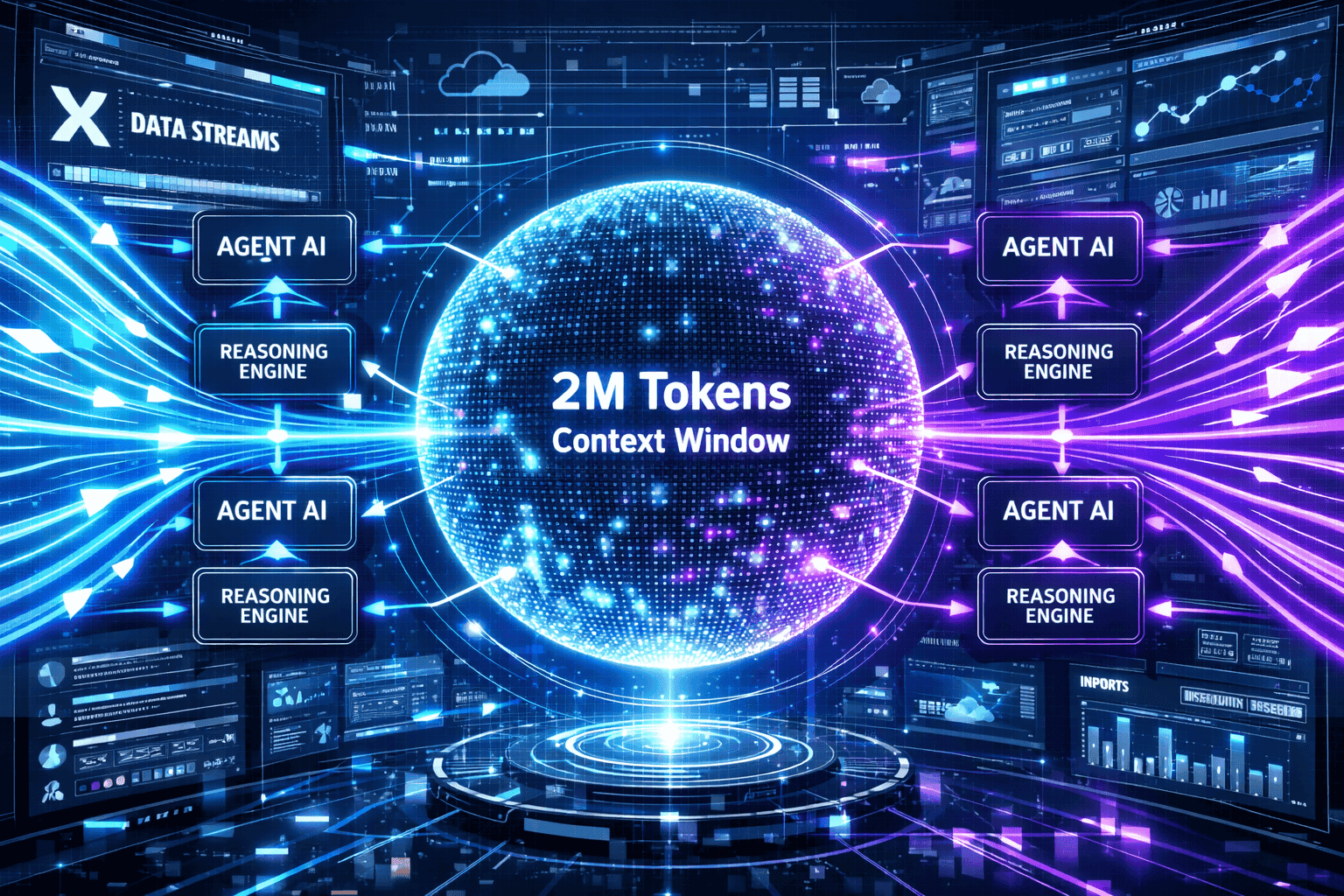

Grok 4’s direct connection to X platform data streams provides access to conversations, trends, and events happening right now—not months ago when training data was collected. This real-time awareness transforms applications that depend on current information.

The integration works by allowing Grok 4 to query recent posts, trending topics, and user discussions as part of its reasoning process. When you ask about breaking news or current sentiment on an issue, the model can reference actual recent posts rather than relying solely on historical training data.

Practical applications include:

- Social listening dashboards: Track brand mentions, competitor activity, and customer sentiment in real-time with AI-powered analysis

- Trend forecasting: Identify emerging topics before they reach mainstream coverage by analyzing early-stage discussions

- Crisis monitoring: Detect and assess developing situations through rapid analysis of relevant conversation clusters

- Content timing optimization: Understand what topics are gaining traction to inform publishing schedules

- Competitive intelligence: Monitor industry discussions, product launches, and market reactions as they unfold

Implementation example:

A media organization uses Grok 4 to power a newsroom assistant that monitors X for breaking stories in specific beats. When unusual activity spikes around keywords like “FDA approval” or “merger announcement,” the system alerts reporters with summarized context and relevant source threads—cutting research time from hours to minutes.
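The spike-alert step in this example could be approximated with a rolling baseline per keyword. The following is a minimal sketch, assuming per-minute mention counts are already being collected from whatever X monitoring source the newsroom uses; the `SpikeDetector` class, its window size, and its 3x threshold are illustrative choices, not part of any xAI or X API.

```python
from collections import deque
from statistics import mean


class SpikeDetector:
    """Flags a keyword when its latest count far exceeds its recent average.

    Assumption: counts arrive as one integer per time bucket (e.g. per minute)
    from a hypothetical X monitoring feed; defaults are illustrative.
    """

    def __init__(self, window: int = 30, ratio: float = 3.0, min_count: int = 20):
        self.window = window
        self.ratio = ratio
        self.min_count = min_count  # ignore "spikes" on tiny absolute volumes
        self.history: dict[str, deque] = {}

    def observe(self, keyword: str, count: int) -> bool:
        """Record a new count and return True if it looks like a spike."""
        past = self.history.setdefault(keyword, deque(maxlen=self.window))
        is_spike = (
            len(past) >= 5  # require a short baseline before alerting
            and count >= self.min_count
            and count > self.ratio * max(mean(past), 1.0)
        )
        past.append(count)
        return is_spike


detector = SpikeDetector()
for c in [4, 5, 6, 5, 4, 5]:
    detector.observe("FDA approval", c)
print(detector.observe("FDA approval", 40))
```

Tuning `min_count` guards against false alarms on low-volume keywords, where a jump from 2 to 7 mentions would otherwise clear the ratio test.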

Decision criteria:

Choose Grok 4 for real-time applications if your use case benefits from current awareness and you can leverage X as a primary data source. If your application requires broader social media coverage or doesn’t need minute-by-minute updates, models with different data integrations may fit better.

Edge case to consider:

Real-time data access means the model may surface unverified claims or early speculation. Build verification steps into workflows that require factual accuracy—treat initial Grok 4 analysis as rapid intelligence gathering, not final fact-checking.

For teams building real-time AI applications, Grok 4’s X integration offers unique advantages unavailable in models trained on static datasets.

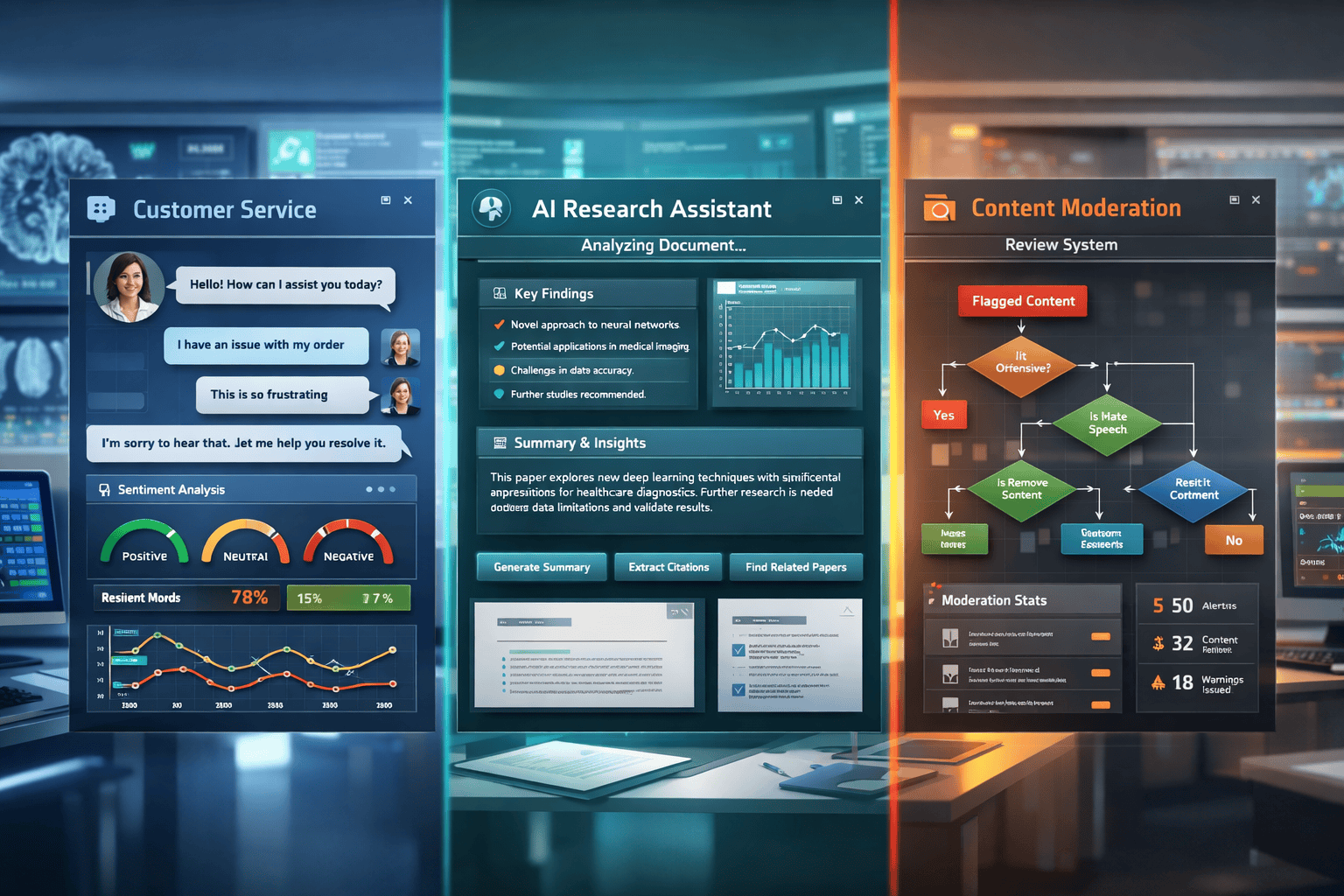

What Are the Most Effective Real-World Applications for xAI’s Grok 4 Uncensored in 2026?

Organizations successfully deploy Grok 4 across use cases where uncensored reasoning, real-time awareness, or extensive context handling provide clear advantages over alternative models. The most effective applications match the model’s strengths to specific business problems.

Research and Analysis

Academic researchers, policy analysts, and think tanks use Grok 4 to explore controversial topics without encountering constant refusal responses. The model processes multiple viewpoints on contested issues, helping researchers understand the full spectrum of arguments.

Specific use cases:

- Political science analysis of polarizing policy debates

- Sociological research on online discourse patterns

- Media studies examining content moderation approaches

- Ethical research exploring AI alignment challenges

Journalism and Content Creation

News organizations and content teams leverage Grok 4’s real-time X access and uncensored reasoning for story development, fact-checking assistance, and audience analysis.

Specific use cases:

- Breaking news monitoring and initial research

- Op-ed drafting on controversial topics requiring balanced perspective presentation

- Audience sentiment analysis for story angle refinement

- Source discovery through X conversation mapping

Customer Intelligence

Brands deploy Grok 4 for social listening applications that require understanding nuanced customer sentiment—including negative feedback, competitive comparisons, and unfiltered opinions.

Specific use cases:

- Crisis detection through sentiment shift monitoring

- Product feedback analysis without sanitized filtering

- Competitive positioning assessment based on real customer discussions

- Influencer identification and relationship mapping

Legal and Compliance Research

Law firms and compliance teams use Grok 4’s extensive context window and uncensored reasoning for document analysis and regulatory research.

Specific use cases:

- Multi-document contract review with 2-million token context capacity

- Regulatory impact analysis across lengthy policy documents

- Case law research requiring processing of extensive legal precedents

- Due diligence document processing for M&A transactions

Selection framework:

| Use Case Type | Choose Grok 4 If… | Consider Alternatives If… |
|---|---|---|
| Research | Topic is controversial or requires diverse perspectives | Need strictly neutral academic tone |
| Content Creation | Real-time awareness matters or topic is sensitive | Consumer-facing with strict brand safety |
| Customer Intelligence | Need unfiltered sentiment from X platform | Require multi-platform social coverage |
| Document Processing | Documents exceed 500K tokens or need real-time context | Standard contracts under 100K tokens |

Common deployment mistake:

Teams sometimes deploy Grok 4 for general-purpose tasks where its unique capabilities don’t add value. If your application doesn’t specifically benefit from uncensored reasoning, real-time X data, or massive context windows, simpler models may deliver better cost-performance ratios.

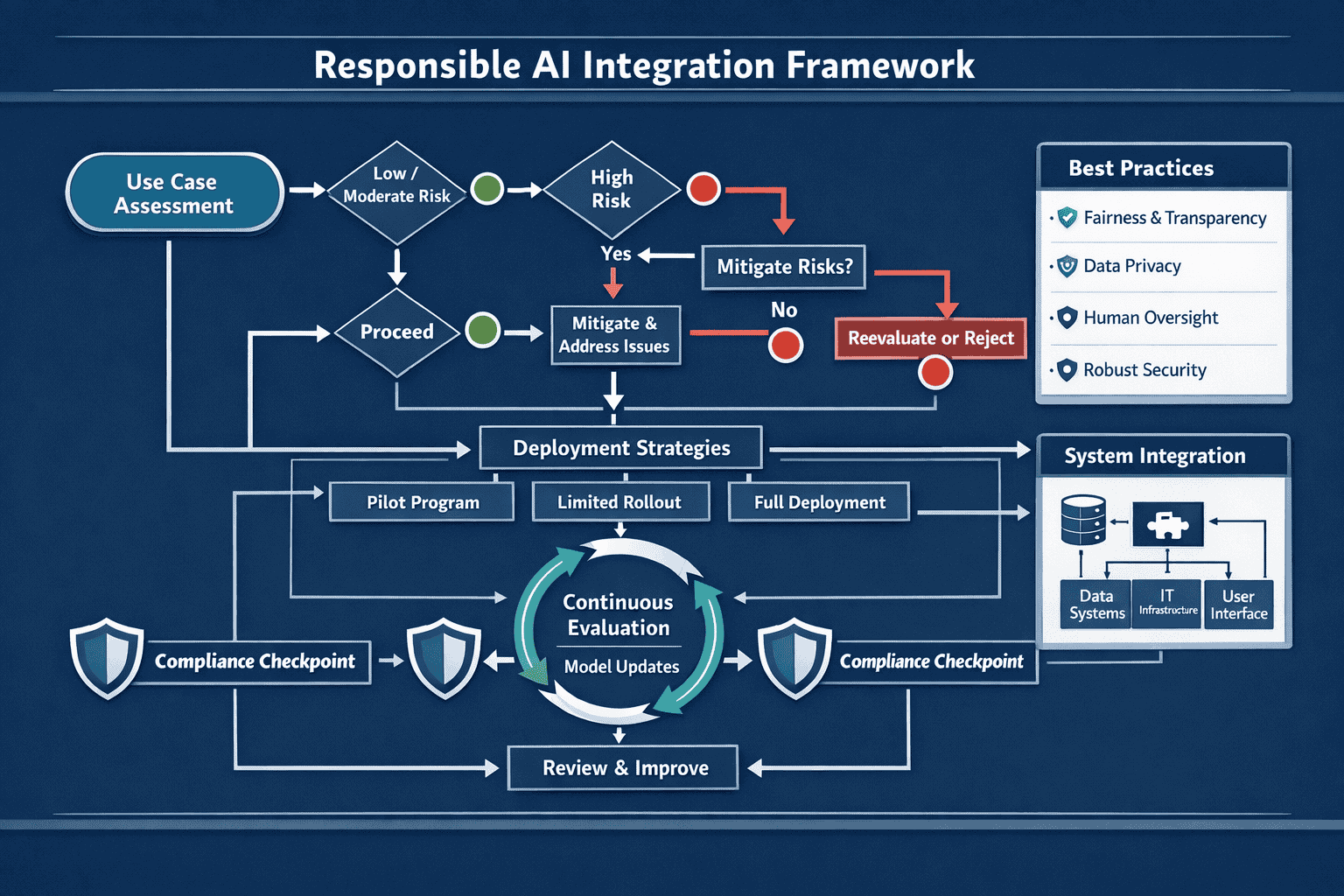

How Should Organizations Approach Ethical Deployment of Grok 4?

Responsible deployment of xAI’s Grok 4 Uncensored requires establishing clear governance frameworks that balance the model’s capabilities with appropriate controls based on specific use cases and risk profiles. The uncensored nature demands more thoughtful application-layer safeguards than heavily filtered alternatives.

Core ethical deployment principles:

1. Use-Case Risk Assessment

Before deployment, categorize your application by risk level based on potential harm from unfiltered outputs. High-risk applications (consumer-facing, regulated industries, vulnerable populations) require stricter controls than internal research tools.

Risk levels:

- Low risk: Internal research, analysis tools for trained professionals, academic applications

- Medium risk: Content creation requiring editorial review, B2B customer intelligence, professional services

- High risk: Consumer-facing chatbots, healthcare advice, financial recommendations, content for minors
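One way to make these tiers operational is to encode them as a check that runs before a use case is approved. A sketch under stated assumptions: the `UseCase` attributes, the mapping rules, and the control names in `REQUIRED_CONTROLS` are hypothetical and reflect one reading of the tiers above, not a standard.

```python
from dataclasses import dataclass
from enum import Enum


class Risk(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass
class UseCase:
    name: str
    consumer_facing: bool = False
    regulated_domain: bool = False     # e.g. healthcare or financial advice
    may_reach_minors: bool = False
    shared_outside_org: bool = False   # outputs reach clients or the public after review


def assess_risk(uc: UseCase) -> Risk:
    """Map a use case onto the low/medium/high tiers."""
    if uc.consumer_facing or uc.regulated_domain or uc.may_reach_minors:
        return Risk.HIGH
    if uc.shared_outside_org:
        return Risk.MEDIUM
    return Risk.LOW  # internal tools for trained professionals


# Hypothetical minimum controls per tier.
REQUIRED_CONTROLS = {
    Risk.LOW: {"audit_logging"},
    Risk.MEDIUM: {"audit_logging", "output_review", "editorial_signoff"},
    Risk.HIGH: {"audit_logging", "input_filtering", "output_review", "human_approval"},
}

chatbot = UseCase("support chatbot", consumer_facing=True)
print(assess_risk(chatbot), sorted(REQUIRED_CONTROLS[assess_risk(chatbot)]))
```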

2. Layered Control Architecture

Don’t rely solely on the model’s training. Build multiple control layers appropriate to your risk level:

- Input filtering: Screen user queries for prohibited use cases before they reach the model

- Output review: Implement automated scanning for harmful content patterns in responses

- Human oversight: Require expert review for high-stakes applications before publishing or acting on outputs

- Audit logging: Track all interactions for compliance review and continuous improvement
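These four layers can be wrapped around a single model call. A minimal sketch follows; `call_model` is a hypothetical stand-in for whichever Grok 4 client the team uses, and the regex patterns are placeholders rather than a recommended policy (production systems would more likely use a maintained moderation classifier).

```python
import json
import re
import time
from typing import Callable

# Placeholder patterns only; real deployments would maintain policy rules
# or a moderation classifier tuned to their use case.
PROHIBITED_INPUT = [re.compile(r"\bwrite (malware|ransomware)\b", re.IGNORECASE)]
FLAGGED_OUTPUT = [re.compile(r"\b(social security number|ssn)\b", re.IGNORECASE)]


def guarded_call(
    prompt: str,
    call_model: Callable[[str], str],  # hypothetical wrapper around a Grok 4 client
    audit_log: list[str],
    needs_human_review: bool,
) -> dict:
    """Run one model call through input, output, review, and audit layers."""
    record = {"ts": time.time(), "prompt": prompt}

    # Layer 1: input filtering before the query reaches the model.
    if any(p.search(prompt) for p in PROHIBITED_INPUT):
        record["status"] = "blocked_input"
        audit_log.append(json.dumps(record))  # blocked queries are logged too
        return {"status": "blocked_input", "text": None}

    text = call_model(prompt)
    record["response"] = text

    # Layer 2: automated scan of the response for flagged patterns.
    flagged = any(p.search(text) for p in FLAGGED_OUTPUT)

    # Layer 3: hold flagged or high-stakes outputs for human review.
    status = "pending_review" if flagged or needs_human_review else "released"
    record["status"] = status

    # Layer 4: audit logging of every interaction.
    audit_log.append(json.dumps(record))
    return {"status": status, "text": text}
```

Here `needs_human_review` would typically come from the use case's risk tier, so low-risk internal tools release outputs directly while high-risk ones always queue for a reviewer.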

3. Transparency and Disclosure

Users interacting with Grok 4-powered applications deserve to understand they’re engaging with an AI system and what that means for response characteristics.

Best practices:

- Clearly label AI-generated content in all user-facing applications

- Explain the model’s capabilities and limitations in documentation

- Disclose when real-time X data informs responses

- Provide feedback mechanisms for users to report problematic outputs

4. Continuous Monitoring and Improvement

Ethical deployment isn’t a one-time setup—it requires ongoing assessment and refinement as you learn how the model performs in production.

Monitoring framework:

- Track output quality metrics and user feedback patterns

- Review flagged interactions weekly with cross-functional teams

- Update filtering rules based on observed edge cases

- Conduct quarterly ethical audits of deployment practices
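The weekly review can start from a simple aggregation over the audit log. This sketch assumes each log entry is a dict with a `timestamp` (a `datetime`) and a `status` string; the status values treated as flagged are hypothetical and should match whatever your logging layer records.

```python
from collections import defaultdict
from datetime import datetime

# Hypothetical status values that count as "flagged" for review purposes.
FLAGGED_STATUSES = {"pending_review", "blocked_input", "user_reported"}


def weekly_flag_rates(entries: list[dict]) -> dict[str, float]:
    """Return {'YYYY-Www': share of flagged interactions} per ISO week."""
    totals: dict[str, int] = defaultdict(int)
    flagged: dict[str, int] = defaultdict(int)
    for e in entries:
        year, week, _ = e["timestamp"].isocalendar()
        key = f"{year}-W{week:02d}"
        totals[key] += 1
        if e["status"] in FLAGGED_STATUSES:
            flagged[key] += 1
    return {k: flagged[k] / totals[k] for k in sorted(totals)}


sample = [
    {"timestamp": datetime(2026, 3, 2), "status": "released"},
    {"timestamp": datetime(2026, 3, 3), "status": "pending_review"},
]
print(weekly_flag_rates(sample))
```

A rising weekly share is a prompt to revisit filtering rules, not proof of a problem on its own.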

5. Team Training and Guidelines

Ensure everyone working with Grok 4 understands both its capabilities and the organization’s ethical standards for use.

Training components:

- Model capability overview and appropriate use cases

- Organization-specific ethical guidelines and boundaries

- Escalation procedures for edge cases or concerning outputs

- Regular refreshers as deployment evolves

Implementation checklist:

✅ Document acceptable use cases and explicit prohibitions

✅ Establish review processes appropriate to risk level

✅ Configure technical controls (input/output filtering)

✅ Set up audit logging and monitoring dashboards

✅ Train all users on ethical guidelines

✅ Create escalation paths for edge cases

✅ Schedule regular ethical audits

✅ Maintain incident response procedures

Common ethical pitfall:

Organizations sometimes assume that because Grok 4 is “uncensored,” they can deploy it without controls. This misunderstands the model—uncensored training doesn’t eliminate the need for application-appropriate safeguards. The model provides capabilities; your deployment architecture determines responsible use.

Comparing how other organizations govern enterprise AI deployments across different models can also help inform these decisions.

What Deployment Strategies Work Best for Agentic Workflows?

Grok 4’s combination of massive context windows, real-time data access, and uncensored reasoning makes it particularly effective for complex agentic workflows where AI systems need to make decisions, take actions, and adapt based on evolving information.

Agentic workflows involve AI systems that can plan multi-step processes, use tools, query information sources, and adjust strategies based on results—going beyond simple question-answering to autonomous task completion.

Key deployment patterns for agentic applications:

Pattern 1: Research Agent with Real-Time Context

Deploy Grok 4 as a research agent that combines document analysis with current X discussions to provide comprehensive topic briefs.

Workflow steps:

- Agent receives research query on specific topic

- Searches and processes relevant documents using long context window

- Queries X for current discussions and recent developments

- Synthesizes historical knowledge with real-time insights

- Produces comprehensive brief with sources and current context

Best for: Competitive intelligence, policy research, market analysis
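The workflow steps map onto a small orchestration function. This is a sketch of control flow only: `search_documents`, `search_x`, and `call_model` are hypothetical callables supplied by the caller, and no specific xAI or X API is assumed.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Brief:
    query: str
    summary: str
    sources: list[str] = field(default_factory=list)
    used_realtime: bool = False


def research_agent(
    query: str,                                     # Step 1: incoming research query
    search_documents: Callable[[str], list[dict]],  # hypothetical: [{"id", "text"}]
    search_x: Callable[[str], list[dict]],          # hypothetical: [{"url", "text"}]
    call_model: Callable[[str], str],               # hypothetical Grok 4 wrapper
) -> Brief:
    # Step 2: gather background documents for the long-context prompt.
    docs = search_documents(query)
    # Step 3: pull recent X discussion; tolerate a failed lookup.
    try:
        posts = search_x(query)
    except Exception:
        posts = []
    # Step 4: synthesize, keeping historical and real-time material separate.
    prompt = "\n\n".join(
        [f"Research question: {query}", "## Background documents"]
        + [d["text"] for d in docs]
        + ["## Recent X posts (unverified)"]
        + [p["text"] for p in posts]
        + ["Write a brief that cites sources and marks unverified claims."]
    )
    summary = call_model(prompt)
    # Step 5: return the brief together with its source list.
    sources = [d["id"] for d in docs] + [p["url"] for p in posts]
    return Brief(query, summary, sources, used_realtime=bool(posts))
```

Keeping real-time posts in a separately labeled section of the prompt makes it easier for the model, and for reviewers, to distinguish background material from unverified social signals.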

Pattern 2: Content Pipeline Orchestrator

Use Grok 4 to manage multi-stage content creation workflows that adapt based on audience response and trending topics.

Workflow steps:

- Monitor X trends to identify emerging topics in target domain

- Generate content briefs based on trend analysis and brand guidelines

- Draft initial content incorporating current discussions

- Route to human editors with context about why topic is trending

- Monitor published content performance and adjust future recommendations

Best for: Media organizations, content marketing teams, thought leadership programs

Pattern 3: Customer Intelligence Coordinator

Deploy Grok 4 as a coordinator that routes customer feedback to appropriate teams based on sentiment, urgency, and context.

Workflow steps:

- Continuously monitor brand mentions and customer discussions on X

- Analyze sentiment and categorize issues by type and severity

- Enrich feedback with historical context from previous interactions

- Route to appropriate teams with recommended response strategies

- Track resolution and feed learnings back into future analysis

Best for: Customer success teams, brand management, crisis response
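The routing step can be expressed as plain rules over labels the model has already produced (for example via a structured-output prompt). In this sketch the `Feedback` fields, the score ranges, the thresholds, and the team names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Feedback:
    text: str
    sentiment: float  # -1.0 (very negative) to 1.0, as scored by the model
    category: str     # e.g. "billing", "bug", "praise"
    severity: int     # 1 (minor) to 5 (critical)


# Hypothetical team queues; adjust to the organization.
CATEGORY_TEAMS = {"billing": "finance-support", "bug": "product-eng"}


def route(fb: Feedback) -> str:
    """Pick a destination queue from sentiment, urgency, and category."""
    if fb.severity >= 4 and fb.sentiment <= -0.5:
        return "crisis-response"  # severe and strongly negative: escalate first
    if fb.category == "praise" and fb.sentiment >= 0.5:
        return "brand-advocacy"
    return CATEGORY_TEAMS.get(fb.category, "customer-success")
```

Checking the crisis rule first means a severe billing complaint goes to crisis response rather than the billing queue.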

Pattern 4: Document Processing Pipeline

Leverage the 2-million token context window for complex document workflows requiring cross-referencing and synthesis.

Workflow steps:

- Ingest multiple related documents (contracts, regulations, reports)

- Extract key terms, obligations, and relationships across documents

- Identify conflicts, gaps, or compliance issues

- Generate summary reports with specific citations

- Flag items requiring human expert review

Best for: Legal teams, compliance departments, due diligence processes

Technical implementation considerations:

- State management: Track conversation history and context across multi-step workflows

- Tool integration: Connect Grok 4 to databases, APIs, and internal systems through function calling

- Error handling: Build fallback strategies when real-time data is unavailable or responses need refinement

- Cost optimization: Cache common queries to manage token costs, and use streaming responses to improve perceived latency

- Performance monitoring: Track completion rates, accuracy metrics, and user satisfaction
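For the caching point, a small in-process cache keyed on model and prompt avoids paying twice for identical requests. A sketch, assuming a hypothetical `call_model(model, prompt)` wrapper; the 10-minute default TTL is illustrative, and prompts that depend on real-time X data would usually warrant a much shorter TTL.

```python
import hashlib
import time
from typing import Callable


class ResponseCache:
    """In-memory TTL cache for model responses keyed by (model, prompt)."""

    def __init__(self, ttl_seconds: float = 600.0, clock: Callable[[], float] = time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock  # injectable for testing
        self._store: dict[str, tuple[float, str]] = {}
        self.hits = 0

    def _key(self, model: str, prompt: str) -> str:
        return hashlib.sha256(f"{model}\x00{prompt}".encode()).hexdigest()

    def get_or_call(
        self, model: str, prompt: str, call_model: Callable[[str, str], str]
    ) -> str:
        key = self._key(model, prompt)
        entry = self._store.get(key)
        if entry is not None and self.clock() - entry[0] < self.ttl:
            self.hits += 1
            return entry[1]  # fresh cached answer: no new tokens spent
        response = call_model(model, prompt)
        self._store[key] = (self.clock(), response)
        return response
```

The injectable `clock` keeps the cache testable; a shared store such as Redis would replace the dict once several workers serve traffic.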

When to choose agentic deployment:

Select agentic workflows when tasks require multiple steps, decision-making, and adaptation based on intermediate results. If your use case involves straightforward question-answering or single-step generation, simpler deployment patterns may be more efficient.

Edge case:

Agentic systems with Grok 4 can sometimes pursue unexpected reasoning paths due to the uncensored training. Build in checkpoints where the system explains its planned approach before taking actions, allowing human operators to redirect if needed.
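A checkpoint of this kind can be sketched as a plan-then-execute loop: the agent submits its full plan for review, and sensitive steps are confirmed again before they run. The `Step` type, the `ALWAYS_REVIEW` action names, and the `review_plan`/`confirm_step` callbacks (which in practice might be a review UI) are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    action: str     # e.g. "search_x", "draft_summary", "publish"
    rationale: str  # the agent's explanation of why it wants this step


# Hypothetical actions that always need a second, step-level confirmation.
ALWAYS_REVIEW = {"send_email", "publish", "delete_record"}


def run_with_checkpoints(
    plan: list[Step],
    review_plan: Callable[[list[Step]], bool],  # operator approves the whole plan
    confirm_step: Callable[[Step], bool],       # operator confirms a sensitive step
    execute: Callable[[Step], str],
) -> list[str]:
    """Execute an agent's plan only after human checkpoints pass."""
    if not review_plan(plan):
        return ["plan rejected: awaiting operator redirect"]
    results = []
    for step in plan:
        if step.action in ALWAYS_REVIEW and not confirm_step(step):
            results.append(f"stopped before {step.action}: not confirmed")
            break  # halt so the operator can redirect the agent
        results.append(execute(step))
    return results
```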

Teams interested in agentic AI workflows can learn from deployment patterns across different model architectures.

How Does Grok 4 Compare to Other Leading Models for Specific Use Cases?

Choosing the right model for your application means understanding where Grok 4’s unique capabilities provide advantages and where alternative models may fit better. No single model wins across all use cases—the key is matching strengths to requirements.

Grok 4 vs. GPT-4o: Content Moderation and Real-Time Awareness

Choose Grok 4 when:

- You need less restrictive content policies for research or analysis

- Real-time X data provides unique value for your application

- Processing very long documents (approaching 2M tokens)

Choose GPT-4o when:

- Consumer-facing applications require strict content safety

- Multimodal capabilities (vision, audio) are essential

- You need broader general knowledge without real-time requirements

Grok 4 vs. Claude Opus 4.5: Reasoning and Analysis

Choose Grok 4 when:

- Topic requires uncensored exploration of controversial angles

- Real-time social intelligence matters more than pure reasoning depth

- X platform data is your primary information source

Choose Claude Opus 4.5 when:

- Complex reasoning and problem-solving are top priorities

- You need industry-leading performance on analytical benchmarks

- Enterprise safety and compliance are non-negotiable

Grok 4 vs. Open-Source Alternatives (DeepSeek R1, Qwen3)

Choose Grok 4 when:

- Real-time X integration provides competitive advantage

- You value xAI’s infrastructure and support

- Deployment speed matters more than infrastructure control

Choose open-source models when:

- Full control over deployment environment is required

- Cost optimization through self-hosting is priority

- Customization through fine-tuning is essential

For detailed comparisons of open versus closed model economics, consider total cost of ownership beyond just API pricing.

Decision Matrix

| Priority | Best Fit Model | Reasoning |
|---|---|---|
| Real-time social intelligence | Grok 4 | Native X integration |
| Maximum reasoning capability | Claude Opus 4.5 | Benchmark leadership |
| Multimodal processing | GPT-4o | Vision, audio, text |
| Cost optimization at scale | Open-source | Self-hosting options |
| Uncensored research | Grok 4 | Training philosophy |
| Enterprise compliance | Claude Opus 4.5 | Safety track record |
| Long-context documents | Grok 4 | 2M token window |

Platform advantage:

Rather than committing to a single model, platforms like MULTIBLY give teams access to 300+ models including Grok 4, Claude, GPT-4o, and open-source alternatives—allowing you to compare responses side by side and choose the best model for each specific task. This approach eliminates vendor lock-in and lets you optimize for both quality and cost.
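Whether the team uses an aggregator or direct APIs, the decision matrix above can be turned into a simple routing table. In this sketch the model strings are placeholder labels rather than exact API model IDs, and the priority keys and default are hypothetical.

```python
# Placeholder labels mirroring the decision matrix; real API model IDs differ.
ROUTES = {
    "realtime_social": "grok-4",
    "max_reasoning": "claude-opus-4.5",
    "multimodal": "gpt-4o",
    "cost_at_scale": "self-hosted-open-model",
    "uncensored_research": "grok-4",
    "enterprise_compliance": "claude-opus-4.5",
    "long_context": "grok-4",
}


def pick_model(priorities: list[str], default: str = "general-purpose-model") -> str:
    """Return the model for the first priority that has a route.

    Priorities are ordered most important first; unknown keys are skipped.
    """
    for p in priorities:
        if p in ROUTES:
            return ROUTES[p]
    return default
```

Ordering priorities explicitly makes trade-offs visible: a task listed as `["multimodal", "long_context"]` goes to the vision-capable model even though Grok 4 wins on context length.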

Common selection mistake:

Teams sometimes choose models based on marketing claims or benchmark performance rather than actual fit for their specific use case. Run pilot tests with real workflows before committing to deployment at scale.

What Are the Key Challenges and Limitations of Deploying Grok 4?

Understanding Grok 4’s limitations helps teams set realistic expectations and build appropriate mitigations into deployment strategies. No model is perfect for all scenarios—successful deployment means working within constraints.

Challenge 1: Content Moderation Complexity

The uncensored training that makes Grok 4 valuable for research also means outputs may include content inappropriate for some contexts without application-layer filtering.

Mitigation strategies:

- Implement robust output filtering for consumer-facing applications

- Establish clear use-case boundaries during deployment planning

- Train teams to review outputs before publication in sensitive contexts

- Build escalation procedures for edge cases

Challenge 2: Real-Time Data Reliability

While X integration provides current awareness, social media data can include misinformation, speculation, and unverified claims that the model may surface.

Mitigation strategies:

- Treat real-time insights as signals requiring verification, not final facts

- Build fact-checking steps into workflows where accuracy is critical

- Cross-reference X data with authoritative sources for important decisions

- Clearly label AI analysis based on social media as preliminary intelligence

Challenge 3: X Platform Dependency

Applications relying on real-time X data create dependency on that platform’s availability, API access, and data quality.

Mitigation strategies:

- Design fallback modes that function without real-time data

- Monitor X API status and build alerts for outages

- Consider whether your use case truly requires real-time access or if periodic updates suffice

- Evaluate alternative data sources for critical applications
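A fallback mode can be as simple as catching the failure of the real-time lookup and switching to a prompt that tells the model live data is missing. In this sketch `fetch_x_context` and `call_model` are hypothetical wrappers, and returning `mode` lets the interface disclose when an answer was produced without current X data.

```python
from typing import Callable, Optional


def answer_with_fallback(
    question: str,
    fetch_x_context: Callable[[str], str],  # hypothetical; may raise on outage
    call_model: Callable[[str], str],       # hypothetical model wrapper
) -> dict:
    """Answer with live X context when available, else degrade gracefully."""
    context: Optional[str]
    try:
        context = fetch_x_context(question)
    except (TimeoutError, ConnectionError):
        context = None  # outage or timeout: continue without live data

    if context:
        prompt = f"Recent X posts:\n{context}\n\nQuestion: {question}"
        mode = "realtime"
    else:
        prompt = (
            f"Question: {question}\n"
            "Live data is unavailable; answer from background knowledge "
            "and state that recent developments may be missing."
        )
        mode = "degraded"
    return {"mode": mode, "answer": call_model(prompt)}
```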

Challenge 4: Context Window Management

While the 2-million token window enables powerful applications, managing such large contexts requires careful prompt engineering and cost management.

Mitigation strategies:

- Implement smart chunking strategies for very large documents

- Cache common context elements to reduce token usage

- Monitor token consumption and optimize prompts based on actual usage patterns

- Consider whether shorter-context models can handle specific tasks more efficiently
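A minimal chunking strategy packs whole paragraphs into chunks under a token budget, repeating the last paragraph of each chunk at the start of the next so cross-references near boundaries keep some context. The 4-characters-per-token estimate is a rough heuristic, not Grok 4's actual tokenizer; use the provider's token counts for real budgeting.

```python
def approx_tokens(text: str) -> int:
    """Rough token estimate (~4 characters per token for English text)."""
    return max(1, len(text) // 4)


def chunk_paragraphs(text: str, max_tokens: int, overlap_paragraphs: int = 1) -> list[str]:
    """Greedily pack paragraphs into chunks of at most ~max_tokens.

    The last `overlap_paragraphs` paragraphs of a chunk are repeated at the
    start of the next one when they fit. A single paragraph larger than
    max_tokens still becomes its own (oversized) chunk.
    """
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    chunks: list[str] = []
    current: list[str] = []
    for para in paragraphs:
        candidate = current + [para]
        if current and approx_tokens("\n\n".join(candidate)) > max_tokens:
            chunks.append("\n\n".join(current))
            overlap = current[-overlap_paragraphs:] if overlap_paragraphs > 0 else []
            candidate = overlap + [para]
            if approx_tokens("\n\n".join(candidate)) > max_tokens:
                candidate = [para]  # overlap does not fit; start fresh
        current = candidate
    if current:
        chunks.append("\n\n".join(current))
    return chunks
```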

Challenge 5: Ethical Boundary Definition

The lack of heavy-handed content filtering means organizations must define their own ethical boundaries rather than relying on model defaults.

Mitigation strategies:

- Document explicit acceptable use policies before deployment

- Establish cross-functional ethics review boards for edge cases

- Conduct regular audits of actual usage patterns

- Update guidelines based on observed challenges

Challenge 6: Limited Multimodal Capabilities

Unlike GPT-4o or Gemini, Grok 4 focuses primarily on text, limiting applications requiring vision or audio processing.

Mitigation strategies:

- Use specialized models for multimodal tasks and route to Grok 4 for text reasoning

- Consider multi-model architectures that leverage each system’s strengths

- Evaluate whether text-only capabilities meet your core requirements before deployment

Troubleshooting common issues:

Issue: Outputs occasionally include inappropriate content

Solution: Strengthen output filtering rules and review processes for your specific use case

Issue: Real-time data queries return irrelevant or low-quality information

Solution: Refine search parameters and add result filtering based on engagement metrics or source credibility
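Result filtering of this kind can be a short post-processing step before posts reach the prompt. The `Post` fields, the allowlist of trusted authors, and the engagement weighting are hypothetical and do not reflect the X API's schema.

```python
from dataclasses import dataclass


@dataclass
class Post:
    author: str
    text: str
    likes: int
    reposts: int


def filter_posts(
    posts: list[Post],
    trusted_authors: set[str],
    min_engagement: int = 50,
    keep: int = 20,
) -> list[Post]:
    """Keep posts from trusted sources or with meaningful engagement, best first."""

    def engagement(p: Post) -> int:
        return p.likes + 2 * p.reposts  # reposts weighted higher as a spread signal

    kept = [
        p for p in posts
        if p.author in trusted_authors or engagement(p) >= min_engagement
    ]
    # Trusted sources first, then by descending engagement.
    kept.sort(key=lambda p: (p.author not in trusted_authors, -engagement(p)))
    return kept[:keep]
```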

Issue: Token costs exceed budget projections

Solution: Implement prompt compression, caching strategies, and context window optimization

Issue: Users uncertain when to trust model outputs

Solution: Build confidence indicators based on source quality and provide transparency about reasoning process

FAQ

Is Grok 4 suitable for customer-facing chatbots?

Grok 4 can power customer-facing applications, but requires more robust application-layer content filtering than heavily moderated alternatives like Claude or GPT-4. For consumer applications with strict brand safety requirements, consider using more restricted models or implementing comprehensive output review systems before deploying Grok 4.

How much does Grok 4 access cost compared to other premium models?

Pricing varies based on usage patterns and access methods. Organizations can access Grok 4 through xAI’s API or platforms like MULTIBLY that bundle multiple premium models for a single subscription, often reducing total cost when teams need access to several different AI systems.

Can Grok 4 be fine-tuned for specific industry applications?

As of March 2026, xAI has not publicly announced fine-tuning capabilities for Grok 4. Organizations requiring customization should explore prompt engineering, retrieval-augmented generation (RAG), or consider open-source alternatives that support fine-tuning if model customization is essential.

Does Grok 4’s real-time X access work in all languages?

The model’s real-time capabilities reflect X platform content, which varies significantly by language and region. English content dominates X discussions, so applications requiring real-time intelligence in other languages may find limited coverage. For multilingual AI applications, consider models specifically optimized for non-English languages.

How does the 2-million token context window affect response speed?

Larger context windows generally increase processing time and latency. For applications requiring very fast responses, consider whether you actually need the full context window or if shorter contexts would suffice. Optimize prompts to include only essential context rather than maximizing token usage.

What happens if X platform data becomes unavailable?

Grok 4 retains its core language capabilities even without real-time X access—it simply loses current awareness features. Design applications with graceful degradation so they can function (perhaps with reduced capabilities) if real-time data becomes temporarily unavailable.

Is Grok 4 appropriate for healthcare or medical applications?

Healthcare applications face strict regulatory requirements and liability concerns that make uncensored models challenging to deploy. Medical use cases typically require models with extensive safety testing and content moderation. Consult legal and compliance teams before deploying Grok 4 in healthcare contexts.

How does Grok 4 handle bias in X platform data?

Like all models trained on social media data, Grok 4 may reflect biases present in X discussions. Applications requiring balanced perspectives should implement bias detection and mitigation strategies at the application layer, including diverse source checking and perspective balancing in prompts.

Can Grok 4 replace traditional search engines for research?

Grok 4 complements rather than replaces search engines. It excels at synthesizing information and providing analysis but may not surface all relevant sources or provide the breadth of coverage that comprehensive search delivers. Use Grok 4 for analysis and synthesis after gathering sources through traditional search.

What’s the best way to get started testing Grok 4 for my use case?

Start with a focused pilot project that tests Grok 4’s unique capabilities (uncensored reasoning, real-time X data, or long-context processing) against your specific requirements. Platforms like MULTIBLY allow you to compare Grok 4 responses against other leading models side by side, helping you evaluate fit before committing to full deployment.

How frequently is Grok 4 updated with new capabilities?

xAI has not published a fixed update schedule. The model entered public beta in February 2026[3], suggesting active development continues. Monitor xAI announcements for capability updates and new features.

Does using Grok 4 require technical AI expertise?

Basic usage through API interfaces requires moderate technical skills similar to other AI models. Complex deployments involving agentic workflows, custom filtering, or integration with existing systems benefit from dedicated AI engineering resources. Non-technical teams can access Grok 4 through user-friendly platforms that abstract technical complexity.

Conclusion

Successful, ethical deployment of xAI’s Grok 4 Uncensored in 2026 centers on matching the model’s unique strengths (uncensored reasoning, real-time X integration, and massive context windows) to specific use cases where these capabilities provide clear advantages. Organizations succeed with Grok 4 by establishing thoughtful governance frameworks that balance capability with responsibility, implementing application-layer controls appropriate to their risk profile, and choosing deployment strategies that leverage the model’s differentiators.

The most effective Grok 4 deployments share common characteristics: clear use-case definition, layered ethical controls, continuous monitoring, and realistic expectations about both capabilities and limitations. Teams that treat the model as one tool in a broader AI toolkit—using it where it excels and routing other tasks to better-suited alternatives—achieve better outcomes than those seeking a single model for all applications.

Actionable next steps:

- Assess your use cases against Grok 4’s core strengths (uncensored reasoning, real-time social intelligence, long-context processing) to identify high-value applications

- Conduct risk evaluation for each potential application to determine appropriate ethical controls and deployment architecture

- Run focused pilots testing Grok 4 against current solutions or alternative models on real workflows before scaling

- Establish governance frameworks including acceptable use policies, review processes, and monitoring systems before production deployment

- Consider multi-model strategies that leverage Grok 4’s strengths alongside other models optimized for different tasks

- Explore platforms like MULTIBLY that provide access to Grok 4 and 300+ other models for comprehensive comparison and flexible deployment

The future of enterprise AI isn’t about finding one perfect model—it’s about building the expertise to choose the right tool for each specific job. Grok 4 represents a valuable option for teams whose requirements align with its capabilities, particularly those needing transparent reasoning on complex topics or real-time social intelligence. Success comes from deploying it thoughtfully within a broader AI strategy that prioritizes both capability and responsibility.

References

[1] YouTube video – https://www.youtube.com/watch?v=bUVnnqZcKQs

[2] Best Uncensored AI Models March 2026 – https://www.mangomindbd.com/blog/best-uncensored-ai-models-march-2026

[3] Unifuncs shared page (K14xH19q) – https://unifuncs.com/s/K14xH19q