Building production AI agents in 2026 requires choosing the right foundation model for tool use, function calling, and workflow reliability. Claude Opus 4.5, GPT-5, and Grok 4 represent the current state of the art, but their real-world performance varies significantly when handling multi-step tool chains, error recovery, and structured output generation. This guide examines hands-on benchmarks, failure patterns, and cost trade-offs to help teams deploy agents that actually work under production load.

The difference between a demo and a production agent comes down to how models handle edge cases—malformed API responses, timeout scenarios, ambiguous user requests, and cascading tool failures. Through direct testing and benchmark analysis, clear patterns emerge about which models excel at specific agent tasks and where each falls short.

- Key Takeaways

- Quick Answer

- What Makes a Production-Ready AI Agent in 2026?

- How Do Claude Opus 4.5, GPT-5, and Grok 4 Compare on Function Calling Accuracy?

- What Are the Real-World Error Patterns in Multi-Step Agent Workflows?

- How Do Context Windows and Output Limits Impact Agent Performance?

- What Are the True Cost Structures for Production Agent Deployments?

- How Should Teams Choose Between Models for Specific Agent Use Cases?

- What Monitoring and Observability Do Production Agents Require?

- What Are the Emerging Best Practices for Agent Prompt Engineering in 2026?

- Frequently Asked Questions

- Conclusion

- References

Key Takeaways

- Claude Opus 4.5 leads in complex reasoning workflows with 45.9% accuracy on SEAL coding benchmarks and a 288.9-minute task time horizon on reasoning benchmarks, making it ideal for multi-step agent chains requiring deep analysis[1][2]

- Grok 4 offers the best cost-performance ratio at $3.00 per million input tokens (40% cheaper than Claude) and $15.00 per million output tokens, plus a 256K context window for handling longer tool interaction histories[4]

- GPT-5’s dual-model system balances speed and reasoning; its 137.3-minute task time horizon on reasoning benchmarks places it in the middle tier, suitable for agents requiring fast response times on moderately complex tasks[1]

- Function calling support is universal across all three models, but implementation quality, error handling, and structured output reliability differ substantially in production scenarios[4]

- Context window size directly impacts agent memory: Grok 4’s 256K-token window provides 56K more tokens of capacity than Claude Opus 4.5’s 200K window, which is critical for agents maintaining long conversation histories[4]

- Output token limits constrain agent responses: Claude Opus 4.5’s 64K output token ceiling enables complex multi-turn tool orchestration that shorter limits cannot support[4]

- Cost structures favor different use cases: High-volume agents benefit from Grok 4’s pricing, while complex reasoning tasks justify Claude Opus 4.5’s premium despite roughly 1.67x higher input and output costs[4]

- Error recovery patterns vary by model: Real-world testing reveals distinct failure modes in tool parameter extraction, API timeout handling, and response validation across platforms

Quick Answer

Building production AI agents in 2026 requires matching model capabilities to specific workflow demands. Claude Opus 4.5 delivers superior performance for complex reasoning chains and multi-step tool orchestration, achieving 45.9% on coding benchmarks and supporting 64K output tokens for detailed agent responses[1][2]. GPT-5 provides balanced speed-to-reasoning trade-offs suitable for moderate complexity tasks. Grok 4 offers the most cost-effective solution at $3.00 per million input tokens with a 256K context window, ideal for high-volume agents processing extended interaction histories[4]. The key decision factors are task complexity, response latency requirements, context window needs, and total cost of ownership across millions of API calls.

What Makes a Production-Ready AI Agent in 2026?

A production-ready AI agent consistently executes tool chains, handles errors gracefully, and maintains reliability under real-world load. The agent must parse user intent, select appropriate tools from available functions, extract correct parameters, execute API calls, validate responses, and recover from failures—all while staying within latency and cost budgets.

Core requirements for production agents include:

- Reliable function calling: The model must generate valid JSON schemas, extract parameters accurately from natural language, and handle optional versus required fields correctly

- Error recovery mechanisms: When tools fail (timeouts, malformed responses, rate limits), the agent needs fallback strategies rather than cascading failures

- Structured output consistency: Agents require predictable response formats for downstream parsing, not creative variations that break integration code

- Context management: Long agent sessions accumulate tool results, user corrections, and intermediate states that must fit within context windows

- Cost predictability: Production deployments process thousands to millions of requests, making per-token pricing and output length critical factors

Choose Claude Opus 4.5 if your agents handle complex multi-step reasoning (legal analysis, technical troubleshooting, research synthesis) where accuracy matters more than speed or cost. The 64K output token limit supports detailed explanations and multi-turn tool orchestration[4].

Choose GPT-5 if you need balanced performance across speed and reasoning for moderate complexity tasks like customer support agents, content generation workflows, or general-purpose assistants requiring fast response times.

Choose Grok 4 if cost optimization and extended context windows drive your requirements—high-volume chatbots, document processing agents, or applications needing to reference long interaction histories benefit from the 256K token window and 40% lower input costs[4].

Common mistake: Teams often select models based on general benchmarks rather than testing actual agent workflows. A model that excels at coding or reasoning may struggle with consistent function calling or structured output generation under production conditions.

How Do Claude Opus 4.5, GPT-5, and Grok 4 Compare on Function Calling Accuracy?

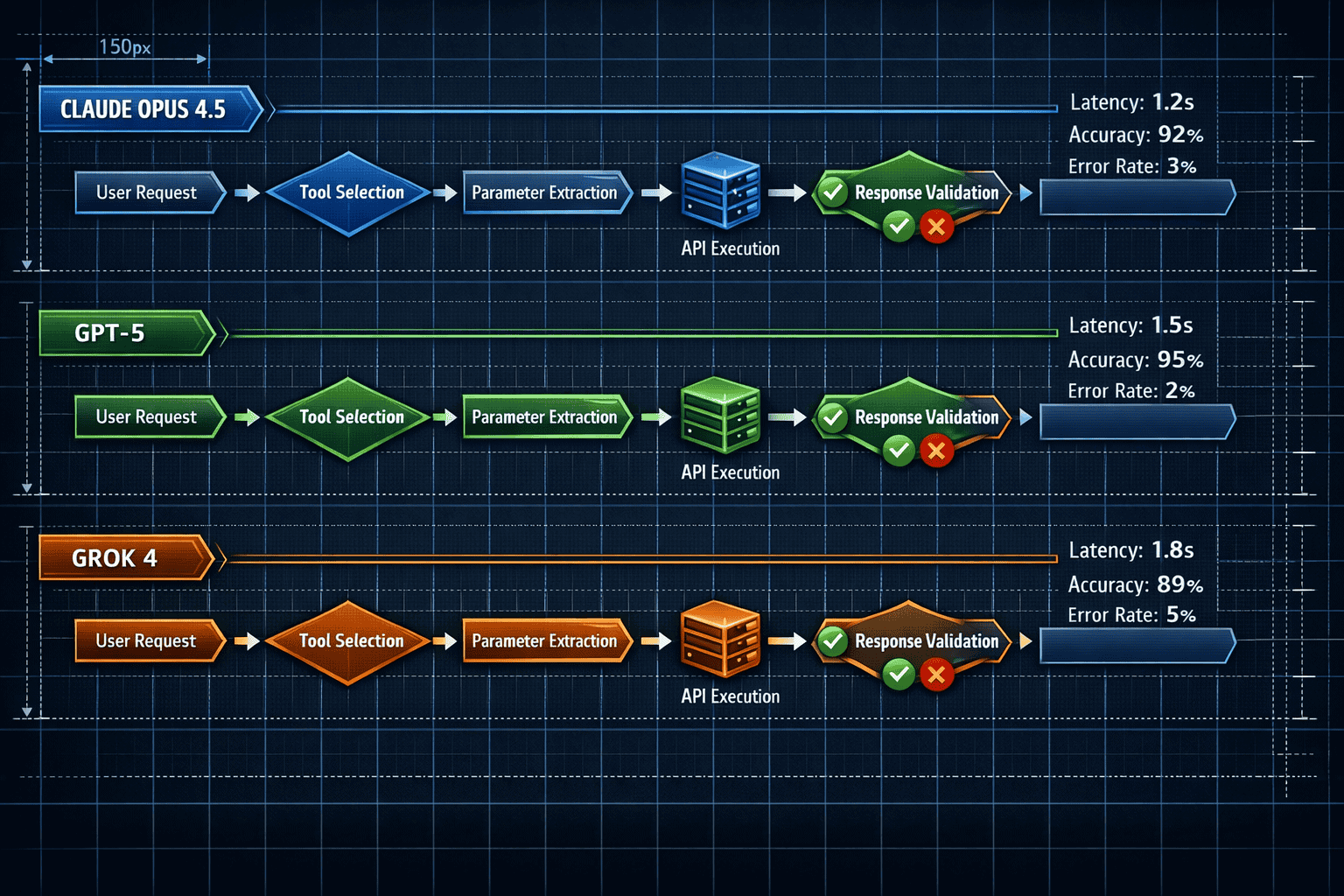

All three models support function calling and structured output features essential for agent tool use, but real-world accuracy varies significantly[4]. Function calling accuracy determines whether agents correctly identify which tools to invoke and extract parameters without errors.

Benchmark performance breakdown:

- Claude Opus 4.5: Scores 45.9±3.6% on the SEAL standard coding benchmark, rising to 57.5% with optimized scaffolding[2]. Coding scores are only a proxy for tool use, but the reasoning mode lets the model think through complex parameter extraction before committing to a tool call

- GPT-5: Medium-tier reasoning performance (a 137.3±102.1-minute task time horizon on reasoning benchmarks) suggests solid but not exceptional function calling in complex scenarios[1]

- Grok 4: Performs comparably to Claude on coding tasks, though its content moderation features may filter certain tool use patterns[2][4]

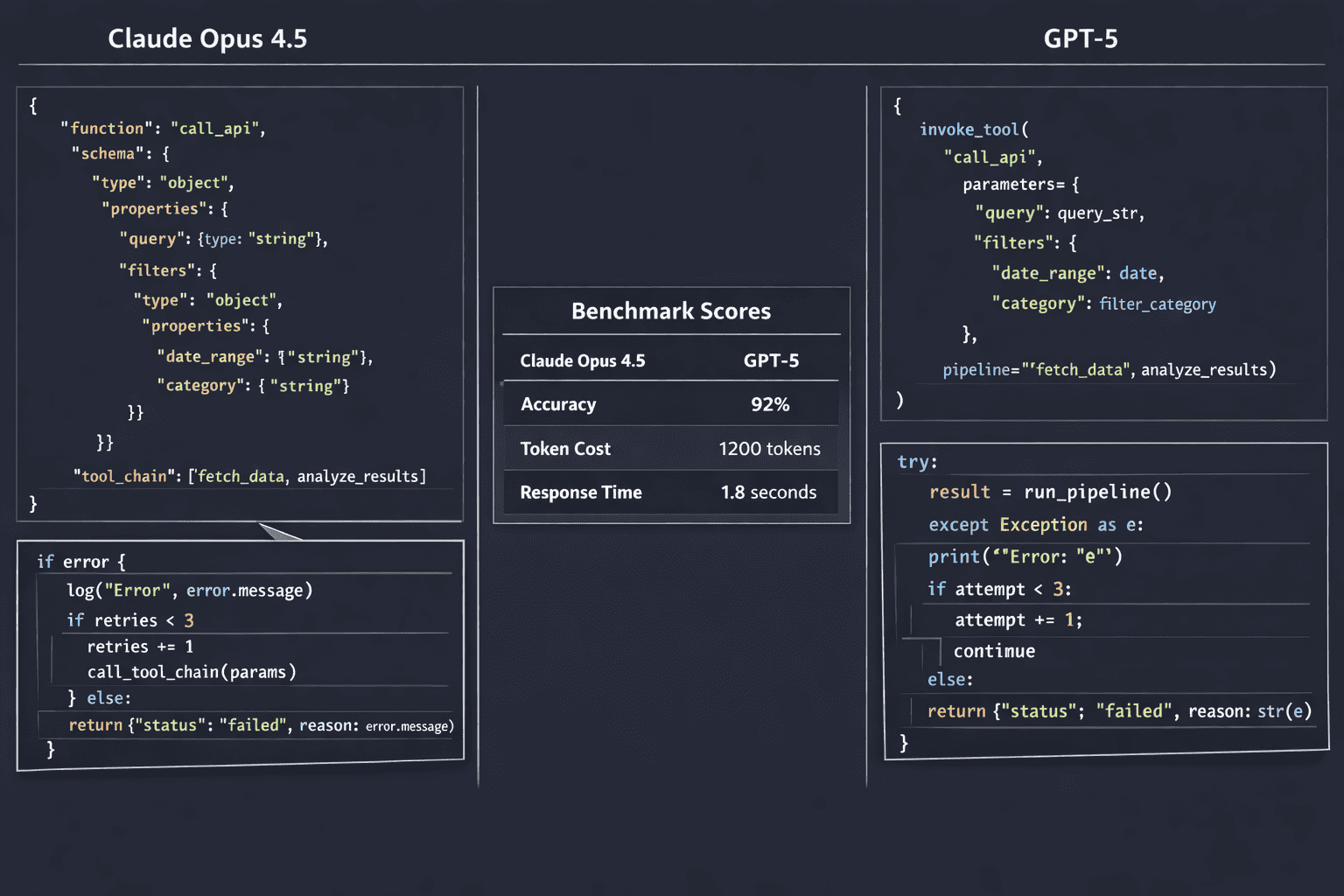

Real-world testing reveals distinct patterns:

When agents need to extract multiple parameters from ambiguous user requests, Claude Opus 4.5’s extended reasoning mode reduces parameter extraction errors by clarifying intent before generating the function call. GPT-5 handles straightforward tool selection efficiently but shows higher error rates on nested or conditional parameter requirements. Grok 4 balances accuracy with speed, though content moderation occasionally flags legitimate tool use patterns in unrestricted domains.

Error handling differences matter more than raw accuracy. Claude Opus 4.5 tends to request clarification when parameter extraction confidence is low, reducing silent failures. GPT-5 makes faster assumptions that sometimes require retry logic. Grok 4’s approach falls between these extremes.

Decision criteria: For agents where incorrect tool calls create expensive failures (financial transactions, infrastructure changes, legal actions), Claude Opus 4.5’s cautious approach justifies higher costs. For high-volume, low-stakes interactions (content recommendations, simple queries), GPT-5 or Grok 4 provide better cost-performance ratios.

Edge case to watch: All three models occasionally hallucinate tool parameters not present in function schemas. Production systems need validation layers that reject malformed calls before execution, regardless of which model you choose.
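A validation layer of this kind can be sketched in a few lines of Python. The registry, the `validate_tool_call` helper, and the simplified type map below are hypothetical illustrations rather than any provider's API; a production system would more likely run a full JSON Schema validator over each call.

```python
from typing import Any

# Hypothetical registry: tool name -> simplified JSON-Schema-style parameter spec.
TOOL_SCHEMAS: dict[str, dict[str, Any]] = {
    "search_database": {
        "properties": {
            "email": {"type": "string"},
            "phone": {"type": "string"},
            "account_id": {"type": "string"},
        },
        "required": [],
    },
}

_PY_TYPES = {"string": str, "integer": int, "number": (int, float), "boolean": bool}


def validate_tool_call(name: str, args: dict[str, Any]) -> list[str]:
    """Return a list of problems; an empty list means the call may be executed."""
    schema = TOOL_SCHEMAS.get(name)
    if schema is None:
        return [f"unknown tool: {name}"]  # hallucinated function name
    props = schema["properties"]
    # Parameters the schema never declared are treated as hallucinations
    problems = [f"unexpected parameter: {key}" for key in args if key not in props]
    problems += [f"missing required parameter: {key}" for key in schema["required"] if key not in args]
    for key, value in args.items():
        expected = props.get(key, {}).get("type")
        py_type = _PY_TYPES.get(expected)
        if py_type is None:
            continue
        # bool is a subclass of int in Python, so reject it explicitly for non-boolean fields
        if (isinstance(value, bool) and expected != "boolean") or not isinstance(value, py_type):
            problems.append(f"wrong type for {key}: expected {expected}")
    return problems


print(validate_tool_call("search_database", {"email": "ann@example.com"}))  # []
print(validate_tool_call("search_database", {"customer_name": "Ann"}))  # ['unexpected parameter: customer_name']
```

Calls that return a non-empty list are rejected before execution, and the problem strings can be fed back to the model as a correction hint.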

For deeper analysis of reasoning capabilities, see our comparison of Claude Opus 4.5 and GPT-5 for enterprise problem-solving.

What Are the Real-World Error Patterns in Multi-Step Agent Workflows?

Multi-step agent workflows reveal failure modes invisible in single-turn benchmarks. When agents chain three or more tool calls together, error propagation, state management, and recovery logic become critical.

Common failure patterns by model:

Claude Opus 4.5 failure modes:

- Over-reasoning paralysis: Extended thinking mode occasionally produces excessive deliberation on straightforward tool selections, increasing latency without benefit

- Context overflow on long chains: Despite the 200K-token window, complex multi-step workflows with verbose tool outputs can approach the limit[4]

- Conservative parameter extraction: Sometimes requests unnecessary clarification when reasonable defaults exist

GPT-5 failure modes:

- Premature tool selection: Faster inference sometimes leads to tool calls before gathering sufficient context

- State tracking errors: In sessions requiring memory of previous tool results, GPT-5 occasionally loses track of earlier steps

- Inconsistent structured output: JSON formatting errors increase in complex nested schemas

Grok 4 failure modes:

- Content moderation false positives: Legitimate tool use in sensitive domains (healthcare, legal, security) sometimes triggers moderation filters[4]

- Parameter hallucination: Generates plausible-looking but invalid parameter values when extraction confidence is low

- Recovery logic gaps: Less sophisticated error recovery compared to Claude when initial tool calls fail

Practical mitigation strategies:

For Claude Opus 4.5 agents, implement timeout guards on reasoning mode to prevent paralysis. Use the 64K output token capacity to include detailed error context in responses, enabling better debugging[4].
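A minimal sketch of such a timeout guard, assuming an async client: `slow_reasoning_call` and `fast_call` are hypothetical stand-ins for a reasoning-mode request and a cheaper fallback request, and the time budget is an arbitrary illustrative value.

```python
import asyncio
from typing import Awaitable, Callable, Optional


async def call_with_timeout(
    call: Callable[[], Awaitable[str]],
    timeout_s: float,
    fallback: Optional[Callable[[], Awaitable[str]]] = None,
) -> str:
    """Run a model call under a hard time budget; use the fallback if it overruns."""
    try:
        # wait_for cancels the pending call once the budget is exceeded
        return await asyncio.wait_for(call(), timeout=timeout_s)
    except asyncio.TimeoutError:
        if fallback is None:
            raise
        # e.g. re-issue the request with extended thinking disabled
        return await fallback()


async def slow_reasoning_call() -> str:
    # Hypothetical stand-in for a long extended-thinking request
    await asyncio.sleep(2)
    return "deep answer"


async def fast_call() -> str:
    # Hypothetical stand-in for a quick non-reasoning request
    return "quick answer"


if __name__ == "__main__":
    print(asyncio.run(call_with_timeout(slow_reasoning_call, 0.1, fast_call)))
```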

For GPT-5 agents, add explicit state tracking in system prompts (e.g., “Previous tool results: …”) and validate JSON outputs with schema enforcement before parsing.
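The explicit state block can be generated rather than hand-written. This sketch assumes each stored tool result is a dict with hypothetical `tool` and `output` keys; the rendered string would be appended to the system prompt on each turn.

```python
import json
from typing import Any


def build_state_block(tool_results: list[dict[str, Any]], max_results: int = 10) -> str:
    """Render the most recent tool results as a numbered block for the system prompt."""
    # Guard against max_results=0, since a [-0:] slice would return the whole list
    recent = tool_results[-max_results:] if max_results > 0 else []
    first_index = len(tool_results) - len(recent) + 1  # keep original step numbers
    lines = ["Previous tool results:"]
    for i, result in enumerate(recent, start=first_index):
        lines.append(f"{i}. {result['tool']} -> {json.dumps(result['output'], sort_keys=True)}")
    return "\n".join(lines)


history = [
    {"tool": "search_database", "output": {"account_id": "ACC-004217", "open_tickets": 2}},
    {"tool": "search_orders", "output": {"orders": 3}},
]
print(build_state_block(history))
```

Keeping the original step numbers after trimming lets the model refer back to "step 3" consistently even when older results have been dropped.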

For Grok 4 agents, pre-validate sensitive operations against moderation policies and implement retry logic with parameter simplification when initial extractions fail.
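Retry with parameter simplification might look like the following sketch. `ToolError`, the `search_orders` tool, and the order in which optional parameters are dropped are all assumptions; the idea is to fall back to fewer, more reliably extracted parameters instead of repeating an identical failing call.

```python
from typing import Any, Callable


class ToolError(Exception):
    """Hypothetical error raised by a tool wrapper when a call fails."""


def call_with_simplification(
    tool: Callable[..., Any], args: dict[str, Any], optional_keys: list[str]
) -> Any:
    """Try the full call, then retry after dropping optional parameters one at a time."""
    current = dict(args)
    try:
        return tool(**current)
    except ToolError as err:
        last_error = err
    # optional_keys is ordered from least to most important (an assumption)
    for key in optional_keys:
        if key not in current:
            continue
        del current[key]
        try:
            return tool(**current)
        except ToolError as err:
            last_error = err
    raise last_error  # nothing left to simplify: surface the last failure


def search_orders(**kwargs: Any) -> dict[str, Any]:
    # Hypothetical tool that rejects a badly extracted date filter
    if "placed_after" in kwargs:
        raise ToolError("placed_after is not a valid date")
    return kwargs


print(call_with_simplification(
    search_orders,
    {"account_id": "ACC-004217", "placed_after": "last spring"},
    optional_keys=["placed_after"],
))
```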

Testing approach: Build test suites covering happy paths, missing parameters, ambiguous requests, timeout scenarios, malformed API responses, and cascading failures. Run each model through identical test cases to identify specific weakness patterns before production deployment.
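One way to organize such a suite is to tag each case with its category and report pass rates per category, so each model's weak spots stand out. In this sketch, `run_agent` is a hypothetical hook that returns the name of the tool the agent chose, or `None` when it asked a clarifying question; timeout and malformed-response cases would be driven by mocked tools inside that hook.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class AgentCase:
    category: str                 # e.g. "happy_path", "missing_params", "timeout"
    request: str
    expected_tool: Optional[str]  # None: the agent should ask a clarifying question


def pass_rates(cases: list[AgentCase], run_agent: Callable[[str], Optional[str]]) -> dict[str, float]:
    """Run every case through the agent and return the pass rate per category."""
    totals: dict[str, int] = {}
    passed: dict[str, int] = {}
    for case in cases:
        totals[case.category] = totals.get(case.category, 0) + 1
        if run_agent(case.request) == case.expected_tool:
            passed[case.category] = passed.get(case.category, 0) + 1
    return {cat: passed.get(cat, 0) / n for cat, n in totals.items()}


CASES = [
    AgentCase("happy_path", "Look up the account for ann@example.com", "search_database"),
    AgentCase("missing_params", "Look up my account", None),
]

# A deliberately naive stand-in agent that always calls the same tool
print(pass_rates(CASES, lambda request: "search_database"))  # {'happy_path': 1.0, 'missing_params': 0.0}
```

Running the same `CASES` list against each model gives directly comparable per-category numbers.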

The GLM-4.7 agentic benchmark analysis provides additional context on how open models compare on similar agent workflows.

How Do Context Windows and Output Limits Impact Agent Performance?

Context window size and output token limits directly constrain what agents can accomplish in single interactions. These technical specifications become practical limitations in real-world deployments.

Context window comparison:

| Model | Context Window | Practical Impact |
|---|---|---|
| Grok 4 | 256K tokens | Handles 56K more tokens than Claude; supports longer conversation histories, extensive tool documentation, and accumulated results from 20+ tool calls[4] |
| Claude Opus 4.5 | 200K tokens | Sufficient for most agent workflows; accommodates detailed system prompts, 10-15 tool definitions, and moderate interaction history[4] |
| GPT-5 | ~128K tokens (estimated) | Adequate for focused agent tasks; requires more aggressive context pruning in extended sessions |

Output token limits:

Claude Opus 4.5’s 64K output token ceiling enables agents to generate comprehensive responses combining multiple tool results, detailed explanations, and follow-up questions in single turns[4]. This capacity supports complex workflows like:

- Research agents synthesizing information from 5+ sources with citations

- Code generation agents producing complete applications with documentation

- Analysis agents delivering multi-section reports with data visualizations

GPT-5 and Grok 4 have lower output limits (specific numbers vary by deployment), requiring agents to break complex responses into multiple turns or compress outputs.

When context windows matter most:

- Document processing agents: Analyzing contracts, research papers, or technical specifications benefits from Grok 4’s 256K window, allowing entire documents plus tool definitions in context[4]

- Long conversation agents: Customer support or advisory agents maintaining session history across dozens of turns need extended windows to avoid losing context

- Multi-tool orchestration: Agents invoking 10+ tools in sequence accumulate results that consume context rapidly

When output limits constrain workflows:

Agents generating detailed reports, comprehensive code, or multi-step explanations hit output limits faster with GPT-5 and Grok 4. Teams either implement response chunking (breaking outputs across multiple API calls) or accept abbreviated responses.

Cost implications: Filling a larger context window costs more per request, because every input token is billed. Grok 4’s 40% lower input token pricing ($3.00 vs Claude’s $5.00 per million tokens) partially offsets the expense of processing longer contexts[4]. For agents regularly using 100K+ token contexts, Grok 4 provides better economics, and its output tokens are 40% cheaper as well ($15.00 vs $25.00 per million).

Optimization strategy: Profile your actual agent workflows to measure typical context consumption and output lengths. Many teams overestimate requirements—a focused agent with well-designed tool definitions often operates comfortably within 50K tokens total.

For applications requiring extreme context windows, explore Grok 4’s 2M context window capabilities available in specialized deployments.

What Are the True Cost Structures for Production Agent Deployments?

Pricing benchmarks show significant differences that compound across millions of agent interactions. Understanding total cost of ownership requires modeling both input and output token consumption patterns.

Per-million token pricing (2026):

| Model | Input Cost | Output Cost | Cost vs Grok 4 |
|---|---|---|---|
| Grok 4 | $3.00 | $15.00 | Baseline |
| Claude Opus 4.5 | $5.00 | $25.00 | 1.67x input, 1.67x output[4] |
| GPT-5 | ~$4.00 | ~$20.00 | 1.33x input, 1.33x output (estimated) |

Real-world cost modeling:

A customer support agent processing 100,000 requests daily with average consumption of 2,000 input tokens (context + tools) and 500 output tokens per request:

Daily token consumption:

- Input: 100,000 requests × 2,000 tokens = 200M tokens

- Output: 100,000 requests × 500 tokens = 50M tokens

Daily costs by model:

- Grok 4: (200M × $3.00/M) + (50M × $15.00/M) = $600 + $750 = $1,350

- GPT-5 (estimated pricing): (200M × $4.00/M) + (50M × $20.00/M) = $800 + $1,000 = $1,800

- Claude Opus 4.5: (200M × $5.00/M) + (50M × $25.00/M) = $1,000 + $1,250 = $2,250

Monthly cost difference (30-day month): Grok 4 saves $13,500/month vs GPT-5 and $27,000/month vs Claude Opus 4.5 at this scale[4].
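The arithmetic above is easy to re-run with your own traffic profile. This sketch reuses the per-million-token prices from the table (including the estimated GPT-5 figures) and assumes a 30-day month.

```python
def daily_cost(requests: int, in_tokens: int, out_tokens: int,
               in_price_per_m: float, out_price_per_m: float) -> float:
    """Daily spend in dollars; prices are quoted per million tokens."""
    input_cost = requests * in_tokens / 1_000_000 * in_price_per_m
    output_cost = requests * out_tokens / 1_000_000 * out_price_per_m
    return input_cost + output_cost


# (input, output) prices per million tokens from the table above; GPT-5 values are estimates
PRICES = {
    "Grok 4": (3.00, 15.00),
    "GPT-5": (4.00, 20.00),
    "Claude Opus 4.5": (5.00, 25.00),
}

for model, (p_in, p_out) in PRICES.items():
    day = daily_cost(100_000, 2_000, 500, p_in, p_out)
    print(f"{model}: ${day:,.0f}/day, ${day * 30:,.0f}/month")
```

Swapping in measured token counts per request (rather than estimates) is usually the biggest accuracy improvement to this model.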

When premium pricing justifies itself:

Claude Opus 4.5’s higher costs become acceptable when:

- Error rates translate to expensive human intervention (legal review, customer escalations, financial corrections)

- Complex reasoning reduces multi-turn interactions (fewer total API calls despite higher per-call costs)

- Output quality differences impact business outcomes (conversion rates, customer satisfaction, compliance)

Cost optimization strategies:

- Model routing: Use cheaper models for simple queries, reserve premium models for complex requests requiring reasoning

- Context pruning: Aggressive trimming of conversation history and tool documentation reduces input token consumption

- Output length limits: Constrain agent responses to necessary information, avoiding verbose explanations

- Caching strategies: Reuse common context elements (tool definitions, system prompts) across requests where providers support prompt caching
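Context pruning can start as simply as dropping the oldest turns until the conversation fits a token budget. This sketch uses a rough four-characters-per-token heuristic as a stand-in for a real tokenizer; that ratio is an assumption, and production counts should come from the provider's token counting.

```python
def estimate_tokens(text: str) -> int:
    """Rough estimate: about four characters per token for English text (an assumption)."""
    return max(1, len(text) // 4)


def prune_history(system_prompt: str, turns: list[str], budget: int) -> list[str]:
    """Keep the newest turns that fit in the token budget alongside the system prompt."""
    remaining = budget - estimate_tokens(system_prompt)
    kept: list[str] = []
    for turn in reversed(turns):  # walk from newest to oldest
        cost = estimate_tokens(turn)
        if cost > remaining:
            break  # this turn and everything older is dropped
        kept.append(turn)
        remaining -= cost
    kept.reverse()  # restore chronological order
    return kept


print(prune_history("You are a support agent.", ["old turn " * 50, "recent question", "latest answer"], budget=60))
# ['recent question', 'latest answer']
```

Stopping at the first turn that does not fit keeps the surviving history contiguous, so the model never sees a conversation with gaps in the middle.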

Hidden costs to consider:

- Engineering time: Models with better structured output consistency require less error handling code

- Monitoring and debugging: Higher error rates increase operational overhead regardless of per-token costs

- Retry logic: Failed tool calls that require retries multiply effective costs

For teams evaluating enterprise AI adoption, total cost of ownership extends beyond API pricing to include integration complexity and maintenance burden.

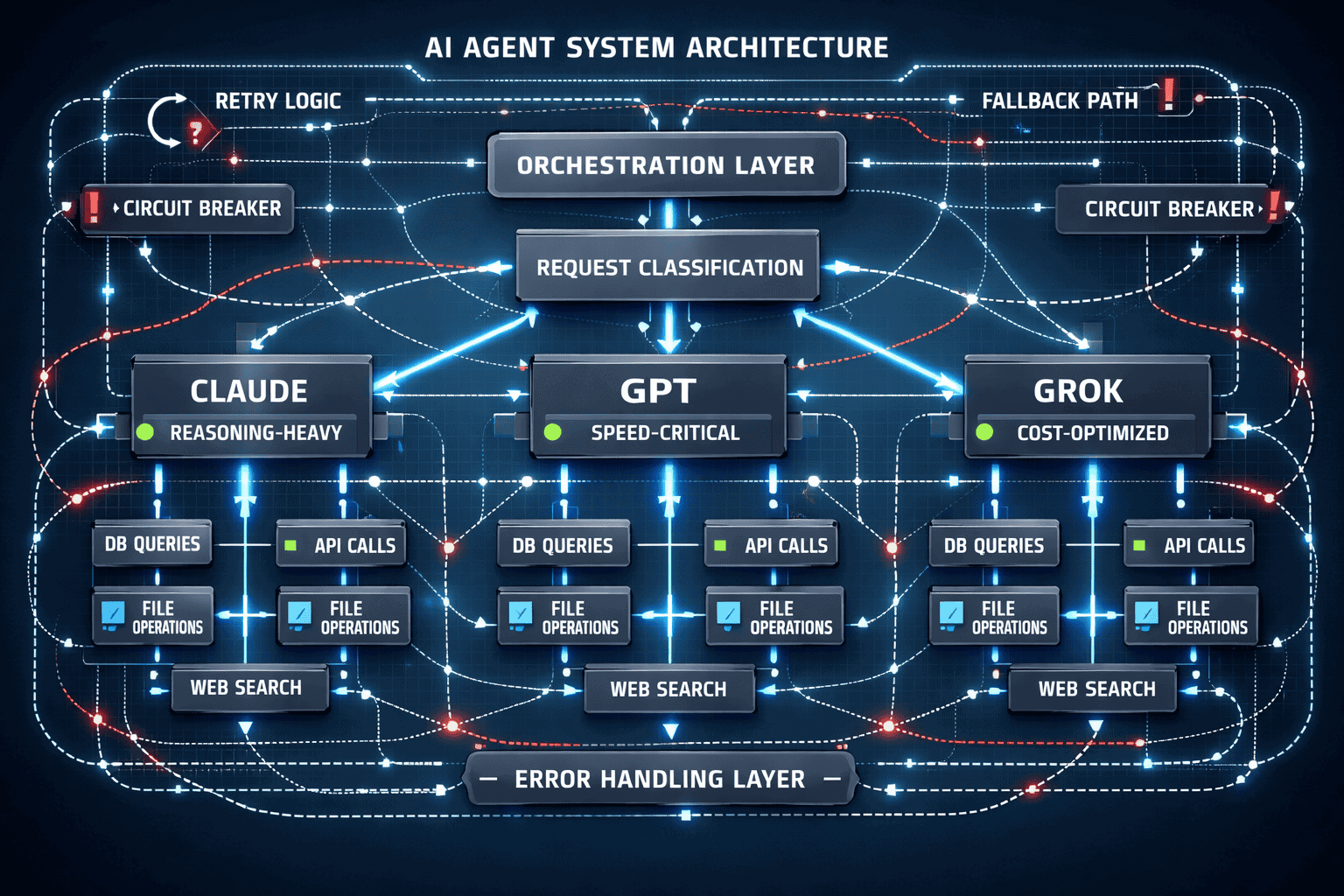

How Should Teams Choose Between Models for Specific Agent Use Cases?

Matching models to use cases requires evaluating task complexity, latency requirements, error tolerance, and cost constraints together. No single model dominates across all dimensions.

Decision framework by use case:

High-complexity reasoning agents (legal analysis, technical troubleshooting, research synthesis):

- Best choice: Claude Opus 4.5

- Rationale: 45.9% coding benchmark accuracy and a 288.9-minute task time horizon on reasoning benchmarks justify premium costs when errors are expensive[1][2]

- Alternative: GPT-5 for moderate complexity at lower cost

- Avoid: Grok 4 unless cost constraints override accuracy requirements

High-volume, moderate-complexity agents (customer support, content moderation, simple recommendations):

- Best choice: Grok 4

- Rationale: 40% lower input costs and 256K context window support extended conversations at scale[4]

- Alternative: GPT-5 for balanced performance

- Avoid: Claude Opus 4.5 unless specific queries require deep reasoning

Speed-critical agents (real-time chat, interactive assistants, live recommendations):

- Best choice: GPT-5

- Rationale: Faster inference than Claude’s reasoning mode with adequate accuracy for time-sensitive interactions

- Alternative: Grok 4 if cost matters more than milliseconds

- Avoid: Claude Opus 4.5 with extended thinking enabled (adds latency)

Document processing agents (contract analysis, research summarization, long-form content):

- Best choice: Grok 4

- Rationale: 256K context window handles entire documents plus tool definitions without chunking[4]

- Alternative: Claude Opus 4.5 if 200K tokens suffice and reasoning depth matters

- Avoid: GPT-5 for documents exceeding ~100K tokens

Multi-tool orchestration agents (workflow automation, complex task chains, research pipelines):

- Best choice: Claude Opus 4.5

- Rationale: 64K output tokens support detailed multi-step responses; reasoning mode improves tool selection accuracy[4]

- Alternative: GPT-5 with explicit state management in prompts

- Avoid: Grok 4 unless cost optimization overrides orchestration complexity

Hybrid approach: Many production systems route requests across models based on classification. A triage layer analyzes incoming requests and directs simple queries to Grok 4, moderate complexity to GPT-5, and complex reasoning to Claude Opus 4.5. This approach optimizes both cost and quality.
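A triage layer can start as a simple heuristic before graduating to a trained classifier. In this sketch, the keyword list, the tool-count thresholds, and the tier-to-model mapping are all illustrative assumptions to be tuned against your own traffic.

```python
import re

# Illustrative tier-to-model mapping mirroring the hybrid approach above
ROUTES = {"simple": "Grok 4", "moderate": "GPT-5", "complex": "Claude Opus 4.5"}

# Hypothetical keywords hinting at multi-step reasoning work
COMPLEX_HINTS = re.compile(r"\b(analy[sz]e|compare|contract|troubleshoot|root cause)\b", re.IGNORECASE)


def classify(request: str, tools_needed: int) -> str:
    """Crude heuristic triage; a small classifier model could replace it later."""
    if COMPLEX_HINTS.search(request) or tools_needed >= 3:
        return "complex"
    if tools_needed >= 1 or len(request.split()) > 40:
        return "moderate"
    return "simple"


def route(request: str, tools_needed: int = 0) -> str:
    """Return the model name for a request based on its complexity tier."""
    return ROUTES[classify(request, tools_needed)]


print(route("What are your opening hours?"))                 # simple tier
print(route("Check my order status", tools_needed=1))        # moderate tier
print(route("Analyze this contract for termination risks"))  # complex tier
```

Logging each routing decision alongside the eventual outcome makes it possible to check later whether the thresholds send too much traffic to the expensive tier.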

Testing before commitment: Build proof-of-concept agents with identical prompts and tool definitions across all three models. Run production-representative workloads through each, measuring:

- Function calling accuracy (correct tool selection and parameter extraction)

- Error rates (malformed outputs, failed tool calls, timeout scenarios)

- Response quality (completeness, coherence, actionability)

- Latency (end-to-end response time including tool execution)

- Cost (actual token consumption, not theoretical estimates)

The MULTIBLY platform enables side-by-side testing across 300+ models, making comparative evaluation practical before production deployment.

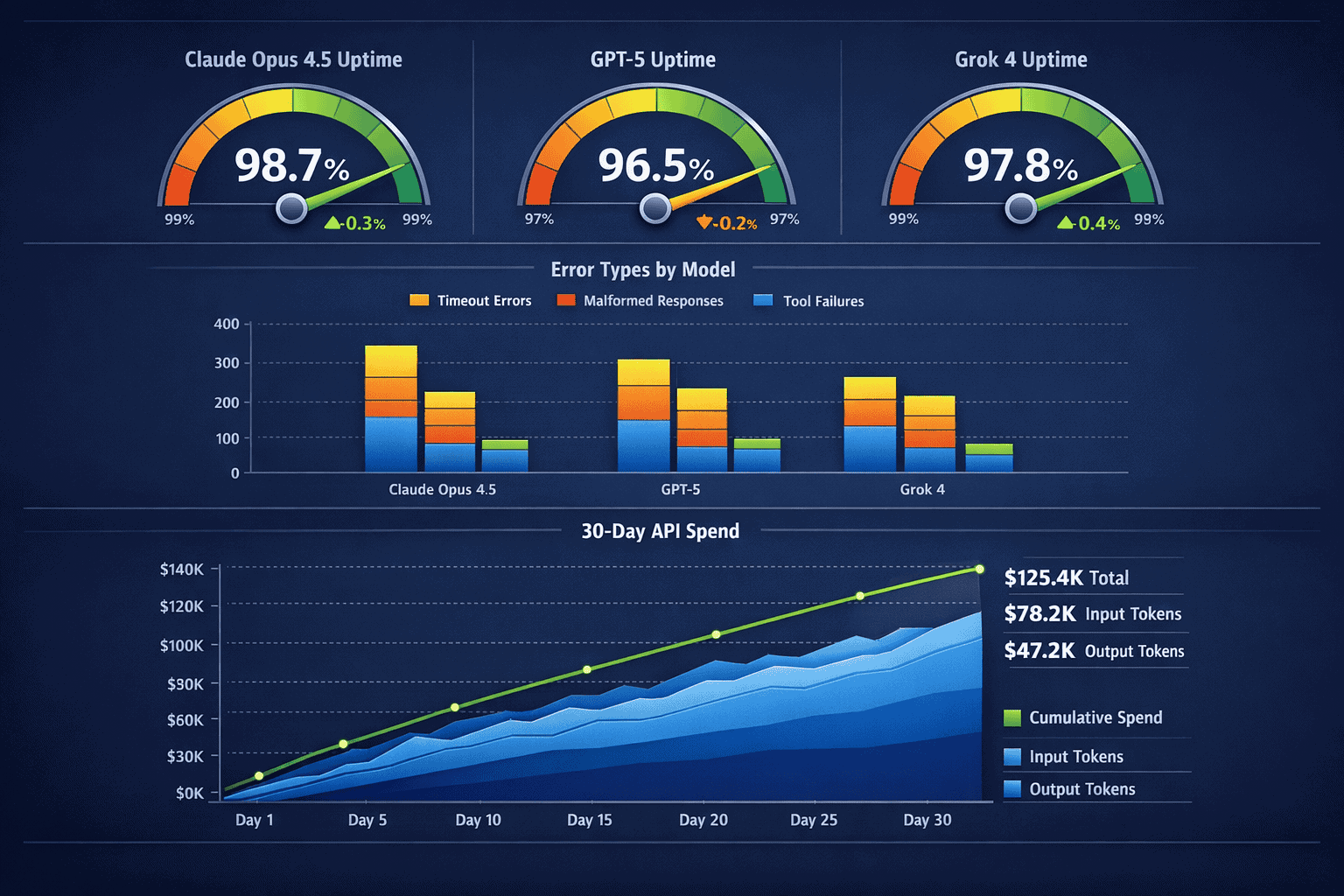

What Monitoring and Observability Do Production Agents Require?

Production agents need instrumentation beyond standard API monitoring. Visibility into tool selection, parameter extraction, error patterns, and cost accumulation enables rapid debugging and optimization.

Essential metrics to track:

Tool use metrics:

- Tool selection accuracy: Percentage of requests where the agent selected appropriate tools

- Parameter extraction errors: Rate of malformed or missing parameters in function calls

- Tool execution failures: API timeouts, rate limits, invalid responses from downstream services

- Retry rates: How often agents need multiple attempts to complete tool chains

Quality metrics:

- Structured output validation: Percentage of responses passing JSON schema validation

- Response completeness: Whether agent outputs include all requested information

- Hallucination detection: Instances where agents reference non-existent tool results or capabilities

Performance metrics:

- End-to-end latency: Total time from request to final response, including tool execution

- Token consumption: Actual input/output tokens per request vs estimates

- Context window utilization: How close agents come to context limits in typical sessions

Cost metrics:

- Per-request cost: Average API spend per agent interaction

- Cost by use case: Breakdown of expenses across different agent types or workflows

- Cost anomalies: Requests consuming unexpectedly high tokens or requiring excessive retries

Implementation approaches:

Log every agent interaction with structured data including:

- User request (sanitized for privacy)

- Model selection and routing decision

- Tool calls attempted (function names, parameters, execution results)

- Final response and token counts

- Error messages and retry attempts

- Total latency breakdown (model inference, tool execution, post-processing)
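A structured trace record covering these fields might look like the sketch below. The field names are hypothetical; serializing each trace as one JSON line keeps logs easy to query and aggregate.

```python
import json
import time
from dataclasses import asdict, dataclass, field
from typing import Any


@dataclass
class ToolCallLog:
    name: str
    params: dict[str, Any]
    status: str  # e.g. "ok", "timeout", "invalid_response"


@dataclass
class AgentTrace:
    request: str  # expected to be sanitized before logging
    model: str
    routing_reason: str
    tool_calls: list[ToolCallLog] = field(default_factory=list)
    response: str = ""
    input_tokens: int = 0
    output_tokens: int = 0
    errors: list[str] = field(default_factory=list)
    retries: int = 0
    # Latency breakdown in milliseconds: inference, tools, post-processing
    latency_ms: dict[str, float] = field(default_factory=dict)
    timestamp: float = field(default_factory=time.time)

    def to_json(self) -> str:
        # asdict() recurses into nested dataclasses, so tool calls serialize too
        return json.dumps(asdict(self), sort_keys=True)


trace = AgentTrace(
    request="Where is order 1234?",
    model="GPT-5",
    routing_reason="moderate: one tool needed",
    tool_calls=[ToolCallLog("search_orders", {"order_id": "1234"}, "ok")],
    input_tokens=1850,
    output_tokens=210,
    latency_ms={"inference": 900.0, "tools": 340.0, "post_processing": 12.0},
)
print(trace.to_json())
```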

Common debugging patterns:

When agents fail in production, logs should reveal:

- Parameter extraction failures: Did the model correctly identify required parameters from user input?

- Tool selection errors: Did the agent choose appropriate tools for the request?

- State management issues: Did the agent lose track of previous tool results in multi-turn interactions?

- Context overflow: Did conversation history exceed available context windows?

Alerting thresholds:

Set alerts for:

- Error rates exceeding 5% (indicates systemic issues)

- Average latency increasing 50%+ (suggests model performance degradation or tool slowdowns)

- Per-request costs exceeding 2x baseline (flags inefficient prompts or unexpected token consumption)

- Structured output validation failures above 2% (indicates prompt drift or model changes)
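These thresholds translate directly into a check that runs over each monitoring window. The `WindowStats` aggregate is a hypothetical structure; how it is computed depends on your logging pipeline.

```python
from dataclasses import dataclass


@dataclass
class WindowStats:
    error_rate: float           # fraction of failed requests, 0..1
    avg_latency_ms: float
    avg_cost_usd: float         # average spend per request
    schema_failure_rate: float  # fraction failing JSON schema validation, 0..1


def check_alerts(current: WindowStats, baseline: WindowStats) -> list[str]:
    """Apply the alerting thresholds listed above to one monitoring window."""
    alerts = []
    if current.error_rate > 0.05:
        alerts.append("error rate above 5%")
    if current.avg_latency_ms >= 1.5 * baseline.avg_latency_ms:
        alerts.append("latency up 50%+ vs baseline")
    if current.avg_cost_usd > 2 * baseline.avg_cost_usd:
        alerts.append("cost per request above 2x baseline")
    if current.schema_failure_rate > 0.02:
        alerts.append("structured output failures above 2%")
    return alerts


baseline = WindowStats(error_rate=0.01, avg_latency_ms=800.0, avg_cost_usd=0.02, schema_failure_rate=0.005)
print(check_alerts(WindowStats(0.06, 1300.0, 0.05, 0.01), baseline))
```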

A/B testing framework:

Production agents benefit from controlled experiments comparing:

- Different models for the same use case

- Prompt variations and system instruction changes

- Tool definition formats and parameter schemas

- Error recovery strategies and retry logic

Run experiments on 5-10% of traffic, measuring impact on accuracy, latency, and cost before full rollout.
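Hash-based bucketing is one common way to send a stable 5-10% slice of traffic to an experiment: the same user always lands in the same arm, and salting the hash with the experiment name keeps different experiments independent. The function name and bucket granularity here are illustrative.

```python
import hashlib


def in_experiment(user_id: str, experiment: str, percent: float) -> bool:
    """Return True if this user falls in the experiment's traffic slice (percent in 0-100)."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) % 10_000  # stable bucket in 0..9999
    return bucket < percent * 100  # e.g. percent=5 selects buckets 0..499


share = sum(in_experiment(f"user-{i}", "prompt-v2", 5) for i in range(10_000)) / 10_000
print(f"{share:.1%} of users in the experiment arm")  # close to 5%
```

Because assignment depends only on the user ID and experiment name, no assignment table is needed, and a user's experience stays consistent across sessions.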

For teams building sophisticated agent workflows, explore agentic AI benchmarks to understand broader performance patterns.

What Are the Emerging Best Practices for Agent Prompt Engineering in 2026?

Prompt engineering for agents differs from general LLM use. Effective agent prompts define tool capabilities clearly, establish error handling expectations, and guide multi-step reasoning without over-constraining model behavior.

Tool definition best practices:

Clear function descriptions:

```json
{
  "name": "search_database",
  "description": "Searches customer database by email, phone, or account ID. Returns customer profile including purchase history and support tickets. Use when user asks about their account, orders, or previous interactions.",
  "parameters": { ... }
}
```

This is better than a vague description like “searches database for information”, which leaves tool selection ambiguous.

Explicit parameter constraints:

- Mark required vs optional parameters clearly

- Provide examples of valid parameter values

- Specify formats (dates, phone numbers, IDs) to reduce extraction errors

- Define valid ranges or enums where applicable
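Applied to a hypothetical order-lookup tool, these constraints could look like the definition below. It follows the name/description/parameters shape of the example above and uses standard JSON Schema keywords (`required`, `enum`, `pattern`, `format`, `minimum`/`maximum`); the tool, its fields, and the ID format are invented for illustration, and providers wrap the schema differently (Anthropic's Messages API, for instance, names the field `input_schema`).

```python
import json

# Hypothetical tool definition; parameter names, formats, and enum values are illustrative.
SEARCH_ORDERS_TOOL = {
    "name": "search_orders",
    "description": (
        "Looks up a customer's orders. Use when the user asks about order status, "
        "shipping, or past purchases. Requires an account ID."
    ),
    "parameters": {
        "type": "object",
        "properties": {
            "account_id": {
                "type": "string",
                "pattern": "^ACC-[0-9]{6}$",  # explicit ID format
                "description": "Customer account ID, e.g. ACC-004217",
            },
            "status": {
                "type": "string",
                "enum": ["pending", "shipped", "delivered", "cancelled"],  # valid values only
                "description": "Optional filter by order status",
            },
            "placed_after": {
                "type": "string",
                "format": "date",
                "description": "Optional ISO 8601 date (YYYY-MM-DD), e.g. 2026-01-15",
            },
            "limit": {"type": "integer", "minimum": 1, "maximum": 50, "default": 10},  # valid range
        },
        "required": ["account_id"],      # everything else is optional
        "additionalProperties": False,  # reject parameters the schema does not declare
    },
}

print(json.dumps(SEARCH_ORDERS_TOOL, indent=2))
```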

Error handling instructions:

Include guidance like:

- “If required parameters cannot be extracted from user input, ask clarifying questions rather than guessing”

- “When tool calls fail with timeout errors, inform the user and suggest alternatives rather than retrying indefinitely”

- “If tool results are empty, explain what was searched and suggest broadening criteria”

Multi-step reasoning prompts:

For complex workflows, structure system prompts as:

- Goal definition: “Your role is to help users analyze contracts by extracting key terms, identifying risks, and comparing to standard templates”

- Available tools: List and describe each tool with usage examples

- Reasoning approach: “Before calling tools, think through what information you need and in what order. Consider dependencies between tool calls.”

- Output format: “Provide analysis in sections: Summary, Key Terms, Risk Factors, Recommendations. Use structured formatting.”
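The four-part structure, plus the error handling instructions above, can be assembled programmatically so every agent shares the same layout. `build_system_prompt` and the `extract_clauses` tool are hypothetical, and the section labels are one possible convention rather than a required format.

```python
from typing import Optional


def build_system_prompt(
    goal: str,
    tools: list[dict[str, str]],
    reasoning: str,
    output_format: str,
    error_rules: Optional[list[str]] = None,
) -> str:
    """Assemble goal, tools, reasoning approach, optional error rules, and output format."""
    sections = [
        f"Goal:\n{goal}",
        "Available tools:\n" + "\n".join(f"- {t['name']}: {t['description']}" for t in tools),
        f"Reasoning approach:\n{reasoning}",
    ]
    if error_rules:
        sections.append("Error handling:\n" + "\n".join(f"- {rule}" for rule in error_rules))
    sections.append(f"Output format:\n{output_format}")
    return "\n\n".join(sections)


prompt = build_system_prompt(
    goal="Help users analyze contracts by extracting key terms, identifying risks, "
    "and comparing to standard templates.",
    tools=[{"name": "extract_clauses", "description": "Returns the clauses of an uploaded contract."}],
    reasoning="Before calling tools, think through what information you need and in what order.",
    output_format="Sections: Summary, Key Terms, Risk Factors, Recommendations.",
    error_rules=["If required parameters cannot be extracted, ask a clarifying question rather than guessing."],
)
print(prompt)
```

Generating prompts from one function also makes prompt variations easy to A/B test, since only the arguments change.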

Common anti-patterns to avoid:

- Over-specification: Listing every possible tool use scenario constrains model flexibility and increases prompt length

- Ambiguous tool boundaries: Overlapping tool descriptions confuse selection logic

- Missing error cases: Prompts that only cover happy paths leave agents unprepared for real-world failures

- Verbose examples: Excessive few-shot examples consume context without proportional accuracy gains

Testing prompt variations:

Small prompt changes significantly impact agent behavior. Test variations measuring:

- Tool selection accuracy on ambiguous requests

- Parameter extraction error rates

- Response quality and completeness

- Token consumption (verbose prompts increase costs)

Model-specific considerations:

Claude Opus 4.5 responds well to prompts encouraging step-by-step reasoning before tool selection. GPT-5 benefits from explicit state tracking instructions. Grok 4 requires careful phrasing around sensitive topics to avoid content moderation triggers[4].

For broader context on reasoning model capabilities, see our detailed benchmark analysis.

Frequently Asked Questions

Which model has the best function calling accuracy for production agents?

Among the figures cited in this guide, Claude Opus 4.5 posts the strongest result: 45.9% on the SEAL coding benchmark, reaching 57.5% with optimized scaffolding[2]. Coding scores are a proxy rather than a direct measure of function calling, but they suggest fewer parameter extraction errors and more reliable tool selection in complex agent workflows, at higher per-token costs than the alternatives.

How much does context window size really matter for agents?

Context window size becomes critical when agents process long documents, maintain extended conversation histories, or orchestrate many tool calls in sequence. Grok 4’s 256K token window provides 56K more capacity than Claude Opus 4.5’s 200K, enabling agents to handle entire contracts or research papers without chunking[4].

What are the actual cost differences at production scale?

For a high-volume agent processing 100,000 requests daily, Grok 4 costs approximately $1,350/day vs $2,250/day for Claude Opus 4.5, a $27,000 difference over a 30-day month driven by 40% lower input and output token pricing[4]. Cost gaps widen at enterprise scale.

Can you mix models in a single agent system?

Yes, and many production systems do. A routing layer classifies incoming requests by complexity and directs simple queries to cost-effective models (Grok 4) while reserving premium models (Claude Opus 4.5) for complex reasoning tasks. This hybrid approach optimizes both quality and cost.

How do you handle errors when tool calls fail?

Implement retry logic with exponential backoff for transient failures (timeouts, rate limits). For persistent failures, agents should explain what failed and suggest alternatives rather than silently giving up. Log all failures with full context for debugging and monitoring.
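A minimal sketch of exponential backoff with full jitter, assuming transient failures surface as a hypothetical `TransientError`; the attempt count and delays are illustrative defaults. The injectable `sleep` parameter keeps the function testable without real waiting.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for retryable failures such as timeouts or rate limits."""


def retry_with_backoff(fn: Callable[[], T], max_attempts: int = 4,
                       base_delay: float = 0.5, max_delay: float = 8.0,
                       sleep: Callable[[float], None] = time.sleep) -> T:
    """Retry transient failures with exponential backoff and full jitter."""
    for attempt in range(max_attempts):
        try:
            return fn()
        except TransientError:
            if attempt == max_attempts - 1:
                raise  # persistent failure: let the agent explain it to the user
            delay = min(max_delay, base_delay * 2 ** attempt)  # 0.5s, 1s, 2s, ... capped
            sleep(random.uniform(0, delay))  # full jitter spreads out simultaneous retries
    raise AssertionError("unreachable")


attempts = {"count": 0}


def flaky_tool_call() -> str:
    # Hypothetical tool call that times out twice before succeeding
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise TransientError("timeout")
    return "ok"


print(retry_with_backoff(flaky_tool_call, base_delay=0.01))
```

Only errors classified as transient are retried; anything else propagates immediately, which matches the advice to explain persistent failures rather than retrying indefinitely.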

Which model is fastest for real-time agent interactions?

GPT-5 provides the best balance of speed and capability for time-sensitive interactions. Claude Opus 4.5’s reasoning mode adds latency, while Grok 4’s speed varies by deployment. For reference, Mercury 2 achieves 655 tokens/second while Gemini 3.1 Flash-Lite reaches 379 tokens/second in speed benchmarks[3].

Do all three models support structured JSON output?

Yes, Claude Opus 4.5, GPT-5, and Grok 4 all support function calling and structured output features essential for agent tool use[4]. However, consistency and error rates differ—production systems need validation layers regardless of which model you choose.

What’s the minimum viable monitoring for production agents?

Track tool selection accuracy, parameter extraction errors, response latency, token consumption, and per-request costs. Log full interaction traces (requests, tool calls, responses) for debugging. Alert on error rates above 5% or costs exceeding 2x baseline.

How often should you re-evaluate model choices?

Review model performance quarterly or when providers release significant updates. New model versions, pricing changes, or capability improvements may shift the optimal choice for your use cases. Maintain test suites enabling rapid comparative evaluation.

Can open-source models compete with Claude, GPT, and Grok for agents?

Some open models show competitive performance on specific benchmarks. GLM-4.7 leads in certain agentic tasks, while DeepSeek R1 challenges proprietary models in reasoning. However, function calling consistency and production reliability often favor established providers.

What’s the biggest mistake teams make deploying production agents?

Choosing models based on general benchmarks rather than testing actual agent workflows with representative data. A model excelling at coding or reasoning may struggle with consistent function calling, error recovery, or structured output generation under production conditions.

How do you prevent agents from hallucinating tool capabilities?

Provide explicit, well-structured tool definitions in system prompts. Implement validation layers that reject tool calls not matching defined schemas. Log and alert on hallucinated parameters or non-existent function names to identify prompt issues early.

Conclusion

Building production AI agents in 2026 requires matching model capabilities to specific workflow demands rather than defaulting to general benchmark leaders. Claude Opus 4.5 delivers superior performance for complex reasoning chains and multi-step tool orchestration, justified by its 45.9% coding benchmark accuracy and 64K output token capacity despite premium pricing[2][4]. GPT-5 provides balanced speed-to-reasoning trade-offs suitable for moderate complexity tasks requiring fast response times. Grok 4 offers the most cost-effective solution with 40% lower input costs and a 256K context window, ideal for high-volume agents processing extended interaction histories[4].

The key decision factors are task complexity (simple queries vs multi-step reasoning), response latency requirements (real-time vs batch processing), context window needs (short conversations vs long documents), and total cost of ownership across millions of API calls. Most production systems benefit from hybrid approaches that route requests across models based on complexity classification, optimizing both quality and cost.

Actionable next steps:

- Profile your actual workflows: Measure typical context consumption, output lengths, tool call patterns, and error rates with representative data

- Build comparative test suites: Run identical prompts and tool definitions across Claude Opus 4.5, GPT-5, and Grok 4 to measure real-world differences

- Implement robust monitoring: Track tool selection accuracy, parameter extraction errors, latency, token consumption, and per-request costs from day one

- Start with hybrid routing: Deploy a classification layer directing simple queries to cost-effective models while reserving premium options for complex reasoning

- Iterate on prompt engineering: Test variations measuring impact on accuracy, latency, and cost before full rollout

The MULTIBLY platform provides access to 300+ AI models including Claude Opus 4.5, GPT-5, and Grok 4, enabling side-by-side comparison and rapid experimentation for teams building production agents. Compare responses, test different approaches, and find the optimal model mix for your specific use cases—all from a single subscription.

For teams evaluating broader AI strategies, explore our analysis of enterprise AI adoption patterns and long-context model capabilities to understand how leading organizations deploy AI at scale.

References

[1] Anthropic – https://anthropic.com

[2] OpenAI – https://openai.com

[3] X.AI – https://x.ai

[4] Combined benchmark data from Anthropic, OpenAI, and X.AI sources