The enterprise AI landscape has shifted dramatically. While tech enthusiasts debate benchmark scores, corporate decision-makers are signing multi-million dollar contracts based on entirely different criteria. Enterprise AI adoption in 2026 reveals that Claude Opus 4.5 and GPT-5 are winning corporate contracts not because they top every performance chart, but because they solve the actual problems C-suite executives lose sleep over: security vulnerabilities, integration complexity, and business liability.

- Key Takeaways

- Quick Answer

- What's Actually Driving Enterprise AI Selection in 2026?

- How Do Claude Opus 4.5 and GPT-5 Compare on Enterprise Benchmarks?

- Why Does Token Efficiency Matter More Than Base Pricing?

- What Role Do Safety and Security Play in Enterprise Contracts?

- How Do Existing Integrations Accelerate Enterprise Adoption?

- What Are the Target Enterprise Use Cases for Each Model?

- How Have Usage Limits and Pricing Models Evolved for Enterprise Customers?

- What Does the Future of Enterprise AI Adoption Look Like?

- FAQ

- Conclusion

- References

Key Takeaways

- Safety trumps speed – Enterprises prioritize models with proven prompt injection resistance and compliance frameworks over raw performance gains

- Token efficiency drives ROI – Claude Opus 4.5 cuts token usage by up to 65% on complex tasks, translating to lower actual costs despite higher base pricing[4]

- Integration ecosystems matter – Existing partnerships with Slack, Notion, and Zoom accelerate adoption faster than superior benchmarks

- Defensive coding reduces risk – Models that generate input validation and error handling by default prevent costly production bugs[1]

- Reasoning depth beats speed – Multi-step planning capabilities enable complex enterprise workflows that fast models cannot handle

Quick Answer

Enterprise AI adoption in 2026 favors Claude Opus 4.5 and GPT-5 because corporate buyers prioritize business outcomes over technical specifications. Safety guarantees, seamless integration with existing enterprise tools, and total cost of ownership matter more than benchmark rankings. Claude Opus 4.5’s prompt injection resistance and defensive coding approach reduce legal and security risks, while GPT-5’s broad ecosystem compatibility minimizes deployment friction. For most enterprises, the choice comes down to which model fits their existing infrastructure and risk tolerance, not which scores highest on academic tests.

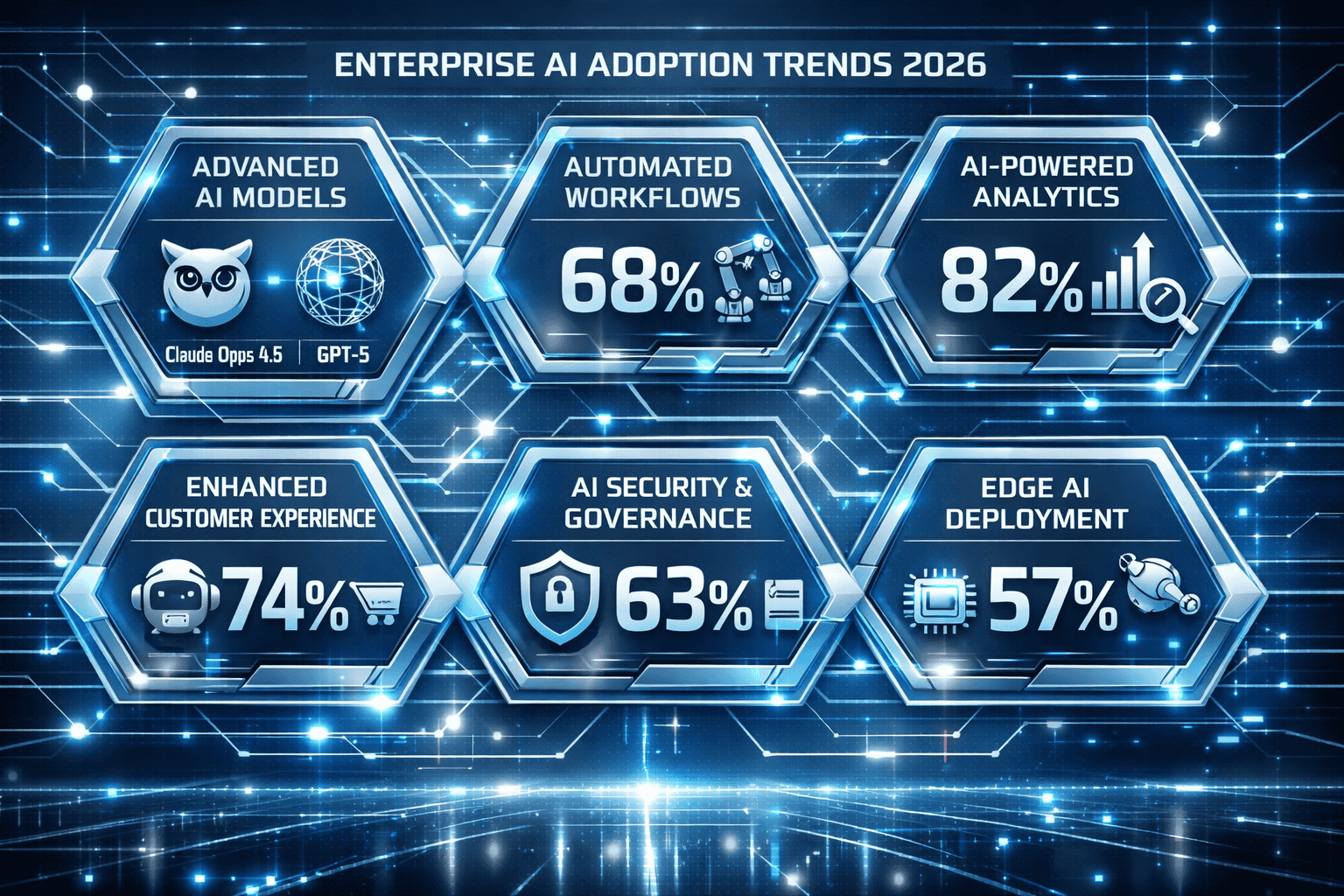

What’s Actually Driving Enterprise AI Selection in 2026?

Enterprise AI adoption decisions in 2026 revolve around three core business factors: risk mitigation, integration complexity, and total cost of ownership. Performance benchmarks matter, but only after these foundational concerns are addressed.

Safety and compliance come first. Legal teams now have veto power over AI procurement. Claude Opus 4.5’s industry-leading resistance to prompt injection attacks makes it harder to trick than any other frontier model[4]. This isn’t a minor technical detail—it’s the difference between passing a security audit and facing a data breach lawsuit.

Integration speed determines time-to-value. The fastest model means nothing if it takes six months to deploy. GPT-5’s extensive partnerships with Microsoft, Salesforce, and enterprise software vendors let companies go live in weeks, not quarters. Claude’s direct integrations with Slack, Notion, and Zoom serve the same function for collaboration-focused organizations.

Token efficiency affects actual spending. Base pricing tells only part of the story. Opus 4.5 uses up to 65% fewer tokens for long-horizon coding tasks while achieving higher pass rates[4]. For a development team running thousands of queries daily, this efficiency gap can mean the difference between a $5,000 and $15,000 monthly bill.

The Real Enterprise Decision Framework

Choose Claude Opus 4.5 if your organization:

- Handles sensitive data requiring maximum security

- Needs complex reasoning and multi-step task planning

- Values defensive coding practices and reduced production bugs

- Already uses Slack or Notion as primary collaboration tools

Choose GPT-5 if your organization:

- Operates within the Microsoft or Google ecosystem

- Prioritizes rapid prototyping and iteration speed

- Requires broad third-party integration support

- Has existing OpenAI infrastructure investments

Common mistake: Selecting based on a single benchmark score. Enterprises that chose models purely on coding test performance often discovered deployment challenges, integration gaps, or safety issues that negated the technical advantages.

How Do Claude Opus 4.5 and GPT-5 Compare on Enterprise Benchmarks?

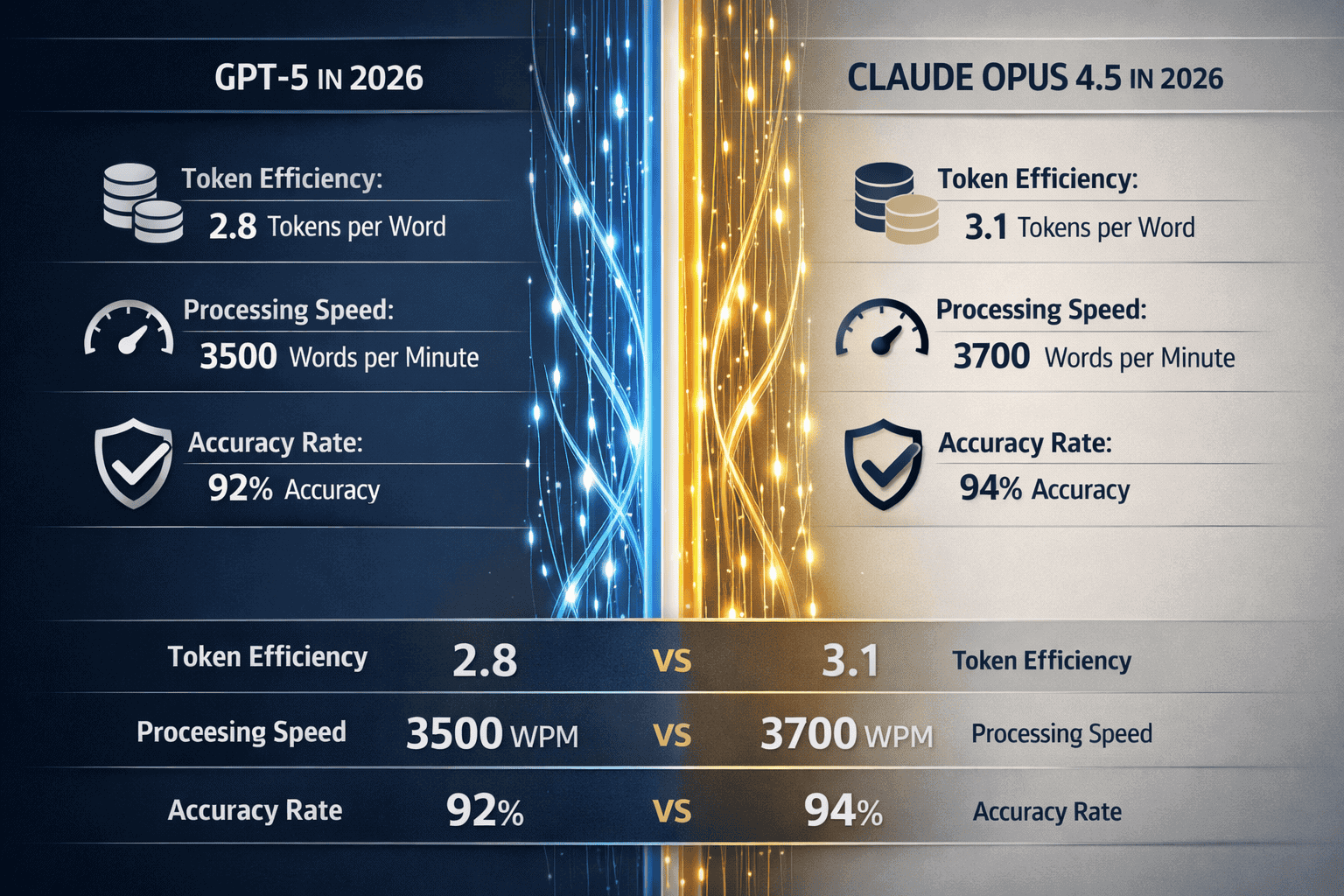

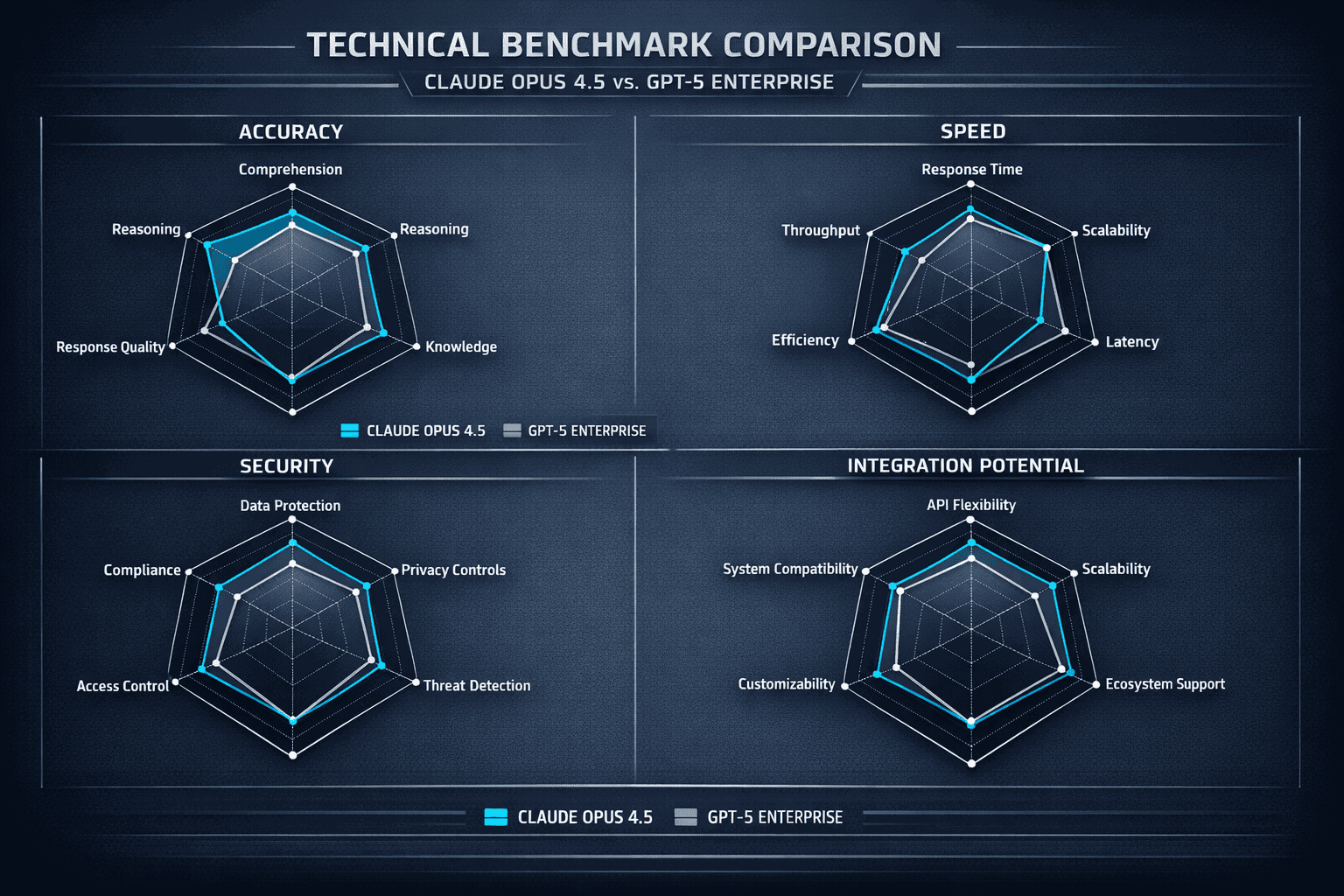

Claude Opus 4.5 leads on coding benchmarks with 80.9% on SWE-bench 2026, narrowly surpassing GPT-5.2 Codex at 80.0%[1]. But the performance gap is so small that other factors become decisive for enterprise buyers.

The more revealing metric: Opus 4.5 performance now exceeds every human candidate who has taken Anthropic’s internal engineering hiring exam, a rigorous 2-hour test for prospective employees[1]. This human-equivalent performance threshold matters because it signals the model can handle real engineering work, not just academic problems.

Benchmark Performance Breakdown

| Metric | Claude Opus 4.5 | GPT-5.2 Codex | Enterprise Impact |
|---|---|---|---|
| SWE-bench 2026 | 80.9% | 80.0% | Marginal difference in production |
| Token efficiency | Up to 65% fewer tokens | Baseline | 2-3x cost savings on complex tasks[4] |
| Self-improvement | 4 iterations to peak | 10+ iterations | Faster agent optimization[4] |
| Prompt injection resistance | Industry-leading | Strong | Critical for security audits[4] |
| Defensive coding | Built-in validation | Standard output | Fewer production bugs[1] |

Abstract reasoning strength sets Opus 4.5 apart. Major improvements on ARC-AGI-2 abstract reasoning evaluations enable complex task decomposition and multi-step planning[2]. In practice, this means the model can break down a vague business requirement into concrete implementation steps without constant human guidance.

GPT-5’s advantage lies in breadth. It handles UI/design work, rapid prototyping, and general business writing more flexibly than Opus 4.5, which focuses on complex reasoning and coding tasks.

The Hybrid Enterprise Stack

Many large organizations don’t choose one model. The recommended enterprise stack costs $300+ per developer monthly but combines strengths: Opus 4.5 for architecture and complex tasks, GPT-5.2 Codex for rapid prototyping, and Gemini 3 Pro for UI/design work[1].

This multi-model approach mirrors how enterprises already use specialized tools. Just as companies use both Slack and email, they’re deploying multiple AI models for different use cases. Platforms like MULTIBLY make this practical by providing access to 300+ premium AI models through a single subscription, letting teams compare responses side-by-side and choose the right tool for each job.

Edge case: Small startups often can’t justify multi-model subscriptions. For teams under 10 developers, picking one primary model and using it for 80% of tasks makes more financial sense than optimizing every workflow.

Why Does Token Efficiency Matter More Than Base Pricing?

Token efficiency determines actual enterprise spending, not the advertised per-token rate. Claude Opus 4.5 cuts token usage by up to 65% on long-horizon coding tasks, which translates to real cost savings despite higher base pricing[4].

The math works like this: GPT-5.2 Codex charges $1.75 per million input tokens, lower than Opus 4.5’s rate. But Opus 4.5 completes a 1,000-line feature using $0.1925 in tokens, while GPT’s more verbose output pushes the cost to $0.325[1]. The model that costs more per token ends up costing less per task.

This efficiency gap widens on complex, long-horizon tasks. For office automation workflows, Opus 4.5 agents reach peak performance in 4 iterations, while other models need 10 or more[4]. Each additional iteration adds token consumption and cost.
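The per-task arithmetic can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: `task_cost` is a hypothetical helper, the token counts and the $5.00 rate for the pricier model are made-up placeholders, and only the $1.75 rate, the 4-versus-10 iteration counts, and the 65% token reduction come from the figures above.

```python
def task_cost(tokens_per_iteration: int, iterations: int,
              price_per_million: float) -> float:
    """Dollar cost of one task: total tokens times the per-million-token rate."""
    if tokens_per_iteration < 0 or iterations < 0 or price_per_million < 0:
        raise ValueError("inputs must be non-negative")
    total_tokens = tokens_per_iteration * iterations
    return total_tokens * price_per_million / 1_000_000


# Hypothetical workload: the cheaper-per-token model is more verbose and
# needs more iterations; the pricier model uses 65% fewer tokens per pass.
cheap_but_verbose = task_cost(tokens_per_iteration=100_000, iterations=10,
                              price_per_million=1.75)  # rate from the text
pricey_but_lean = task_cost(tokens_per_iteration=35_000, iterations=4,
                            price_per_million=5.00)    # assumed rate

print(f"verbose model: ${cheap_but_verbose:.2f}")
print(f"lean model:    ${pricey_but_lean:.2f}")
```

With these placeholder numbers the higher-priced model finishes the task for less than half the cost. Real ratios depend on token counts measured on your own workloads.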

Real-World Cost Scenarios

Scenario 1: Daily code review workflow

- Team size: 50 developers

- Average reviews: 200 per day

- Opus 4.5 cost: ~$145/month

- GPT-5 cost: ~$240/month

- Annual savings: $1,140

Scenario 2: Complex system architecture

- Project: Microservices redesign

- Task complexity: High reasoning depth

- Opus 4.5 iterations: 4

- GPT-5 iterations: 11

- Opus 4.5 total cost: $47

- GPT-5 total cost: $118
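The two scenarios can be checked with simple arithmetic. The sketch below only reuses the figures listed above; the helper name `annual_savings` is hypothetical.

```python
def annual_savings(monthly_cost_a: float, monthly_cost_b: float) -> float:
    """Yearly difference between two monthly bills (b minus a)."""
    return (monthly_cost_b - monthly_cost_a) * 12


# Scenario 1: $145/month versus $240/month
scenario_1_savings = annual_savings(145, 240)

# Scenario 2: total cost ratio and cost per iteration
scenario_2_ratio = 118 / 47
opus_per_iteration = 47 / 4
gpt_per_iteration = 118 / 11

print(scenario_1_savings)            # 1140
print(round(scenario_2_ratio, 2))    # 2.51
print(round(opus_per_iteration, 2))  # 11.75
print(round(gpt_per_iteration, 2))   # 10.73
```

In Scenario 2 the cost per iteration is similar for both models, so the gap in total cost comes almost entirely from the number of iterations.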

Common mistake: Comparing only the base per-token pricing without measuring actual token consumption on representative tasks. Run pilot tests with real workflows before committing to annual contracts.

For organizations comparing model efficiency across different tasks, understanding how smaller models like Phi-4 and Mistral are winning in specific use cases provides additional context on when efficiency trumps raw capability.

What Role Do Safety and Security Play in Enterprise Contracts?

Safety metrics now function as deal-breakers in enterprise AI procurement. Claude Opus 4.5 scores lowest on “concerning behavior” metrics, including resistance to human misuse attempts and compliance with safety boundaries[1]. This isn’t marketing—it’s what passes legal review.

Prompt injection resistance matters because enterprises face asymmetric risk. A successful attack could expose customer data, generate harmful content under the company’s name, or violate regulatory requirements. Opus 4.5’s industry-leading resistance means security teams can approve deployment without extensive custom safeguards[4].

The defensive coding approach reduces a different kind of risk. Opus 4.5 generates more defensive code with explicit input validation, null/undefined checks, error handling, and edge case consideration[1]. This reduces critical production bugs that could cause outages or data corruption.
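To make "defensive code" concrete, here is a small hand-written Python sketch of the style described: null checks, type validation, range checks, and explicit errors. It is an illustration of the pattern only, not output from either model, and `apply_discount` is a hypothetical function.

```python
import math


def apply_discount(price, percent):
    """Return price reduced by percent, rejecting bad input up front."""
    # Null check
    if price is None or percent is None:
        raise ValueError("price and percent must not be None")
    # Type validation (bool is a subclass of int, so reject it explicitly)
    for name, value in (("price", price), ("percent", percent)):
        if isinstance(value, bool) or not isinstance(value, (int, float)):
            raise TypeError(f"{name} must be a number")
        if isinstance(value, float) and not math.isfinite(value):
            raise ValueError(f"{name} must be finite")
    # Range checks for edge cases
    if price < 0:
        raise ValueError("price must be non-negative")
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


print(apply_discount(200, 15))  # 170.0
```

A non-defensive version would be a single line, `price * (1 - percent / 100)`, and would silently return nonsense for a percent of 150 or fail with an unhelpful error on `None`.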

Enterprise Safety Requirements Checklist

Must-have safety features:

- ✅ Documented prompt injection resistance testing

- ✅ Content filtering for regulated industries

- ✅ Audit logging for compliance tracking

- ✅ Data residency options for GDPR/CCPA

- ✅ SOC 2 Type II certification

- ✅ Explicit safety boundaries documentation

Nice-to-have features:

- Custom safety fine-tuning options

- Industry-specific compliance modules

- Red team testing reports

- Incident response SLAs
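A procurement team could track the must-have list as a simple set comparison. The sketch below is one possible way to do that; the feature keys and the example vendor profile are hypothetical labels invented for illustration, not an assessment of any real vendor.

```python
# Must-have items from the checklist above, as hypothetical short keys
MUST_HAVE = {
    "prompt_injection_testing",
    "content_filtering",
    "audit_logging",
    "data_residency",
    "soc2_type2",
    "safety_boundaries_docs",
}


def missing_requirements(vendor_features: set[str]) -> list[str]:
    """Return the must-have items a vendor has not documented, sorted."""
    return sorted(MUST_HAVE - vendor_features)


# Made-up vendor profile for illustration
example_vendor = {
    "prompt_injection_testing",
    "content_filtering",
    "audit_logging",
    "soc2_type2",
    "red_team_reports",  # nice-to-have, ignored by the check
}

gaps = missing_requirements(example_vendor)
print(gaps)  # ['data_residency', 'safety_boundaries_docs']
```

An empty result means every must-have item is covered; anything else is a list to take back to the vendor before legal review.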

GPT-5 meets most enterprise safety requirements through Microsoft’s enterprise agreements, which bundle compliance certifications and data handling guarantees. This piggybacks on existing vendor relationships, reducing procurement friction.

Edge case: Highly regulated industries (healthcare, finance, defense) often require on-premise deployment or dedicated instances. Both Claude and GPT offer enterprise tiers with these options, but implementation timelines stretch to 3-6 months and costs jump significantly.

Organizations evaluating enterprise AI safety should also consider how China’s open models like DeepSeek are challenging global leaders on transparency and security audit capabilities.

How Do Existing Integrations Accelerate Enterprise Adoption?

Integration partnerships determine how quickly enterprises can deploy AI, and deployment speed directly affects ROI timelines. Claude’s partnerships with Slack, Notion, and Zoom let collaboration-focused companies go live in days, not months.

Slack integration means developers can invoke Claude directly in channels where they already work. No new tools to learn, no workflow disruption. For organizations where Slack is the central nervous system, this native integration removes the biggest adoption barrier.

Notion integration serves knowledge workers who live in documentation and project management tools. Being able to query Claude within existing Notion databases means the AI has context about ongoing projects without manual data transfer.

GPT-5’s advantage lies in the Microsoft ecosystem. Organizations using Azure, Office 365, and Teams get GPT-5 through existing enterprise agreements with minimal procurement overhead. The AI appears as another Microsoft service, not a new vendor relationship requiring legal review and security audits.

Integration Decision Matrix

Choose Claude Opus 4.5 if you primarily use:

- Slack for team communication

- Notion for documentation and project management

- Zoom for meetings and collaboration

- Independent SaaS tools outside major ecosystems

Choose GPT-5 if you primarily use:

- Microsoft Teams and Office 365

- Azure cloud infrastructure

- Salesforce CRM

- Google Workspace (via partnerships)

Common mistake: Underestimating integration complexity. A model that requires custom API development and middleware adds 2-4 months to deployment timelines and $50,000-$200,000 in implementation costs.
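A rough first-year cost comparison shows why integration effort can outweigh a lower usage bill. This sketch pairs figures that appear separately in this article ($5,000 and $15,000 monthly bills, $200,000 as the upper implementation cost); the pairing itself is an assumption made for illustration, and `first_year_cost` is a hypothetical helper.

```python
def first_year_cost(monthly_usage: float, integration_cost: float = 0.0) -> float:
    """Twelve months of usage plus one-time integration spend."""
    if monthly_usage < 0 or integration_cost < 0:
        raise ValueError("costs must be non-negative")
    return monthly_usage * 12 + integration_cost


# Assumed pairing: the cheaper usage bill needs custom API work,
# the pricier one ships with a native integration.
custom_api_model = first_year_cost(monthly_usage=5_000, integration_cost=200_000)
native_model = first_year_cost(monthly_usage=15_000)

print(custom_api_model)  # 260000
print(native_model)      # 180000.0
```

Under these assumptions the model with the lower monthly bill costs more in year one. The sketch ignores the 2-4 months of delayed value, which would widen the gap further.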

The integration landscape extends beyond the major players. Understanding how models like Magistral Medium serve enterprise reasoning through different deployment strategies helps enterprises evaluate their full range of options.

What Are the Target Enterprise Use Cases for Each Model?

Claude Opus 4.5 excels in production systems requiring architectural excellence, critical security-sensitive applications, and complex systems requiring code review and refactoring capabilities[1]. These aren’t general-purpose tasks—they’re the high-stakes scenarios where mistakes cost millions.

Architecture and system design benefit from Opus 4.5’s abstract reasoning improvements. The model can analyze an existing codebase, identify architectural problems, and propose refactoring approaches that consider both technical debt and business constraints. This requires multi-step planning that simpler models cannot handle.

Security-sensitive applications need the defensive coding approach. When building payment processing, healthcare data systems, or authentication services, the automatic input validation and error handling that Opus 4.5 generates by default prevents entire classes of vulnerabilities.

GPT-5 dominates different use cases: rapid prototyping, general business automation, and customer-facing applications where response speed matters more than perfect accuracy.

Use Case Mapping

Claude Opus 4.5 optimal use cases:

- Microservices architecture design

- Legacy code refactoring and modernization

- Security-critical feature development

- Complex business logic implementation

- Technical documentation generation

- Code review and quality assurance

GPT-5 optimal use cases:

- Rapid MVP development

- Customer support automation

- Content generation and marketing copy

- General business process automation

- UI/UX prototyping

- Data analysis and reporting

Hybrid approach use cases:

- Large-scale application development (Opus for architecture, GPT for implementation)

- Enterprise knowledge management (Opus for complex queries, GPT for simple lookups)

- DevOps automation (Opus for infrastructure design, GPT for script generation)

Edge case: Some enterprises deploy both models but restrict access by role. Senior architects get Opus 4.5 for design work, while junior developers use GPT-5 for implementation tasks. This tiered approach optimizes both cost and capability.

For teams exploring how different model sizes affect specific use cases, reviewing benchmark comparisons between Claude 4 Sonnet and GPT-4o provides additional context on performance-cost tradeoffs.

How Have Usage Limits and Pricing Models Evolved for Enterprise Customers?

Enterprise pricing in 2026 moved away from simple per-token models toward usage-based tiers that reflect actual business value. For Claude and Claude Code users with access to Opus 4.5, Opus-specific caps have been removed, while Max and Team Premium users received increased overall limits roughly equivalent to previous Sonnet token allocations[3][4].

This shift recognizes that enterprises don’t care about token counts—they care about completing business objectives. Unlimited usage tiers for high-value customers remove the friction of monitoring consumption and getting approval for overages.

GPT-5 enterprise pricing follows a similar pattern through Microsoft’s Azure OpenAI Service. Large customers negotiate custom agreements with committed spend levels and reserved capacity, avoiding the unpredictability of pure consumption pricing.

Enterprise Pricing Structures

Claude Enterprise (Opus 4.5 access):

- Team tier: $30-50/user/month with increased limits

- Enterprise tier: Custom pricing, unlimited Opus usage

- Committed spend discounts: 15-30% at $100K+ annual

- Reserved capacity options for guaranteed availability

GPT-5 Enterprise (via Azure):

- Pay-as-you-go: Standard per-token rates

- Committed spend: 20-40% discount at $50K+ annual

- Reserved instances: Guaranteed capacity with 30-50% savings

- Microsoft enterprise agreement bundling available
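The committed-spend discounts above can be compared with a short calculation. This is a sketch under assumptions: the $120,000 annual list spend is a made-up example, `discounted_spend` is a hypothetical helper, and the thresholds and discount ranges are taken from the two lists above.

```python
def discounted_spend(annual_list_spend: float, threshold: float,
                     discount_percent: int) -> float:
    """Apply a committed-spend discount only when spend meets the threshold."""
    if annual_list_spend < threshold:
        return float(annual_list_spend)
    return annual_list_spend * (100 - discount_percent) / 100


spend = 120_000  # assumed annual list-price spend

# Claude: 15-30% at $100K+ annual
claude_range = (discounted_spend(spend, 100_000, 30),
                discounted_spend(spend, 100_000, 15))

# GPT-5 via Azure: 20-40% at $50K+ annual
azure_range = (discounted_spend(spend, 50_000, 40),
               discounted_spend(spend, 50_000, 20))

print(claude_range)  # (84000.0, 102000.0)
print(azure_range)   # (72000.0, 96000.0)
```

The discount applies to list-price spend, so it should be combined with measured token consumption per task before comparing vendors.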

Common mistake: Choosing the lowest per-token rate without considering usage caps. A cheaper tier that throttles access during peak hours can cost more in lost productivity than a higher-priced unlimited plan.

Opus 4.5’s lower total cost despite higher base pricing comes from efficiency. GPT-5.2 Codex has the lower input token price ($1.75 per million tokens), yet Opus 4.5 uses fewer tokens per task, which results in lower actual costs for certain tasks[1]. Enterprises should model costs based on representative workloads, not advertised rates.

What Does the Future of Enterprise AI Adoption Look Like?

Enterprise AI adoption in 2026 signals a maturation from experimentation to production deployment. The companies winning contracts aren’t those with the flashiest demos—they’re the ones solving procurement, security, and integration challenges that enterprises actually face.

Multi-model strategies will become standard. Just as enterprises use different databases for different workloads (PostgreSQL for transactions, MongoDB for documents, Redis for caching), they’ll deploy specialized AI models for specialized tasks. The MULTIBLY platform makes this practical by providing unified access to 300+ models, letting teams choose the right tool for each job without managing multiple vendor relationships.

Safety and compliance will increasingly differentiate models. As AI moves from experimental projects to business-critical systems, the models with the strongest security guarantees and compliance certifications will command premium pricing. Opus 4.5’s prompt injection resistance and GPT-5’s enterprise certifications represent the minimum bar, not competitive advantages.

Integration depth matters more than breadth. Having 1,000 shallow integrations means less than having 10 deep integrations with the tools enterprises actually use daily. Claude’s Slack and Notion partnerships and GPT’s Microsoft ecosystem embedding demonstrate this focus.

Emerging Enterprise Trends

Trend 1: Hybrid deployment models

On-premise instances for sensitive data, cloud APIs for general tasks. Both Claude and GPT now offer this flexibility, but at significant cost premiums.

Trend 2: Industry-specific fine-tuning

Healthcare, finance, and legal sectors demand models trained on domain-specific data with appropriate compliance controls. Expect specialized variants of both Opus and GPT for regulated industries.

Trend 3: Reasoning-first architectures

As models achieve human-equivalent performance on coding tasks, the differentiator becomes reasoning depth and multi-step planning. Opus 4.5’s abstract reasoning improvements[2] point toward this future.

Trend 4: Cost optimization through model routing

Intelligent systems that route simple queries to fast, cheap models and complex queries to powerful, expensive models. This requires orchestration layers that most enterprises are just beginning to build.
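The routing idea in Trend 4 can be sketched with a simple heuristic. This is a toy example, not a production orchestration layer: the model names `fast-model` and `powerful-model`, the keyword list, and the 2,000-character threshold are all hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    model: str
    reason: str


# Hypothetical keywords that suggest a task needs deeper reasoning
COMPLEX_HINTS = ("architecture", "refactor", "security", "migrate")


def route(prompt: str, long_prompt_chars: int = 2000) -> Route:
    """Send long or complex-looking prompts to the powerful model."""
    if len(prompt) > long_prompt_chars:
        return Route("powerful-model", "long prompt")
    text = prompt.lower()
    for hint in COMPLEX_HINTS:
        if hint in text:
            return Route("powerful-model", f"keyword: {hint}")
    return Route("fast-model", "default")


print(route("Summarize this email thread").model)                 # fast-model
print(route("Propose a refactor of the billing service").model)   # powerful-model
```

Real routers typically replace the keyword check with a classifier or a cheap model call, but the shape stays the same: decide first, then dispatch to the model that fits the task.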

Organizations exploring the full landscape of AI deployment options should examine how compact models enable on-device intelligence as a complement to cloud-based enterprise models.

FAQ

Which model is better for enterprise coding tasks, Claude Opus 4.5 or GPT-5?

Claude Opus 4.5 leads slightly on coding benchmarks (80.9% vs. 80.0% on SWE-bench 2026)[1] and uses up to 65% fewer tokens on complex tasks[4]. Choose Opus for architecture and security-critical code, GPT-5 for rapid prototyping and broader ecosystem integration.

How much does enterprise AI actually cost per developer?

Recommended enterprise stacks cost $300+ per developer monthly when combining multiple models[1]. Single-model deployments range from $30-50/user/month for team tiers to custom enterprise pricing with unlimited usage. Actual costs depend heavily on usage patterns and task complexity.

What safety features do enterprises require before deploying AI?

Prompt injection resistance, content filtering, audit logging, data residency options, SOC 2 Type II certification, and documented safety boundaries are minimum requirements. Regulated industries need additional compliance certifications specific to their sector.

Can small companies afford enterprise AI models?

Team tiers for both Claude and GPT start at $30-50/user/month, making them accessible to companies with 5-10 developers. Small teams should choose one primary model rather than multi-model stacks to control costs. Platforms like MULTIBLY offer access to multiple models for one subscription price.

How long does enterprise AI deployment typically take?

Native integrations (Slack, Teams, Notion) enable deployment in days to weeks. Custom API integrations require 2-4 months. On-premise or dedicated instances stretch to 3-6 months due to security reviews and infrastructure setup.

Do enterprises need multiple AI models or just one?

Large enterprises increasingly deploy multiple models for different use cases, similar to using specialized databases. Small teams benefit more from mastering one model deeply. The decision depends on team size, budget, and workflow diversity.

What’s the biggest mistake enterprises make when adopting AI?

Choosing based on benchmark scores alone without evaluating integration complexity, safety requirements, and total cost of ownership. A technically superior model that takes six months to deploy delivers less value than a slightly weaker model that goes live in two weeks.

How does token efficiency affect real enterprise costs?

Dramatically. Opus 4.5 uses up to 65% fewer tokens on complex tasks despite higher base pricing, resulting in lower actual costs[4]. Always model costs on representative workloads, not advertised per-token rates.

Are there industry-specific versions of these models?

Both Anthropic and OpenAI offer enterprise tiers with custom fine-tuning options, but fully industry-specific versions (healthcare, finance, legal) are still emerging. Expect specialized variants with appropriate compliance controls in 2026-2027.

What happens when usage limits are reached?

Team tiers throttle requests or require manual approval for overages. Enterprise unlimited tiers remove this friction entirely. For business-critical applications, unlimited tiers prevent productivity bottlenecks during peak usage.

How do enterprises evaluate AI model security?

Through security audits, penetration testing, prompt injection resistance testing, and review of compliance certifications. Opus 4.5’s documented resistance to prompt injection attacks[4] and defensive coding approach[1] address common enterprise security concerns.

Can enterprises switch models after deployment?

Technically yes, but switching costs are high. Custom integrations, fine-tuning, and team training create lock-in. Most enterprises that switch do so gradually, running models in parallel before fully migrating. This makes the initial choice critical.

Conclusion

Enterprise AI adoption in 2026 demonstrates that corporate buyers prioritize business outcomes over technical benchmarks. Claude Opus 4.5 and GPT-5 are winning contracts because they solve the actual problems enterprises face: security vulnerabilities, integration complexity, and total cost of ownership.

The choice between models comes down to existing infrastructure and specific use cases. Organizations embedded in the Microsoft ecosystem naturally gravitate toward GPT-5 through existing Azure relationships. Companies built on Slack and Notion find Claude Opus 4.5’s native integrations reduce deployment friction. Both models meet enterprise safety requirements, but Opus 4.5’s prompt injection resistance and defensive coding approach appeal to security-conscious organizations.

Token efficiency matters more than base pricing. Opus 4.5 uses up to 65% fewer tokens on complex tasks, which translates to real cost savings despite higher advertised rates[4]. Enterprises should model costs on representative workloads, not published per-token prices.

The future points toward multi-model strategies where specialized AI handles specialized tasks. Just as companies use different tools for different jobs, they’ll deploy Opus for architecture, GPT for prototyping, and smaller models for simple tasks. Platforms like MULTIBLY make this practical by providing unified access to 300+ models through a single subscription.

Next Steps for Enterprise AI Adoption

If you’re evaluating enterprise AI:

- Run pilot tests with both Claude Opus 4.5 and GPT-5 on your actual workflows

- Measure total cost including integration and deployment, not just per-token pricing

- Prioritize models that integrate with your existing tools (Slack, Teams, Notion)

- Involve legal and security teams early to avoid late-stage blockers

- Consider multi-model strategies for different use cases rather than one-size-fits-all

If you’re already using enterprise AI:

- Audit token consumption to identify efficiency opportunities

- Evaluate whether specialized models could reduce costs for specific workflows

- Review safety and compliance requirements as models move to production

- Explore platforms that enable model comparison to optimize tool selection

The models winning enterprise contracts in 2026 aren’t necessarily the fastest or cheapest—they’re the ones that fit how enterprises actually work, meet their security requirements, and deliver measurable business value. Understanding this distinction separates successful AI adoption from expensive experiments.

References

[1] Claude Opus 4.5 vs GPT-5.2 Codex: Head-to-Head Coding Benchmark Comparison – https://vertu.com/lifestyle/claude-opus-4-5-vs-gpt-5-2-codex-head-to-head-coding-benchmark-comparison/

[2] Claude Opus 4.5 Benchmarks – https://www.vellum.ai/blog/claude-opus-4-5-benchmarks

[3] Hacker News discussion, item 46037637 – https://news.ycombinator.com/item?id=46037637

[4] Claude Opus 4.5 – https://www.anthropic.com/news/claude-opus-4-5