OpenAI’s gpt-oss Revolution: Deploying 120B Open Reasoning Models for Enterprise Customization

OpenAI’s gpt-oss release represents a fundamental shift in how organizations access GPT-class reasoning capabilities. Released under the Apache 2.0 license, the gpt-oss-120b and gpt-oss-20b models deliver performance close to OpenAI’s o4-mini through an open-weight architecture that enterprises can deploy, customize, and fine-tune on their own infrastructure without vendor lock-in[3][4].

This marks OpenAI’s first open-weight language model release since GPT-2 in 2019, ending more than six years of proprietary-only deployment. For organizations constrained by data residency requirements, compliance mandates, or customization needs that proprietary APIs cannot satisfy, gpt-oss provides a viable alternative that matches or exceeds o4-mini on several reasoning benchmarks while running on accessible hardware[3].

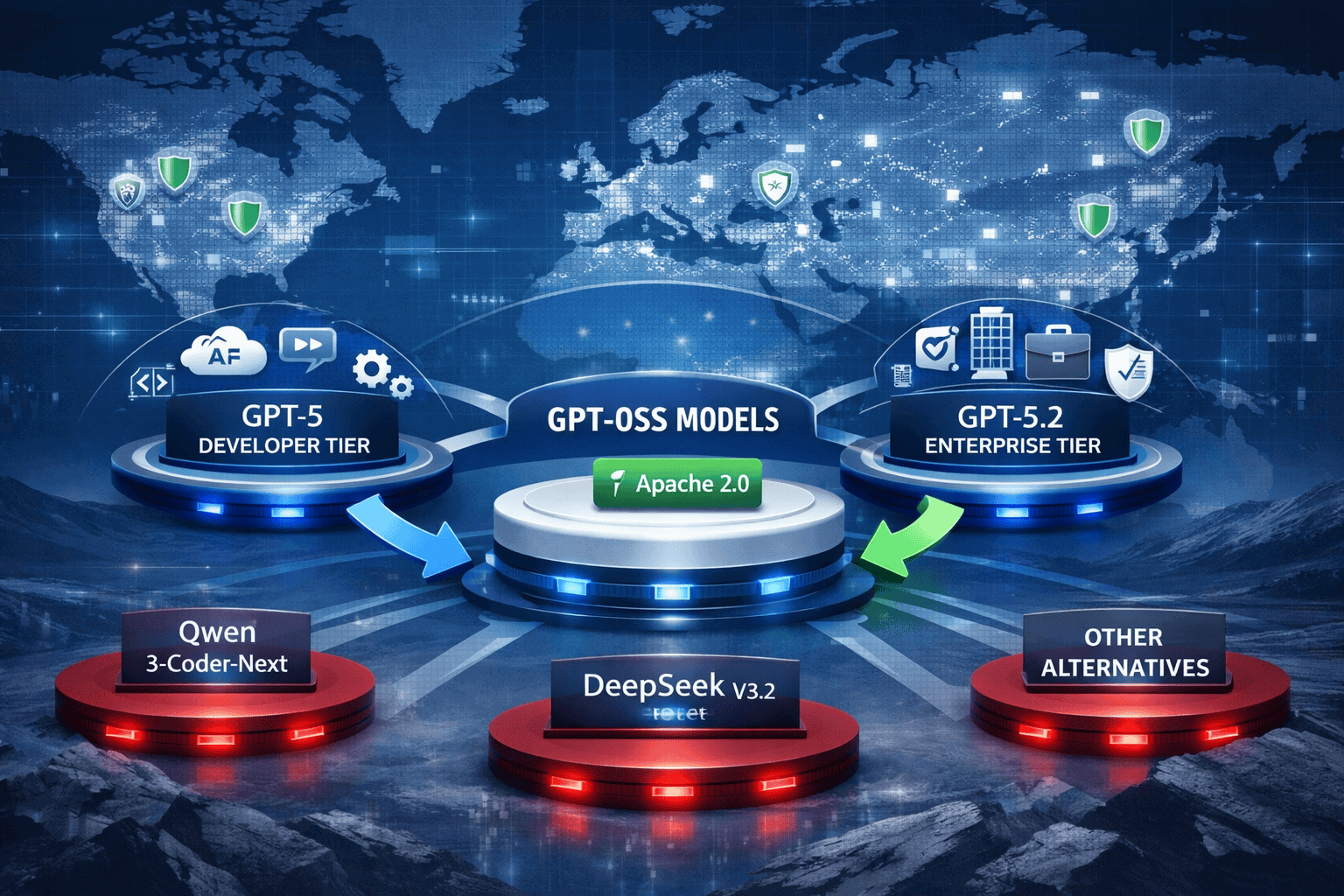

The release positions OpenAI within the open-source ecosystem alongside competitors like Meta’s Llama, Mistral, and Alibaba’s Qwen3, while maintaining differentiation through integrated safety tooling and enterprise-grade performance. Organizations can now choose between proprietary GPT-5 API access, enterprise GPT-5.2 contracts, or self-hosted gpt-oss deployment based on their specific infrastructure and compliance requirements[1].

- Key Takeaways

- Quick Answer

- What Makes gpt-oss-120b Different from OpenAI's Proprietary Models?

- How Does the Two-Tier gpt-oss Architecture Work?

- What Reasoning Performance Can Enterprises Expect from gpt-oss Models?

- How Do Organizations Deploy and Fine-Tune gpt-oss for Enterprise Use?

- What Are the Cost Implications of Self-Hosting gpt-oss vs Using Proprietary APIs?

- How Does gpt-oss Compare to Competing Open Models Like DeepSeek, Qwen3, and Llama?

- What Safety and Compliance Features Does gpt-oss Provide for Enterprise Deployment?

- How Does OpenAI's 2026 Strategy Position gpt-oss Against Proprietary Offerings?

- What Are the Practical Next Steps for Enterprises Considering gpt-oss Deployment?

- Frequently Asked Questions

- Conclusion

Key Takeaways

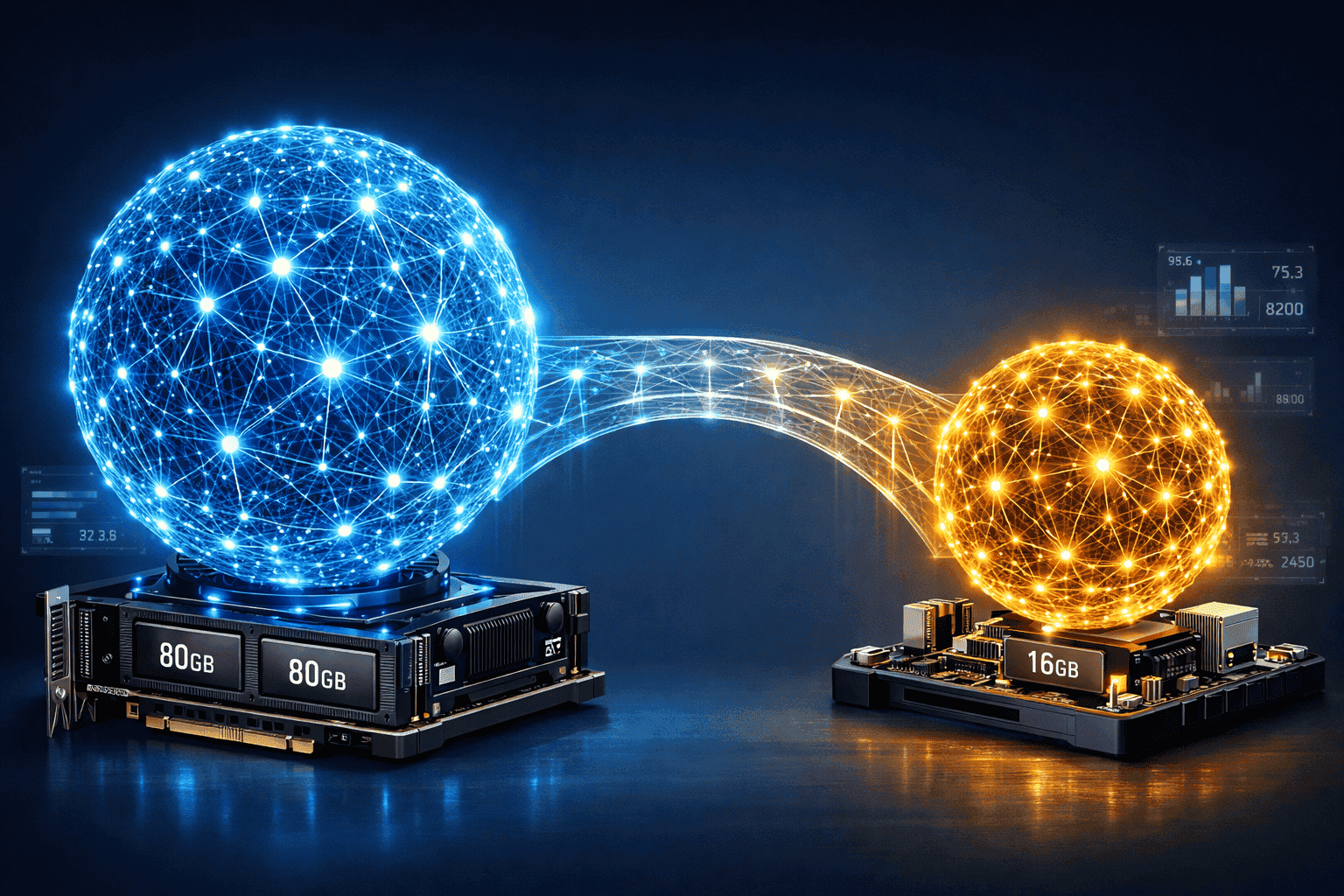

- Two-tier open-weight release: gpt-oss-120b runs on a single 80GB GPU with near o4-mini performance, while gpt-oss-20b operates on edge devices with just 16GB memory[3]

- Apache 2.0 licensing: Full commercial use rights for both model variants; fine-tuning, modification, and redistribution are permitted, subject to the license’s notice and attribution requirements

- Reasoning performance parity: gpt-oss-120b matches o4-mini on core benchmarks and exceeds o3-mini across MMLU, HLE, Codeforces, and TauBench evaluations[3]

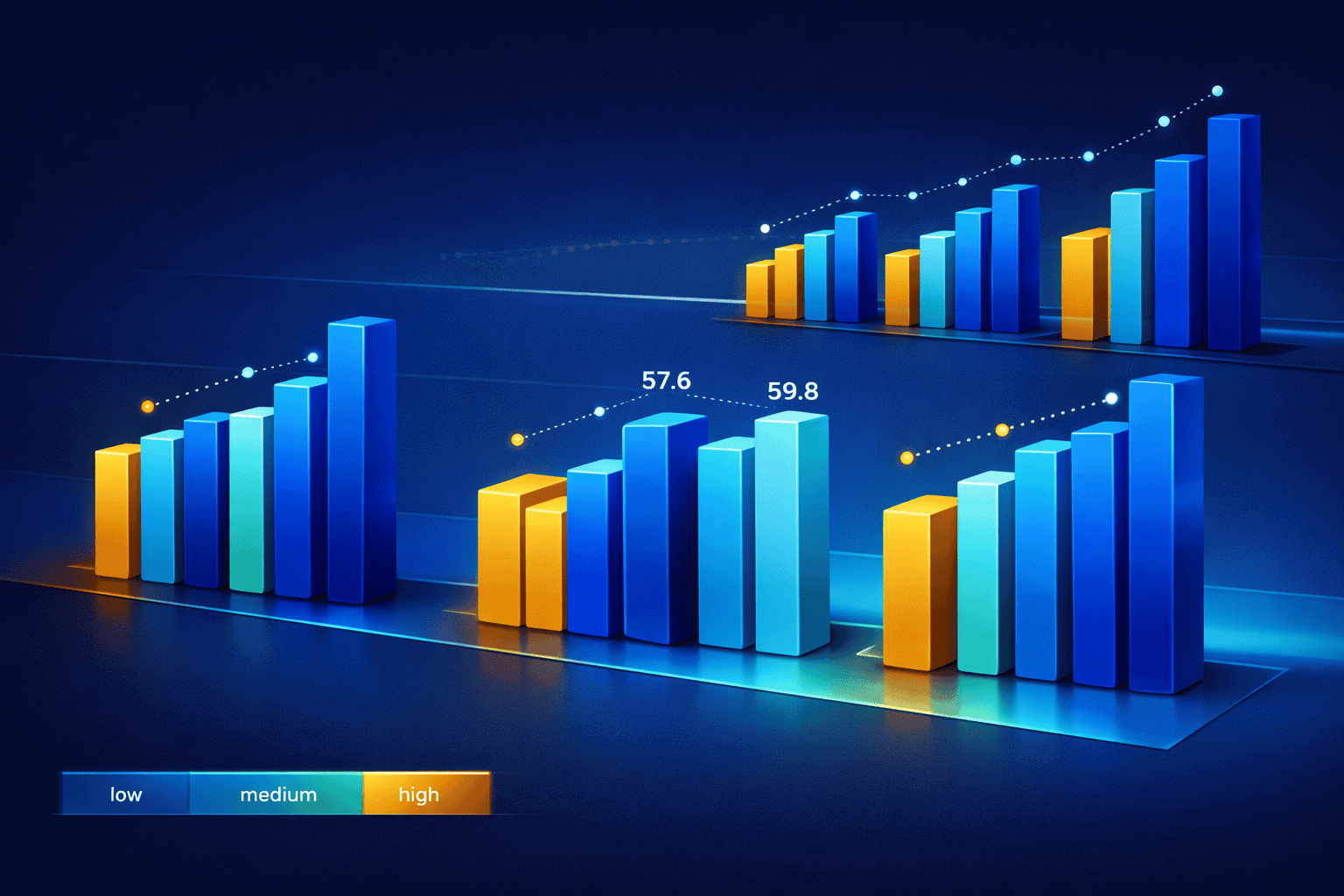

- Healthcare domain leadership: Scores 57.6 on HealthBench with high reasoning effort, approaching o3’s 59.8 and substantially outperforming GPT-4o’s 53.0[4]

- Three adjustable reasoning levels: Low, medium, and high effort modes toggle via system message, trading latency for deeper deliberation

- 128k context window: Both models support extended context, multi-step tool use, function calling, and structured outputs previously requiring multiple specialized models[4]

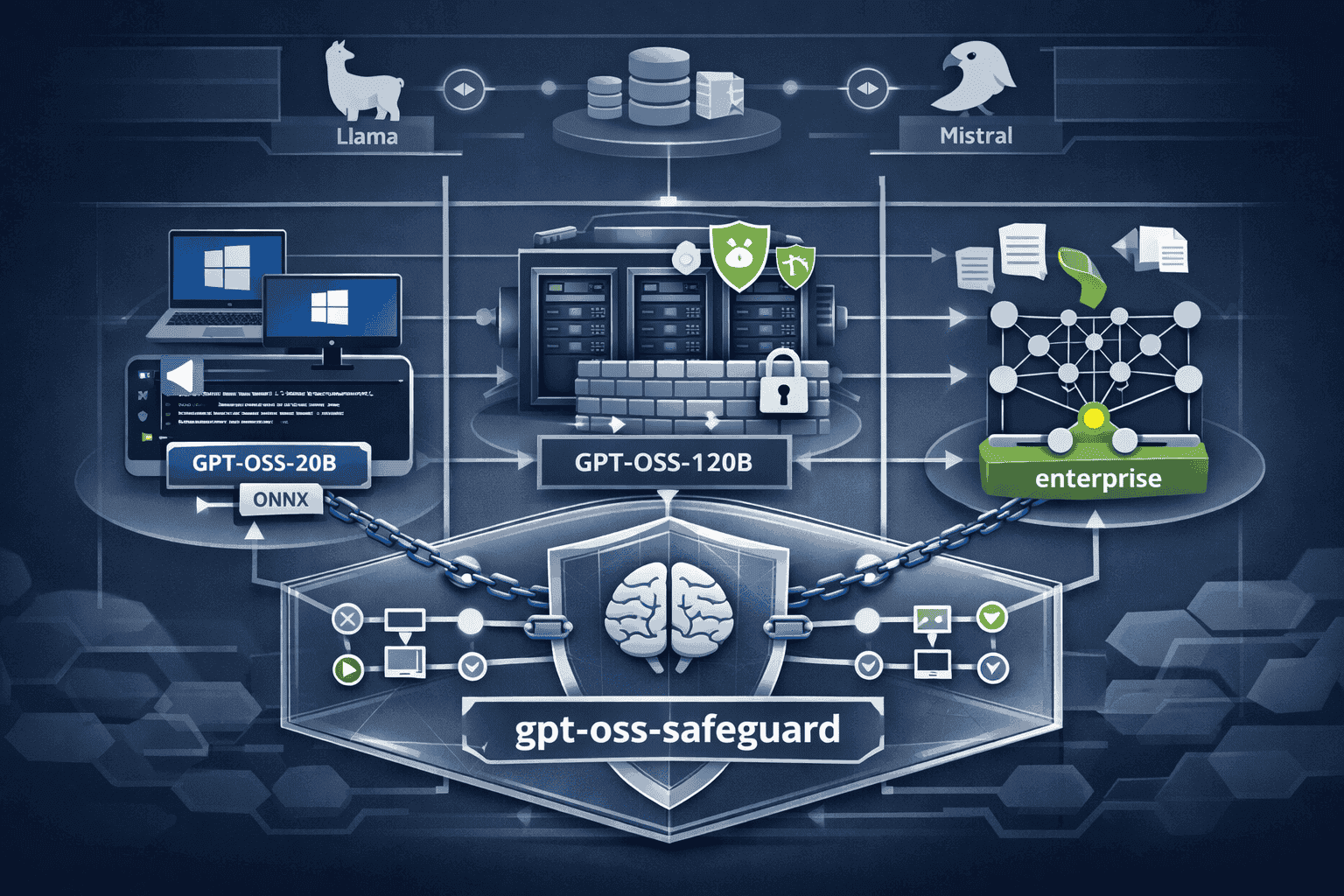

- Integrated safety framework: gpt-oss-safeguard models enable inference-time policy customization through chain-of-thought classification without retraining[4]

- Microsoft infrastructure integration: GPU-optimized gpt-oss-20b versions deployed to Windows devices via ONNX Runtime and VS Code AI Toolkit[3]

- Strategic positioning: Third prong of OpenAI’s 2026 roadmap alongside GPT-5 (developers) and GPT-5.2 (enterprise), targeting compliance-constrained organizations[1]

- Cost-effective scaling: At high inference volumes, local deployment avoids per-token API costs and data egress fees while maintaining competitive performance

Quick Answer

OpenAI’s gpt-oss-120b is an open-weight reasoning model that enterprises can deploy on a single 80GB GPU. It achieves near-parity with o4-mini across core benchmarks and allows full customization under Apache 2.0 licensing. The smaller gpt-oss-20b variant runs on edge devices with 16GB memory, providing local inference without cloud dependencies. Both models support 128k context, adjustable reasoning effort, and integrated safety tooling. That makes them viable alternatives to proprietary APIs for organizations with data residency, compliance, or fine-tuning requirements.

What Makes gpt-oss-120b Different from OpenAI’s Proprietary Models?

gpt-oss-120b provides downloadable model weights under Apache 2.0 licensing, allowing organizations to run inference on their own infrastructure rather than calling OpenAI’s API. This architectural difference enables data residency compliance, eliminates per-token costs, and permits fine-tuning on proprietary datasets without sending training data to external servers.

The performance gap between gpt-oss-120b and OpenAI’s proprietary o4-mini is minimal across standard reasoning benchmarks. gpt-oss-120b achieves near-parity results on four of them[3]:

- MMLU (general knowledge)
- HLE (Humanity’s Last Exam, expert-level questions)
- Codeforces (competition coding)
- TauBench (tool calling)

In healthcare-specific evaluations, gpt-oss-120b with high reasoning effort scores approximately 57.6, compared with o3’s 59.8 and well above GPT-4o’s 53.0[4].

Key deployment differences:

- Deployment model: Self-hosted on enterprise infrastructure vs. API-only access

- Licensing: Apache 2.0 with full commercial rights vs. proprietary terms of service

- Customization: Direct weight access for fine-tuning vs. prompt engineering plus whatever hosted fine-tuning the provider offers

- Cost structure: Upfront GPU infrastructure vs. per-token metered billing

- Data handling: Inference runs on infrastructure the organization controls vs. data transmitted to OpenAI servers

- Version control: Organizations control model updates vs. automatic version deprecation

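The hardware requirements remain accessible. gpt-oss-120b runs on a single 80GB GPU, such as an NVIDIA A100 or H100[3]. That makes it deployable on standard enterprise ML infrastructure without multi-GPU clusters.

It fits on one card because its expert weights ship in the 4-bit MXFP4 format. Those weights make up the bulk of its roughly 117 billion parameters. As a mixture-of-experts model, it also activates only about 5.1 billion parameters per token, which keeps inference fast.

Organizations already running open-source models like DeepSeek R1 or Qwen3 can adopt gpt-oss-120b on their existing hardware.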
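A rough weight-memory estimate shows why a model of this size fits on one 80GB card. This is a back-of-envelope sketch, not an official sizing tool, and it rests on three assumptions:

- About 114.7B of the parameters sit in the expert (MLP) layers and about 2.1B elsewhere. These are approximate figures; check the official model card.
- MXFP4 costs 4.25 bits per parameter: 4-bit values plus one 8-bit scale per 32-value block.
- Only weights are counted. KV cache and activations are ignored.

```python
# Rough weight-memory estimate for gpt-oss-120b (weights only).
# Parameter split below is approximate; check the official model card.
MOE_PARAMS = 114.7e9    # expert (MLP) weights, stored in MXFP4
OTHER_PARAMS = 2.1e9    # attention + embedding/unembedding weights, kept in BF16

# MXFP4: 4-bit values plus one shared 8-bit scale per block of 32 values.
MXFP4_BITS_PER_PARAM = 4 + 8 / 32  # 4.25 bits
BF16_BITS_PER_PARAM = 16


def weight_gb(num_params: float, bits_per_param: float) -> float:
    """Storage for num_params weights at the given precision, in decimal GB."""
    return num_params * bits_per_param / 8 / 1e9


def all_fp16_gb() -> float:
    """Hypothetical case: every weight stored at 16-bit precision."""
    return weight_gb(MOE_PARAMS + OTHER_PARAMS, BF16_BITS_PER_PARAM)


def mxfp4_experts_gb() -> float:
    """Expert weights in MXFP4, everything else in BF16."""
    return (weight_gb(MOE_PARAMS, MXFP4_BITS_PER_PARAM)
            + weight_gb(OTHER_PARAMS, BF16_BITS_PER_PARAM))


print(f"All weights at 16-bit:    ~{all_fp16_gb():.0f} GB")       # ~234 GB
print(f"Experts MXFP4, rest BF16: ~{mxfp4_experts_gb():.0f} GB")  # ~65 GB
```

Under these assumptions, roughly 15GB of an 80GB card remains for the KV cache and activations. Long 128k-token contexts and large batches therefore still need careful memory budgeting.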

Choose gpt-oss-120b over proprietary GPT-5 if:

- Data residency regulations prohibit cloud API usage

- Fine-tuning on proprietary domain data is required

- Per-token costs exceed self-hosting economics at scale

- Offline inference without internet connectivity is needed

- Full control over model versioning and deprecation is essential

How Does the Two-Tier gpt-oss Architecture Work?

OpenAI released two distinct model variants to serve different deployment scenarios. gpt-oss-120b targets data center and on-premise enterprise infrastructure, while gpt-oss-20b enables edge deployment on laptops, workstations, and embedded systems.

gpt-oss-120b specifications:

- Parameter count: About 117 billion total parameters (mixture-of-experts, about 5.1 billion active per token)

- Hardware requirement: Single 80GB GPU minimum

- Performance tier: Near-parity with o4-mini across reasoning benchmarks

- Use cases: Enterprise data centers, on-premise compliance environments, high-accuracy reasoning tasks

- Context window: 128k tokens

- Reasoning modes: Low, medium, high effort via system message configuration

gpt-oss-20b specifications:

- Parameter count: About 21 billion total parameters (mixture-of-experts, about 3.6 billion active per token)

- Hardware requirement: 16GB memory (CPU or GPU)

- Performance tier: Matches or exceeds o3-mini despite smaller size[3]

- Use cases: Edge devices, Windows laptops, offline environments, latency-sensitive applications

- Context window: 128k tokens

- Reasoning modes: Low, medium, high effort via system message configuration

The smaller gpt-oss-20b variant achieves competitive performance while activating only about 3.6 billion parameters per token. It even outperforms o3-mini on competition mathematics (AIME 2024 and 2025) and on health benchmarks, despite its small size[3]. This makes it suitable where hardware constraints or latency requirements prevent deploying the larger model.

Microsoft integrated gpt-oss-20b into Windows devices through ONNX Runtime, making it accessible via Foundry Local and the VS Code AI Toolkit[3]. Windows developers can run local inference without cloud connectivity, useful for sensitive code analysis or offline development environments.

Deployment decision framework:

| Scenario | Recommended Model | Reasoning |
|---|---|---|
| Data center with 80GB+ GPUs | gpt-oss-120b | Maximum performance, full reasoning capability |
| Edge devices, laptops | gpt-oss-20b | Runs on 16GB memory, competitive accuracy |
| Healthcare compliance | gpt-oss-120b | Higher HealthBench score of the two variants (57.6, vs o3’s 59.8)[4] |
| Offline development | gpt-oss-20b | Windows integration via ONNX Runtime[3] |
| Cost-sensitive scaling | gpt-oss-20b | Lower infrastructure costs, often sufficient for routine tasks |
| Maximum accuracy | gpt-oss-120b | Near o4-mini parity across benchmarks[3] |
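The decision table above can be expressed as a small rule function, for example inside a provisioning script. This is an illustrative sketch:

- The 80GB and 16GB thresholds come from the table.
- The function name and its `cost_sensitive` and `edge_device` flags are hypothetical.
- It only encodes the memory and cost rows. It does not cover compliance considerations.

```python
# Illustrative encoding of the decision table; thresholds come from the table,
# while the function name and flags are hypothetical.
GPT_OSS_120B_MIN_GB = 80
GPT_OSS_20B_MIN_GB = 16


def recommend_model(memory_gb: float, cost_sensitive: bool = False,
                    edge_device: bool = False) -> str:
    """Pick a gpt-oss variant from the memory on the largest single device."""
    if memory_gb < GPT_OSS_20B_MIN_GB:
        raise ValueError(
            f"{memory_gb}GB is below the {GPT_OSS_20B_MIN_GB}GB minimum for gpt-oss-20b"
        )
    if edge_device or cost_sensitive or memory_gb < GPT_OSS_120B_MIN_GB:
        return "gpt-oss-20b"
    # 80GB+ data-center GPU and accuracy is the priority.
    return "gpt-oss-120b"


print(recommend_model(80))                       # gpt-oss-120b
print(recommend_model(80, cost_sensitive=True))  # gpt-oss-20b
print(recommend_model(16, edge_device=True))     # gpt-oss-20b
```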

Both models support the same API surface: 128k context, function calling, structured outputs, and multi-step tool use. Organizations can develop applications against gpt-oss-20b for prototyping and testing. They can then deploy to gpt-oss-120b for production by changing only the model name, re-validating outputs on the larger model before cutover.

What Reasoning Performance Can Enterprises Expect from gpt-oss Models?

gpt-oss-120b delivers near-parity performance with OpenAI’s o4-mini across standard reasoning evaluations, while gpt-oss-20b matches or exceeds o3-mini despite its smaller parameter count. Both models demonstrate particular strength in healthcare, competition mathematics, and tool-calling benchmarks.

Core benchmark results for gpt-oss-120b:

- MMLU (general knowledge): Near-parity with o4-mini[3]

- HLE (Humanity’s Last Exam, expert-level questions): Near-parity with o4-mini[3]

- Codeforces (competition coding): Near-parity with o4-mini[3]

- TauBench (tool-calling): Near-parity with o4-mini[3]

- HealthBench (high effort): 57.6 vs o3’s 59.8, substantially above GPT-4o’s 53.0[4]

- AIME 2024 & 2025 (mathematics): Outperforms o4-mini[3]

The three reasoning effort modes allow organizations to trade latency for accuracy based on task requirements. Low effort provides fast responses for straightforward queries, medium effort balances speed and deliberation, and high effort enables deep reasoning for complex problems. This configuration happens via system message rather than model switching, simplifying deployment.
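Because effort is set in the system message, switching levels means changing one string. The sketch below builds an OpenAI-style chat payload that could be sent to a locally hosted server. It rests on these assumptions:

- It uses the `Reasoning: <level>` system-prompt convention associated with gpt-oss.
- The `GPT_OSS_MODEL` environment variable and the `build_request` helper are hypothetical.
- Some serving stacks also expose a dedicated reasoning-effort request field, so check your server's documentation.

```python
import json
import os

VALID_EFFORTS = ("low", "medium", "high")

# Hypothetical setting: prototype on gpt-oss-20b, promote to gpt-oss-120b by
# changing configuration rather than application code.
DEFAULT_MODEL = os.environ.get("GPT_OSS_MODEL", "openai/gpt-oss-20b")


def build_request(prompt: str, effort: str = "medium", model: str = DEFAULT_MODEL) -> dict:
    """OpenAI-style chat payload that sets reasoning effort in the system message."""
    if effort not in VALID_EFFORTS:
        raise ValueError(f"effort must be one of {VALID_EFFORTS}, got {effort!r}")
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": f"Reasoning: {effort}"},
            {"role": "user", "content": prompt},
        ],
    }


if __name__ == "__main__":
    # Low effort for a quick lookup, high effort for a multi-step analysis.
    print(json.dumps(build_request("List the ICD-10 chapters.", effort="low"), indent=2))
    print(json.dumps(build_request("Reconcile these two medication lists.", effort="high"), indent=2))
```

Reading the model name from configuration is one way to prototype on gpt-oss-20b and then promote the same application code to gpt-oss-120b.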

Healthcare emerges as a particular strength. With high reasoning effort enabled, gpt-oss-120b scores approximately 57.6 on HealthBench evaluations, approaching o3’s 59.8 and substantially exceeding GPT-4o’s 53.0[4]. This positions gpt-oss as viable for medical documentation, clinical decision support, and health data analysis where data residency requirements prevent cloud API usage.

Common performance misconceptions:

- “Open-weight models always lag proprietary versions” – gpt-oss-120b achieves near-parity with o4-mini, not degraded performance[3]

- “Smaller models can’t compete” – gpt-oss-20b outperforms o3-mini on mathematics and health benchmarks despite its small size[3]

- “Reasoning requires massive infrastructure” – gpt-oss-120b runs on a single 80GB GPU, not multi-node clusters[3]

- “Open models lack safety features” – gpt-oss-safeguard provides integrated policy enforcement[4]

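Organizations currently relying on frontier models such as Claude Opus 4.5 or GPT-5 can evaluate gpt-oss-120b as a self-hosted alternative when compliance or customization requirements prohibit proprietary API usage. They should benchmark it on their own tasks first: published results place it near o4-mini, not at the level of those frontier models.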

How Do Organizations Deploy and Fine-Tune gpt-oss for Enterprise Use?

Deploying gpt-oss models requires standard ML infrastructure and follows familiar open-source model workflows. Organizations download model weights from OpenAI’s distribution channels, load them using compatible inference frameworks, and configure reasoning effort via system messages.

Basic deployment steps:

- Provision hardware: Single 80GB GPU for gpt-oss-120b or 16GB memory for gpt-oss-20b

- Download model weights: Published on Hugging Face as openai/gpt-oss-120b and openai/gpt-oss-20b under the Apache 2.0 license[3]

- Select inference framework: Options include Hugging Face Transformers, vLLM, and Ollama, plus ONNX Runtime on Windows[3]

- Configure reasoning effort: Set low/medium/high via system message based on use case

- Integrate safety tooling: Deploy gpt-oss-safeguard for policy enforcement if needed[4]

- Test performance: Validate latency, throughput, and accuracy against requirements

- Scale infrastructure: Add GPU instances based on concurrent user load
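The final step, scaling by concurrent load, comes down to simple arithmetic: total token demand divided by usable per-GPU throughput. The sketch below makes these assumptions:

- The per-user and per-GPU throughput figures are hypothetical inputs, not published gpt-oss benchmarks. Measure them on your own serving stack, because batching, context length, and reasoning effort all change them.
- The 70% target utilization is an assumed headroom for traffic spikes.

```python
import math


def gpu_instances_needed(
    concurrent_users: int,
    tokens_per_sec_per_user: float,
    tokens_per_sec_per_gpu: float,
    target_utilization: float = 0.7,
) -> int:
    """Estimate GPU instances needed for a steady concurrent load.

    tokens_per_sec_per_gpu must be measured on your own serving stack;
    target_utilization leaves headroom for traffic spikes.
    """
    if not 0 < target_utilization <= 1:
        raise ValueError("target_utilization must be in (0, 1]")
    demand = concurrent_users * tokens_per_sec_per_user
    usable_per_gpu = tokens_per_sec_per_gpu * target_utilization
    return max(1, math.ceil(demand / usable_per_gpu))


# Hypothetical inputs: 200 concurrent users streaming ~20 tokens/s each,
# and a measured 2,000 tokens/s aggregate per 80GB GPU.
print(gpu_instances_needed(200, 20, 2000))  # 3
```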

Fine-tuning enables customization for domain-specific tasks, terminology, or output formats. Unlike proprietary APIs where fine-tuning requires uploading training data to OpenAI’s servers, gpt-oss allows organizations to fine-tune locally on proprietary datasets without data egress.

Fine-tuning workflow:

- Prepare training data: Format domain-specific examples (medical records, legal documents, technical specifications)

- Select fine-tuning approach: Full fine-tuning for maximum customization or LoRA/QLoRA for efficiency

- Configure training parameters: Learning rate, batch size, epochs based on dataset size and task complexity

- Run training job: Execute on enterprise GPU infrastructure without external data transmission

- Evaluate custom model: Test against domain-specific benchmarks and production scenarios

- Deploy fine-tuned weights: Replace base model with customized version in inference pipeline

- Version control: Maintain multiple fine-tuned variants for different use cases or departments
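The data-preparation step might look like the following sketch. It converts prompt/completion pairs into chat-style JSONL records and holds out a reproducible evaluation split. It rests on these assumptions:

- The `{"messages": [...]}` record layout follows the conversational format many fine-tuning libraries accept. Confirm the exact schema your trainer and the gpt-oss chat template expect.
- The helper names `to_chat_record` and `write_splits` are hypothetical.

```python
import json
import random
import tempfile
from pathlib import Path
from typing import Iterable, Optional, Tuple


def to_chat_record(prompt: str, completion: str, system: Optional[str] = None) -> dict:
    """Wrap one domain example as a conversational training record."""
    messages = [{"role": "system", "content": system}] if system else []
    messages += [
        {"role": "user", "content": prompt},
        {"role": "assistant", "content": completion},
    ]
    return {"messages": messages}


def write_splits(
    examples: Iterable[Tuple[str, str]],
    out_dir: str,
    eval_fraction: float = 0.1,
    seed: int = 0,
) -> dict:
    """Shuffle examples deterministically, hold out an eval split, write JSONL files."""
    records = [to_chat_record(p, c) for p, c in examples]
    random.Random(seed).shuffle(records)
    n_eval = max(1, int(len(records) * eval_fraction))
    splits = {"eval.jsonl": records[:n_eval], "train.jsonl": records[n_eval:]}
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    for name, rows in splits.items():
        with open(out / name, "w", encoding="utf-8") as f:
            for row in rows:
                f.write(json.dumps(row, ensure_ascii=False) + "\n")
    return {name: len(rows) for name, rows in splits.items()}


if __name__ == "__main__":
    demo = [(f"Expand abbreviation #{i}", f"Expansion #{i}") for i in range(20)]
    # Write to a temporary directory so the demo leaves nothing behind.
    with tempfile.TemporaryDirectory() as d:
        print(write_splits(demo, d))  # {'eval.jsonl': 2, 'train.jsonl': 18}
```

A fixed seed keeps the train/eval split reproducible across fine-tuning runs. That makes comparisons between fine-tuned variants meaningful.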

Microsoft’s ONNX Runtime integration simplifies Windows deployment. Developers access gpt-oss-20b through VS Code AI Toolkit and Foundry Local, enabling local inference for code completion, documentation generation, and development assistance without cloud dependencies[3].

Common deployment mistakes:

- Underprovisioning memory: gpt-oss-120b needs a GPU with the full 80GB; offloading part of the model to CPU memory sharply reduces throughput

- Ignoring reasoning effort configuration: Default settings may not match use case latency requirements

- Skipping safety integration: Deploy gpt-oss-safeguard before production to enforce content policies[4]

- Neglecting version control: Track model weights and fine-tuned variants to prevent configuration drift
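One lightweight way to catch configuration drift is to record a SHA-256 manifest of every weight file when a model version is approved. Before each deployment, the manifest is compared against what is actually on disk. This is a sketch of that idea, not a feature of gpt-oss tooling; the shard filename in the demo is hypothetical.

```python
import hashlib
import json
import tempfile
from pathlib import Path


def sha256_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in 1 MiB chunks so multi-GB weight shards never load fully into memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(model_dir: str) -> dict:
    """Map each file under model_dir (POSIX-style relative path) to its SHA-256."""
    root = Path(model_dir)
    return {
        p.relative_to(root).as_posix(): sha256_file(p)
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def diff_manifests(expected: dict, actual: dict) -> dict:
    """List files added, removed, or changed relative to a recorded manifest."""
    return {
        "added": sorted(actual.keys() - expected.keys()),
        "removed": sorted(expected.keys() - actual.keys()),
        "changed": sorted(k for k in expected.keys() & actual.keys()
                          if expected[k] != actual[k]),
    }


if __name__ == "__main__":
    # Demo with a fake weight shard in a temporary directory.
    with tempfile.TemporaryDirectory() as d:
        shard = Path(d) / "model-00001.safetensors"
        shard.write_bytes(b"weights v1")
        recorded = build_manifest(d)
        print(json.dumps(recorded, indent=2))
        shard.write_bytes(b"weights v2")  # simulate an untracked swap
        print(diff_manifests(recorded, build_manifest(d)))
```

Storing the manifest JSON alongside the deployment configuration gives each fine-tuned variant a verifiable identity.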

Organizations already operating open-source models like Llama 4 or Qwen3 can integrate gpt-oss using existing infrastructure and workflows.

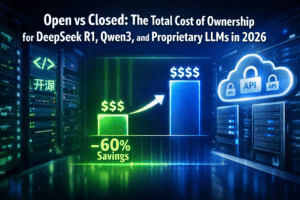

What Are the Cost Implications of Self-Hosting gpt-oss vs Using Proprietary APIs?

Total cost of ownership for gpt-oss depends on inference volume, infrastructure costs, and operational overhead. At high usage volumes, self-hosting typically costs less than metered API pricing, while low-volume scenarios favor pay-per-use APIs.

Cost components for self-hosted gpt-oss-120b:

- GPU infrastructure: $2-4 per hour for 80GB GPU instances (cloud) or capital expense for on-premise hardware

- Storage: The released gpt-oss-120b checkpoint is roughly 61 GiB because its expert weights ship in MXFP4; storing all ~117B parameters at FP16 would take about 234GB

- Networking: Data transfer costs for model download (one-time) and inference traffic (if cloud-hosted)

- Operations: DevOps time for deployment, monitoring, updates, and troubleshooting

- Fine-tuning: Additional GPU hours for training custom models on proprietary data

Cost components for proprietary API (GPT-5 or o4-mini):

- Per-token pricing: Input and output tokens charged separately

- Request overhead: System prompts and shared context are billed again as input tokens on every call

- Data egress: Transfer costs when sending documents or context to API

- No fine-tuning control: Limited customization without dedicated enterprise contracts

Break-even analysis example:

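Assume an organization processes 100 million tokens per month (roughly 75 million words or 3,000 documents), split evenly between input and output. The example uses API pricing of $0.03 per 1,000 input tokens and $0.06 per 1,000 output tokens. That is original GPT-4 list pricing; current o4-mini and GPT-5 list prices are substantially lower, which raises the break-even volume.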

- Monthly API cost: (50M input × $0.03/1K) + (50M output × $0.06/1K) = $1,500 + $3,000 = $4,500

- Monthly self-hosted cost: 1 GPU × $3/hour × 730 hours = $2,190 (plus operational overhead)

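At this volume, self-hosting saves approximately $2,300 per month ($4,500 minus $2,190) before operational costs. Under these exact assumptions, break-even falls near 49 million tokens per month: $2,190 divided by a blended $45 per million tokens. GPU pricing, API pricing, and labor costs all move that point, which is why the guidance below brackets a range of roughly 20-50 million tokens per month.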
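The worked example above can be reproduced, and rerun with your own prices, using a small calculator. It makes these assumptions:

- It uses the example's prices and 50/50 input/output split.
- The GPU runs always-on for 730 hours per month.
- It leaves out operational overhead, so a real break-even volume will be higher.
- The function names are hypothetical.

```python
def monthly_api_cost(input_tokens: float, output_tokens: float,
                     usd_per_1k_input: float, usd_per_1k_output: float) -> float:
    """Metered API spend for one month."""
    return (input_tokens / 1000 * usd_per_1k_input
            + output_tokens / 1000 * usd_per_1k_output)


def monthly_gpu_cost(gpus: int, usd_per_gpu_hour: float, hours: float = 730) -> float:
    """Always-on GPU rental for one month, excluding staff time."""
    return gpus * usd_per_gpu_hour * hours


def break_even_tokens(fixed_monthly_cost: float, blended_usd_per_1k: float) -> float:
    """Monthly tokens at which API spend equals a fixed self-hosting bill."""
    return fixed_monthly_cost / blended_usd_per_1k * 1000


# The worked example: 50M input + 50M output tokens per month.
api = monthly_api_cost(50e6, 50e6, usd_per_1k_input=0.03, usd_per_1k_output=0.06)
gpu = monthly_gpu_cost(gpus=1, usd_per_gpu_hour=3.0)
blended = (0.03 + 0.06) / 2  # per-1K price for a 50/50 input/output mix

print(f"API ${api:,.0f} vs self-hosted ${gpu:,.0f}; savings ${api - gpu:,.0f}")
print(f"Break-even ~{break_even_tokens(gpu, blended) / 1e6:.1f}M tokens/month")
```

With these inputs, the script prints `API $4,500 vs self-hosted $2,190; savings $2,310` and `Break-even ~48.7M tokens/month`.

Two adjustments move the break-even volume upward in proportion. The first is plugging in lower current per-token prices. The second is adding staff time to the fixed monthly cost.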

Choose self-hosted gpt-oss if:

- Monthly inference volume exceeds 50 million tokens

- Data residency requirements prevent cloud API usage regardless of cost

- Fine-tuning on proprietary data is required

- Predictable infrastructure costs are preferred over variable metered billing

- Offline inference capability justifies infrastructure investment

Choose proprietary API if:

- Monthly inference volume is under 20 million tokens

- No compliance restrictions prevent cloud usage

- Operational overhead of self-hosting exceeds API cost savings

- Automatic model updates and deprecation management are valued

- Development speed matters more than long-term cost optimization

Organizations can compare costs across multiple deployment options using platforms like MULTIBLY, which provides access to 300+ AI models including both proprietary and open-source options for side-by-side evaluation.

How Does gpt-oss Compare to Competing Open Models Like DeepSeek, Qwen3, and Llama?

OpenAI’s gpt-oss-120b enters a competitive open-source landscape where models like DeepSeek R1, Alibaba’s Qwen3, and Meta’s Llama 4 already offer strong reasoning capabilities. Each model brings different strengths in performance, efficiency, and specialized domains.

Competitive positioning:

| Model | Parameter Count | Key Strength | Hardware Requirement | Licensing |
|---|---|---|---|---|
| gpt-oss-120b | 117B total, 5.1B active (MoE) | Healthcare reasoning, o4-mini parity | Single 80GB GPU | Apache 2.0 |
| gpt-oss-20b | 21B total, 3.6B active (MoE) | Edge efficiency, Windows integration | 16GB memory | Apache 2.0 |
| DeepSeek R1 | 671B total, 37B active (MoE) | Cost-effective reasoning, Chinese language | Multi-GPU | MIT |
| Qwen3-Coder-Next | 80B total, 3B active | Code generation, multilingual | Single GPU | Apache 2.0 |
| Llama 4 | Scout: 109B total, 17B active; Maverick: 400B total, 17B active (MoE) | Long context (10M tokens on Scout), multimodal | Single H100 (Scout, Int4) to multi-GPU (Maverick) | Llama 4 Community License |

gpt-oss-120b differentiates through healthcare performance and integrated safety tooling. The 57.6 HealthBench score approaches proprietary o3 levels[4], making it particularly suitable for medical applications where competitors have less specialized performance data.

DeepSeek R1 is positioned for cost-effective general reasoning through its mixture-of-experts architecture, which activates only 37B of its 671B parameters per token. gpt-oss-120b uses the same technique at smaller scale (about 5.1B active parameters), so real cost differences are best measured on your own hardware and workloads. Organizations prioritizing cost-effective reasoning at scale may prefer DeepSeek, while those requiring healthcare specialization favor gpt-oss.

Qwen3 excels in multilingual scenarios and code generation, particularly for non-English languages where OpenAI models historically underperform. Qwen3’s multilingual capabilities make it preferable for global enterprises operating in Chinese, Arabic, or other non-Western languages.

Llama 4 Scout’s 10M token context window enables document processing use cases that gpt-oss’s 128k context cannot address. Organizations working with ultra-long documents or extensive code repositories may need that extended context, while gpt-oss suits standard reasoning tasks within 128k token limits.

Selection criteria:

- Healthcare/medical: gpt-oss-120b (57.6 HealthBench score)[4]

- Cost-effective reasoning: DeepSeek R1 (MoE efficiency)

- Multilingual/code: Qwen3-Coder-Next (non-English strength)

- Long context: Llama 4 Scout (10M token window)

- Edge deployment: gpt-oss-20b (16GB memory requirement)[3]

- Windows integration: gpt-oss-20b (ONNX Runtime support)[3]

Organizations can evaluate multiple models side-by-side using MULTIBLY’s comparison platform, testing the same prompts across gpt-oss, DeepSeek, Qwen3, and Llama to determine which performs best for specific use cases.

What Safety and Compliance Features Does gpt-oss Provide for Enterprise Deployment?

OpenAI released gpt-oss-safeguard models as companions to the main gpt-oss weights to address enterprise safety and compliance requirements. These safeguard models use chain-of-thought reasoning to classify content against developer-provided policies. Policies can be customized at inference time without retraining the base model.

gpt-oss-safeguard capabilities:

- Policy-based classification: Evaluate inputs and outputs against custom content policies

- Chain-of-thought reasoning: Transparent decision process for why content violates or passes policies

- Inference-time configuration: Update policies without model retraining or fine-tuning

- No data transmission: All safety evaluation runs on the organization’s own infrastructure alongside inference

- Developer control: Organizations define policies rather than relying on OpenAI’s default safety filters

The safeguard models were released separately, in October 2025[4]. Organizations can therefore integrate safety tooling into existing workflows independently of when they adopt the reasoning models.

Common enterprise compliance scenarios:

- Healthcare data protection: Classify outputs to prevent PHI (Protected Health Information) leakage

- Financial regulation: Detect potential disclosure of material non-public information

- Legal privilege: Identify attorney-client privileged content in document processing

- Industry-specific terminology: Flag outputs containing restricted technical specifications or trade secrets

- Geographic compliance: Apply different content policies based on deployment region (GDPR, CCPA, etc.)

Unlike proprietary APIs where safety filtering happens server-side using OpenAI’s policies, gpt-oss-safeguard allows organizations to implement custom rules. A healthcare organization might permit medical terminology that OpenAI’s default filters would flag, while a financial institution might enforce stricter disclosure controls than general-purpose safety models provide.

Integration workflow:

- Define content policies: Document prohibited content categories and boundary cases

- Configure safeguard model: Provide policy definitions to gpt-oss-safeguard

- Implement filtering pipeline: Route inputs through safeguard before inference, outputs after generation

- Review chain-of-thought: Audit safety decisions to validate policy enforcement

- Update policies: Modify rules based on production experience without model retraining

- Monitor compliance: Track safety filter activation rates and false positive/negative patterns
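The routing step in this workflow can be sketched as a wrapper. It classifies the input before generation and the draft output after it. It rests on these assumptions:

- `classify` and `generate` are injected callables standing in for calls to locally hosted gpt-oss-safeguard and gpt-oss models.
- The message layout (policy as the system message, content as the user message) is an assumption.
- All function names are hypothetical.
- A real deployment would parse the safeguard model's response according to the output format your policy specifies, rather than receive a boolean directly.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional

# classify(policy, content) -> True if content violates the policy.
# In production this would wrap a call to a locally hosted gpt-oss-safeguard model.
Classifier = Callable[[str, str], bool]
Generator = Callable[[str], str]


def build_safeguard_messages(policy: str, content: str) -> List[dict]:
    """Assumed request layout: policy as system message, content as user message."""
    return [
        {"role": "system", "content": policy},
        {"role": "user", "content": content},
    ]


@dataclass
class GuardedResult:
    text: str
    blocked_at: Optional[str]  # "input", "output", or None if allowed


def guarded_generate(prompt: str, policy: str, classify: Classifier,
                     generate: Generator,
                     refusal: str = "Blocked by content policy.") -> GuardedResult:
    """Check the prompt before inference and the draft answer after generation."""
    if classify(policy, prompt):
        return GuardedResult(refusal, "input")
    draft = generate(prompt)
    if classify(policy, draft):
        return GuardedResult(refusal, "output")
    return GuardedResult(draft, None)


if __name__ == "__main__":
    policy = "Flag any text containing a medical record number (MRN)."
    keyword_stub = lambda pol, text: "MRN" in text  # stand-in for the safeguard model
    echo_model = lambda p: f"Draft answer to: {p}"   # stand-in for gpt-oss
    print(guarded_generate("Summarize the visit for MRN 12345", policy, keyword_stub, echo_model))
    print(guarded_generate("Explain HbA1c targets", policy, keyword_stub, echo_model))
```

Recording `blocked_at` for every request also supplies the activation-rate data that the monitoring step calls for.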

Organizations operating in regulated industries should deploy gpt-oss-safeguard before production use, even if initial testing suggests low safety risk. Compliance requirements often mandate documented safety controls regardless of empirical violation rates.

How Does OpenAI’s 2026 Strategy Position gpt-oss Against Proprietary Offerings?

OpenAI’s 2026 roadmap includes three distinct model tiers: GPT-5 for developers, GPT-5.2 for enterprise contracts, and gpt-oss for organizations requiring open-weight deployment. This strategy addresses different customer segments while preventing defection to competitors like Meta, Mistral, or Alibaba.

Three-tier positioning:

- GPT-5: API-first for developers, optimized for speed and general-purpose tasks

- GPT-5.2: Enterprise contracts with dedicated capacity, custom fine-tuning, and support

- gpt-oss: Open-weight models for data residency, compliance, and self-hosting requirements[1]

The gpt-oss release targets organizations that might otherwise adopt Llama, Mistral, or DeepSeek due to compliance constraints. By offering competitive open-weight models, OpenAI retains these customers within its ecosystem rather than losing them entirely to alternatives. Organizations can start with gpt-oss for compliance-constrained workloads while using proprietary GPT-5 APIs for other applications.

This mirrors strategies from other AI providers. Mistral offers API access alongside open-weight models such as Magistral Small, while its larger Magistral Medium reasoning model targets enterprise use. Google provides both Gemini API access and open Gemma models. The hybrid approach acknowledges that no single deployment model satisfies all enterprise requirements.

Complementary proprietary offerings:

OpenAI launched ChatGPT Health in January 2026, a proprietary health-focused experience integrating medical records and wellness apps[4]. This complements gpt-oss-120b’s strong HealthBench performance by providing a consumer-facing health AI product while offering enterprises the option to deploy gpt-oss locally for patient data processing.

The strategy spares organizations from choosing strictly between open-weight and proprietary models. Instead, enterprises can use gpt-oss for regulated workloads requiring local deployment. They can still access proprietary GPT-5 APIs for general-purpose applications where cloud usage is permitted.

Competitive response context:

Competition in open reasoning models has kept intensifying. By early February 2026, Alibaba’s Qwen3-Coder-Next (80B total, 3B active parameters) and DeepSeek V3.2 had both launched[4]. OpenAI’s entry with gpt-oss-120b establishes a presence in this segment rather than ceding it entirely to Chinese AI labs.

Organizations evaluating the open vs closed model decision can now include OpenAI in the open-source comparison rather than treating it as proprietary-only.

What Are the Practical Next Steps for Enterprises Considering gpt-oss Deployment?

Organizations should start with small-scale evaluation before committing to full production deployment. This approach validates performance, cost assumptions, and operational requirements without large upfront investment.

Evaluation roadmap:

Phase 1: Technical validation (2-4 weeks)

- Provision single 80GB GPU instance (cloud or on-premise)

- Download gpt-oss-120b weights and deploy using standard inference framework

- Test reasoning performance on representative production queries

- Measure latency, throughput, and accuracy against requirements

- Compare results to proprietary API baseline (GPT-5, Claude, etc.)

- Evaluate gpt-oss-20b on edge devices if relevant to use case
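A small harness keeps the Phase 1 measurements consistent: the same prompts, checks, and latency statistics for both the gpt-oss deployment and the proprietary baseline. In this sketch:

- `model` is any callable that takes a prompt and returns text. In practice it would wrap a request to your local gpt-oss server or to the API you are comparing against.
- The stub model and test cases are hypothetical placeholders for real production queries.

```python
import math
import time
from typing import Callable, Iterable, List, Tuple

# A test case pairs a real production prompt with a check on the answer.
Case = Tuple[str, Callable[[str], bool]]


def percentile(values: List[float], pct: float) -> float:
    """Nearest-rank percentile, pct in (0, 100]."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def evaluate(model: Callable[[str], str], cases: Iterable[Case]) -> dict:
    """Run every case through model, timing each call and scoring the answer."""
    latencies, passed = [], 0
    for prompt, check in cases:
        start = time.perf_counter()
        answer = model(prompt)
        latencies.append(time.perf_counter() - start)
        passed += bool(check(answer))
    if not latencies:
        raise ValueError("no test cases supplied")
    return {
        "n": len(latencies),
        "accuracy": passed / len(latencies),
        "p50_s": percentile(latencies, 50),
        "p95_s": percentile(latencies, 95),
    }


if __name__ == "__main__":
    cases = [
        ("What is 2 + 2?", lambda a: "4" in a),
        ("Capital of France?", lambda a: "Paris" in a),
    ]
    stub_model = lambda prompt: "4" if "2 + 2" in prompt else "Lyon"  # stand-in
    print(evaluate(stub_model, cases))  # accuracy 0.5 with this stub
```

Running the same `cases` list against each candidate, at matched reasoning-effort settings, yields directly comparable accuracy and latency numbers.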

Phase 2: Cost analysis (1-2 weeks)

- Calculate current monthly API costs across all AI workloads

- Estimate self-hosting infrastructure costs (GPU, storage, networking, operations)

- Project break-even volume based on usage patterns

- Account for fine-tuning costs if custom models are required

- Compare total cost of ownership over 12-24 month horizon
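For on-premise hardware, the 12-24 month comparison reduces to finding the month where cumulative self-hosting cost drops below cumulative API spend. This sketch makes these assumptions:

- Self-hosting cost is one-time capital expense plus a flat monthly running cost.
- The API bill is flat month to month.
- The dollar figures are hypothetical; the article does not provide hardware prices.

```python
from typing import Optional


def crossover_month(capex: float, selfhost_monthly: float, api_monthly: float,
                    horizon_months: int = 24) -> Optional[int]:
    """First month where cumulative self-hosting cost <= cumulative API cost.

    capex: one-time hardware purchase; selfhost_monthly: recurring power,
    hosting, and staff time; api_monthly: metered API spend at current volume.
    Returns None if self-hosting never catches up within the horizon.
    """
    for month in range(1, horizon_months + 1):
        if capex + selfhost_monthly * month <= api_monthly * month:
            return month
    return None


# Hypothetical figures: $40,000 server, $1,500/month to run it, $4,500/month API bill.
print(crossover_month(40_000, 1_500, 4_500))                     # 14
print(crossover_month(40_000, 1_500, 4_500, horizon_months=12))  # None
```

If usage is growing, replacing the flat `api_monthly` with a per-month projection is a straightforward extension.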

Phase 3: Compliance validation (2-3 weeks)

- Review data residency and regulatory requirements with legal/compliance teams

- Deploy gpt-oss-safeguard and configure enterprise content policies

- Test safety filtering against representative content samples

- Document compliance controls for audit purposes

- Validate that self-hosted deployment satisfies regulatory constraints

Phase 4: Fine-tuning pilot (3-6 weeks)

- Prepare domain-specific training dataset (1,000-10,000 examples)

- Execute fine-tuning job on gpt-oss-120b base weights

- Evaluate custom model against base model and proprietary alternatives

- Measure improvement in domain-specific accuracy or output quality

- Assess whether fine-tuning justifies operational complexity

Phase 5: Production deployment (4-8 weeks)

- Scale infrastructure to handle production load (multiple GPU instances)

- Implement monitoring, logging, and alerting for inference pipeline

- Deploy fine-tuned models if pilot demonstrated value

- Integrate gpt-oss-safeguard into production workflow

- Establish operational runbooks for troubleshooting and updates

- Train internal teams on model management and version control

Common evaluation mistakes:

- Testing only on synthetic benchmarks: Use real production queries, not academic datasets

- Ignoring operational overhead: Account for DevOps time, not just infrastructure costs

- Skipping compliance review: Involve legal/compliance teams early, not after technical validation

- Underestimating fine-tuning effort: Custom models require significant data preparation and experimentation

- Mismatched reasoning effort levels: Compare gpt-oss in high-effort mode against proprietary models at equivalent effort settings

Organizations can accelerate evaluation by using MULTIBLY’s platform to compare gpt-oss against 300+ other models side-by-side, identifying which performs best for specific use cases before committing to infrastructure investment.

Decision framework:

Deploy gpt-oss if:

- Data residency or compliance requirements prohibit cloud API usage

- Monthly inference volume exceeds 50 million tokens

- Fine-tuning on proprietary data is required

- Healthcare/medical reasoning is primary use case

- Offline inference capability is needed

Continue with proprietary APIs if:

- Monthly inference volume is under 20 million tokens

- No compliance restrictions prevent cloud usage

- Operational simplicity outweighs cost optimization

- Automatic model updates are valued

- Development speed matters more than long-term cost

Frequently Asked Questions

What is the difference between gpt-oss-120b and gpt-oss-20b?

gpt-oss-120b has about 117 billion total parameters (roughly 5.1 billion active per token) and runs on a single 80GB GPU. It delivers near-parity performance with o4-mini across reasoning benchmarks. gpt-oss-20b has about 21 billion total parameters (roughly 3.6 billion active) and runs on edge devices with just 16GB memory, matching or exceeding o3-mini despite its smaller size[3].

Can I use gpt-oss models commercially?

Yes. Both gpt-oss-120b and gpt-oss-20b are released under Apache 2.0 licensing, which permits commercial use, modification, distribution, and fine-tuning, subject to the license’s notice and attribution requirements[3].

How much does it cost to run gpt-oss-120b?

Cloud GPU instances with 80GB memory typically cost $2-4 per hour. At continuous operation (730 hours per month), this ranges from $1,460 to $2,920 monthly before accounting for storage, networking, and operational overhead. On-premise hardware requires upfront capital expense but eliminates recurring cloud costs.

What hardware do I need to run gpt-oss models?

gpt-oss-120b requires a single GPU with at least 80GB memory (such as NVIDIA A100 or H100). gpt-oss-20b runs on devices with 16GB memory, including laptops and workstations with either CPU or GPU inference[3].

How does gpt-oss-120b compare to DeepSeek R1?

gpt-oss-120b achieves near-parity with OpenAI’s o4-mini and excels in healthcare reasoning (57.6 HealthBench score)[4]. DeepSeek R1 is a much larger mixture-of-experts model (671B total parameters, 37B active per token) positioned for cost-effective general reasoning, with stronger Chinese language performance. Choose gpt-oss for healthcare applications and DeepSeek for cost-sensitive multilingual reasoning.

Can I fine-tune gpt-oss on my own data?

Yes. Apache 2.0 licensing permits fine-tuning on proprietary datasets. Organizations can customize gpt-oss models for domain-specific terminology, output formats, or specialized reasoning without sending training data to external servers.

What is gpt-oss-safeguard and do I need it?

gpt-oss-safeguard provides chain-of-thought content classification against custom policies, enabling inference-time safety controls without model retraining[4]. Organizations operating in regulated industries (healthcare, finance, legal) should deploy safeguard models before production use to enforce compliance policies.

How long is the context window for gpt-oss models?

Both gpt-oss-120b and gpt-oss-20b support 128k token context windows. That is sufficient for most enterprise applications but shorter than models like Llama 4 Scout (10M tokens) or Kimi K2 (256k tokens)[4].

What are the three reasoning effort modes?

gpt-oss models support low, medium, and high reasoning effort levels configured via system message. Low effort provides fast responses, medium balances speed and deliberation, and high effort enables deep reasoning for complex problems. This allows organizations to trade latency for accuracy based on task requirements[4].

Can gpt-oss run offline without internet connectivity?

Yes. Once model weights are downloaded, gpt-oss operates entirely on local infrastructure without requiring internet access. This makes it suitable for air-gapped environments, offline development, and scenarios where connectivity is unreliable.
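For deployments that load weights through the Hugging Face libraries, one way to ensure nothing attempts a network call is to enable their offline switches and point at a local directory. The sketch below sets the `HF_HUB_OFFLINE` and `TRANSFORMERS_OFFLINE` environment variables. To be safe, run it before importing those libraries so the setting is picked up. The weights path in the usage comment is hypothetical, and other serving stacks have their own offline settings.

```python
import os
from pathlib import Path


def enable_offline_mode(weights_dir: str) -> Path:
    """Verify local weights exist, then forbid Hugging Face Hub network access."""
    path = Path(weights_dir)
    if not path.is_dir():
        raise FileNotFoundError(
            f"weights not found at {path}; download them before going offline"
        )
    os.environ["HF_HUB_OFFLINE"] = "1"
    os.environ["TRANSFORMERS_OFFLINE"] = "1"
    return path


# Usage (hypothetical path), before importing transformers:
#   weights = enable_offline_mode("/models/gpt-oss-20b")
#   then load with from_pretrained(weights, local_files_only=True)
```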

How does gpt-oss integrate with Windows devices?

Microsoft provides GPU-optimized versions of gpt-oss-20b deployed via ONNX Runtime, accessible through Foundry Local and the VS Code AI Toolkit[3]. Windows developers can run local inference for code completion, documentation generation, and development assistance.

When should I choose gpt-oss over GPT-5 API?

Choose gpt-oss when data residency requirements prohibit cloud API usage, when inference volume is high enough that fixed infrastructure costs undercut per-token API charges (roughly 50 million tokens per month as a rule of thumb, though the actual break-even point depends on API pricing, GPU utilization, and operational overhead), when fine-tuning on proprietary data is required, or when offline inference is needed. Choose the GPT-5 API for lower volumes, faster development cycles, or when operational simplicity outweighs cost optimization.
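The volume threshold follows from dividing a fixed monthly infrastructure bill by the API's price per token. The sketch below computes that break-even volume. The $1,460 and $2,920 infrastructure figures come from the GPU cost range discussed earlier. The $5, $10, and $30 per-million-token API prices are hypothetical blended rates, not published prices. The calculation also assumes one GPU can serve the whole volume and ignores staffing costs.

```python
def break_even_tokens(monthly_infra_cost: float, api_price_per_million: float) -> float:
    """Monthly token volume at which a fixed self-hosting bill equals pay-per-use API spend."""
    if api_price_per_million <= 0:
        raise ValueError("API price must be positive")
    return monthly_infra_cost / api_price_per_million * 1_000_000


# Infra costs from the $2-4/hour range; API prices are hypothetical blended rates.
for infra in (1_460, 2_920):
    for price in (5.0, 10.0, 30.0):
        tokens_m = break_even_tokens(infra, price) / 1_000_000
        print(f"infra ${infra:,}/mo, API ${price:.0f}/M tokens -> break-even ~{tokens_m:,.0f}M tokens/mo")
```

With these hypothetical inputs, the break-even point ranges from about 49 million to 584 million tokens per month. This spread is why the 50-million-token figure should be treated as a starting point for your own numbers rather than a fixed rule.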

Conclusion

OpenAI’s gpt-oss Revolution: Deploying 120B Open Reasoning Models for Enterprise Customization fundamentally changes the open-source AI landscape by bringing GPT-class reasoning performance to self-hosted infrastructure. The gpt-oss-120b model delivers near-parity with o4-mini across core benchmarks while running on a single 80GB GPU, making advanced reasoning accessible to organizations with data residency, compliance, or customization requirements that proprietary APIs cannot satisfy[3].

The two-tier architecture addresses different deployment scenarios. gpt-oss-120b targets enterprise data centers with strong healthcare reasoning performance (a 57.6 HealthBench score, approaching o3’s 59.8)[4], while gpt-oss-20b enables edge deployment on devices with just 16GB of memory, matching or exceeding o3-mini despite its smaller size[3]. Both models support 128k context, adjustable reasoning effort, and integrated safety tooling through gpt-oss-safeguard.

For enterprises, the decision between self-hosted gpt-oss and proprietary APIs depends on inference volume, compliance constraints, and operational capabilities. Organizations with high, sustained inference volumes (roughly 50 million tokens or more per month as a rule of thumb) typically find self-hosting more cost-effective, while lower-volume scenarios favor pay-per-use APIs; the exact break-even point depends on API pricing and GPU utilization. The Apache 2.0 licensing enables fine-tuning on proprietary data without sending training examples to external servers, addressing a key limitation of API-only models.

The competitive landscape includes strong alternatives like DeepSeek R1 for cost-effective reasoning, Qwen3 for multilingual capabilities, and Llama 4 for long-context applications. Organizations should evaluate multiple models against specific use cases rather than assuming one model satisfies all requirements.

Actionable next steps:

- Evaluate technical fit: Deploy gpt-oss-120b on a single 80GB GPU and test it against representative production queries to validate performance

- Calculate economics: Compare current API costs to self-hosting infrastructure expenses at projected usage volumes

- Assess compliance: Review data residency and regulatory requirements with legal teams to determine if self-hosting is required

- Test safety controls: Deploy gpt-oss-safeguard and configure enterprise content policies before production use

- Compare alternatives: Use platforms like MULTIBLY to evaluate gpt-oss alongside DeepSeek, Qwen3, Llama, and proprietary models

- Plan fine-tuning: If custom models are required, prepare domain-specific training datasets and budget GPU time for experimentation

Organizations that successfully deploy gpt-oss gain control over model versioning, replace per-token charges with predictable infrastructure costs at scale, and can fine-tune on proprietary data while maintaining reasoning performance competitive with proprietary alternatives. The open-weight release positions OpenAI within the open-source ecosystem while preserving differentiation through integrated safety tooling and healthcare-specific optimization.