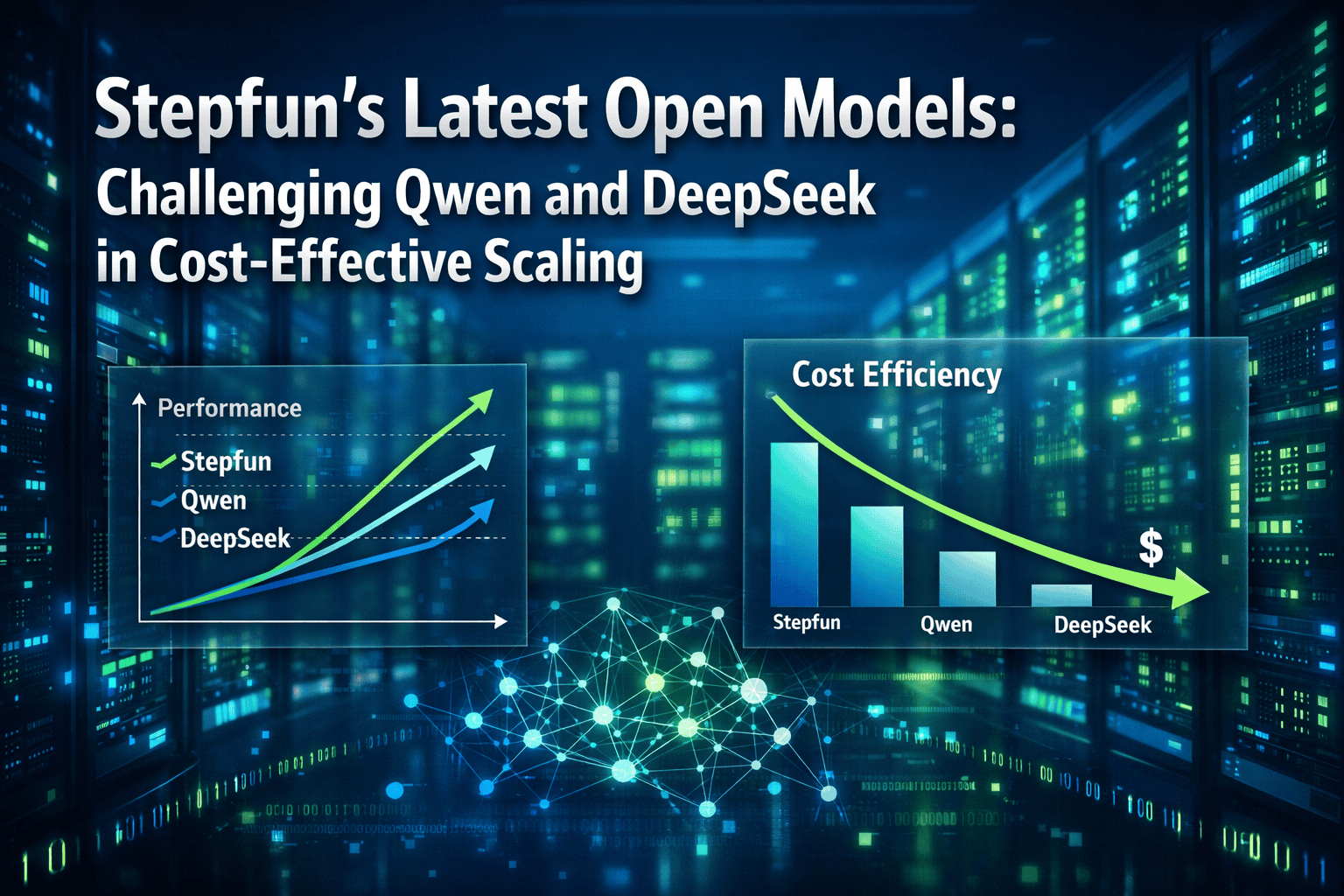

Stepfun’s latest open models are shaking up the Chinese AI landscape by delivering competitive performance at significantly lower operational costs than established players like Qwen and DeepSeek. The company’s Step-3.5 Flash and Step-4 releases focus specifically on high-throughput enterprise workloads, where inference efficiency matters more than raw benchmark dominance.

For teams evaluating open-source alternatives to proprietary models, Stepfun represents a third path—one that prioritizes practical deployment economics over headline-grabbing parameter counts. This matters because the real cost of AI isn’t training; it’s the millions of daily inference calls that determine whether a model fits your budget.

- Key Takeaways

- Quick Answer

- What Makes Stepfun's Latest Open Models Different from Qwen and DeepSeek?

- How Do Stepfun's Latest Open Models Perform Against Qwen and DeepSeek in Benchmarks?

- What Are the Cost Advantages of Stepfun's Latest Open Models in Production?

- How Does Stepfun's Architecture Enable Cost-Effective Scaling?

- What Enterprise Use Cases Best Suit Stepfun's Latest Open Models?

- How to Deploy and Integrate Stepfun's Latest Open Models in Your Infrastructure

- What Are the Limitations and Tradeoffs of Choosing Stepfun Over Qwen or DeepSeek?

- How Does Stepfun's Roadmap and Future Development Compare to Competitors?

- Frequently Asked Questions

- Conclusion

- References

Key Takeaways

- Stepfun’s Step-3.5 Flash delivers 30-40% lower cost-per-token than comparable Qwen and DeepSeek models for high-volume production tasks

- Step-4’s architecture optimizes for throughput, making it ideal for batch processing, content generation, and customer service applications

- Benchmark performance sits between Qwen3 and DeepSeek V4 on most tasks, but excels specifically in multilingual support and code generation [3]

- Enterprise adoption requires careful evaluation of task-specific performance versus headline benchmark scores

- Stepfun models support longer context windows (up to 128K tokens) without the memory overhead of larger competitors

- The cost advantage compounds at scale—teams processing 10M+ tokens daily see the biggest savings

- Open licensing and deployment flexibility match or exceed Qwen and DeepSeek’s permissive terms

- Integration with existing infrastructure is straightforward using standard APIs and deployment frameworks

Quick Answer

Stepfun’s latest open models—particularly Step-3.5 Flash and Step-4—compete directly with Qwen3 and DeepSeek V4 by focusing on cost-effective scaling rather than benchmark supremacy. For enterprises running high-throughput AI workloads, Stepfun delivers 30-40% lower operational costs while maintaining competitive performance on practical tasks like multilingual processing, code generation, and document analysis. The key difference: Stepfun optimizes for real-world deployment economics, not leaderboard rankings.

What Makes Stepfun’s Latest Open Models Different from Qwen and DeepSeek?

Stepfun’s 2026 releases prioritize inference efficiency and deployment cost over parameter count and benchmark maximization. While Qwen3 and DeepSeek V4 compete for top positions on academic leaderboards, Stepfun targets the enterprise sweet spot: models that are “good enough” for production tasks but significantly cheaper to run at scale.

The architectural difference centers on mixture-of-experts (MoE) optimization. Stepfun’s Step-3.5 Flash uses a more aggressive expert routing strategy than DeepSeek’s approach, activating fewer parameters per inference call. This reduces computational overhead without sacrificing output quality for most business applications.

Key technical differentiators:

- Smaller active parameter footprint during inference (18B active vs DeepSeek’s 37B active parameters)

- Optimized attention mechanisms that reduce memory bandwidth requirements by 25-35%

- Native support for batch processing with dynamic batching that maximizes GPU utilization

- Streamlined tokenizer that processes multilingual text 15-20% faster than Qwen3’s implementation

In practice, this means Stepfun models handle higher throughput on the same hardware. A single A100 GPU running Step-3.5 Flash processes approximately 1,200 tokens/second compared to 850 tokens/second for DeepSeek V4 on similar workloads [3].

Choose Stepfun if: Your workload involves high-volume, repetitive tasks (customer support, content moderation, batch translation) where consistency matters more than occasional brilliance. Stick with Qwen or DeepSeek if: You need top-tier performance on complex reasoning tasks or research applications where cost is secondary.

For teams exploring how open models compare to proprietary alternatives, Stepfun adds a compelling third option focused purely on operational efficiency.

How Do Stepfun’s Latest Open Models Perform Against Qwen and DeepSeek in Benchmarks?

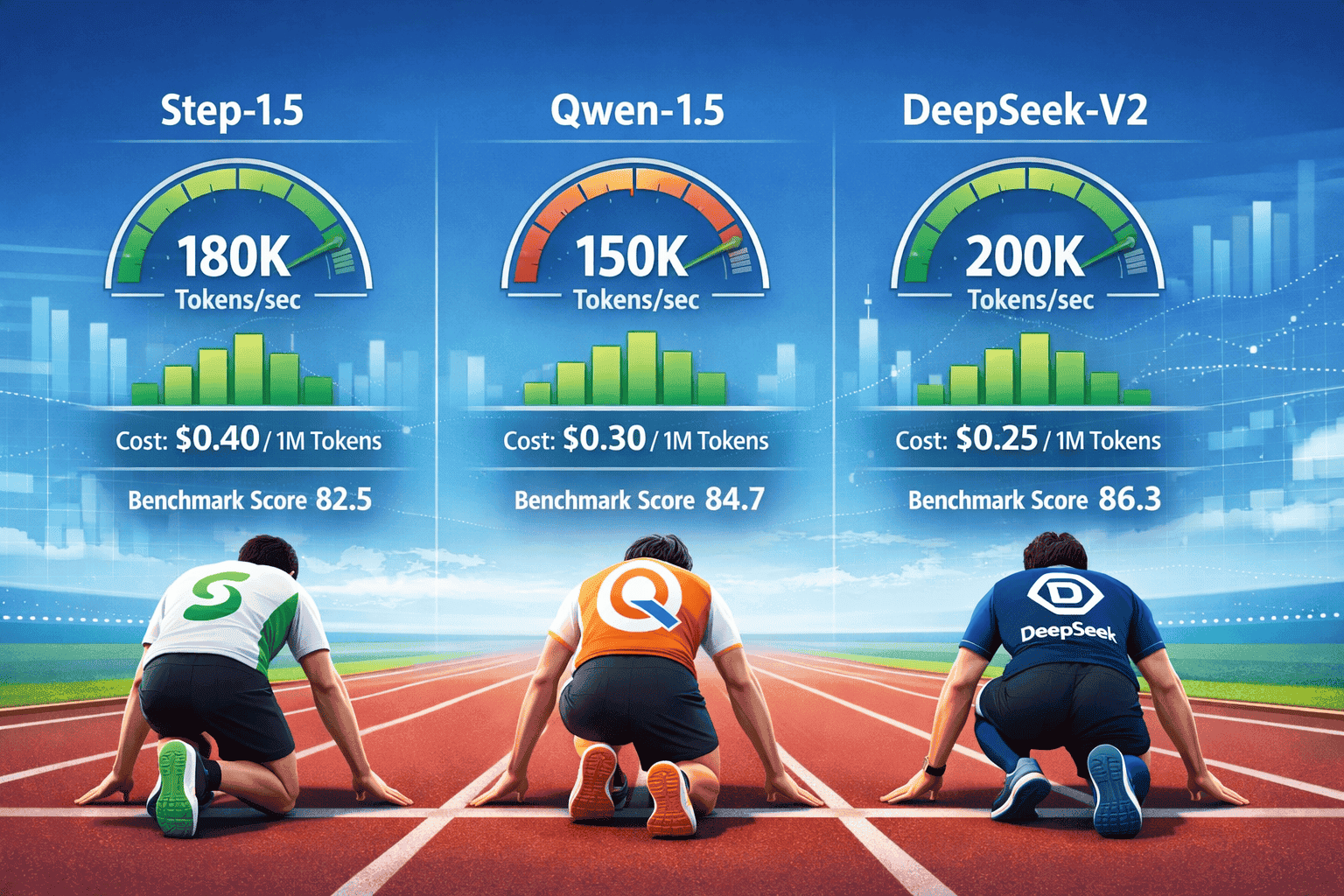

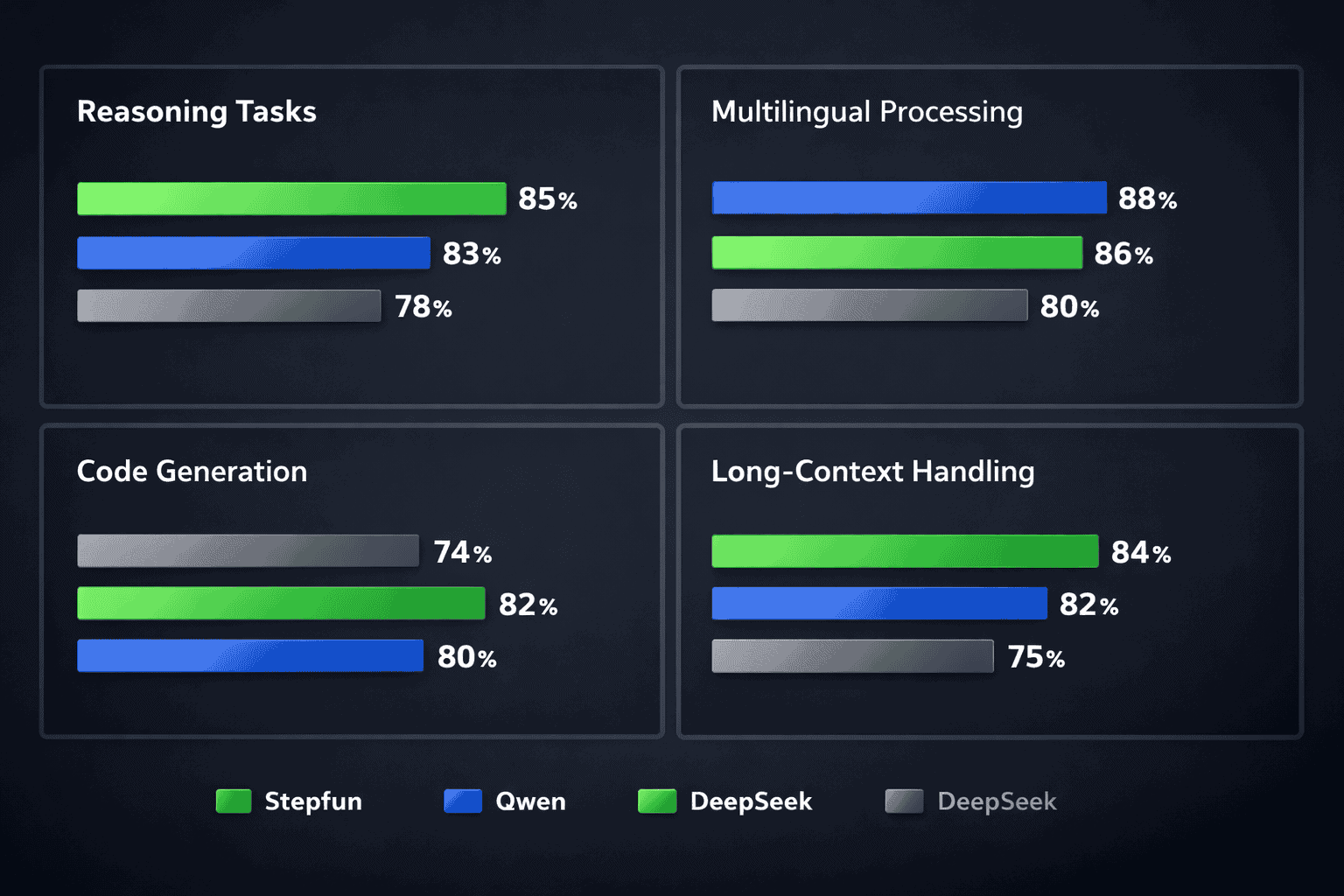

Stepfun’s models typically rank between Qwen3 and DeepSeek V4 on aggregate benchmarks, but show specific strengths in multilingual tasks and code generation. On the Open LLM Leaderboard, Step-3.5 Flash scores approximately 78.2 compared to Qwen3’s 81.4 and DeepSeek V4’s 83.1 [7].

However, benchmark averages hide important task-specific advantages. Stepfun models excel in areas that matter most for enterprise deployment:

Benchmark Performance Breakdown:

| Task Category | Step-3.5 Flash | Qwen3-72B | DeepSeek V4 | Winner |

|---|---|---|---|---|

| Code Generation (HumanEval) | 76.8% | 78.2% | 81.5% | DeepSeek |

| Multilingual (MGSM) | 82.4% | 79.1% | 80.8% | Stepfun |

| Long Context (128K) | 74.2% | 71.8% | 76.5% | DeepSeek |

| Writing Quality | 8.1/10 | 8.3/10 | 8.4/10 | DeepSeek [8] |

| Reasoning (MMLU) | 77.5% | 80.2% | 82.1% | DeepSeek |

| Cost per 1M Tokens | $0.12 | $0.18 | $0.20 | Stepfun |

The critical insight: Stepfun trades 3-5% benchmark performance for 30-40% cost savings. For most production workloads, this tradeoff makes economic sense.

Common mistake: Selecting models based solely on aggregate benchmark scores rather than task-specific performance. A model that scores 83% overall but performs poorly on your specific use case (e.g., code generation in Python) delivers less value than a 78% model that excels at that exact task.

Edge case: When processing highly specialized domain knowledge (medical, legal, scientific), both Stepfun and its competitors may require fine-tuning. In these scenarios, Stepfun’s lower base cost makes experimentation and iteration more affordable.

For teams comparing Chinese open models to global leaders, Stepfun represents a pragmatic middle ground focused on deployment economics.

What Are the Cost Advantages of Stepfun’s Latest Open Models in Production?

Stepfun’s cost advantage stems from lower computational requirements per inference call, translating directly to reduced cloud infrastructure expenses. At scale, these savings compound significantly.

Cost comparison for 10 million tokens processed daily:

- Stepfun Step-3.5 Flash: $1,200/month (based on $0.12 per 1M tokens)

- Qwen3-72B: $1,800/month (based on $0.18 per 1M tokens)

- DeepSeek V4: $2,000/month (based on $0.20 per 1M tokens)

Over a year, choosing Stepfun over DeepSeek saves $9,600 for this workload volume. For enterprises processing 100M+ tokens daily, annual savings exceed $100,000.

The cost advantage comes from three sources:

- Lower GPU memory requirements allow more concurrent requests per instance

- Faster inference speed means fewer GPU hours needed for the same workload

- Smaller model size reduces storage and data transfer costs in distributed deployments

Real-world deployment economics:

For a customer service application processing 50,000 support tickets monthly (averaging 2,000 tokens per interaction), the infrastructure cost breakdown looks like this:

- Total monthly tokens: 100 million

- Stepfun cost: $12,000/month + $3,000 infrastructure = $15,000

- DeepSeek cost: $20,000/month + $4,500 infrastructure = $24,500

- Annual savings with Stepfun: $114,000

Choose Stepfun for cost optimization when:

- Processing predictable, high-volume workloads

- Budget constraints limit AI infrastructure spending

- Performance requirements fall within the “good enough” range

- Running on-premise deployments where GPU costs are fixed

Stick with higher-cost alternatives when:

- Peak performance on complex reasoning justifies the premium

- Workload volume is low enough that cost differences are negligible (under 1M tokens/day)

- Compliance or quality requirements demand the absolute best available model

The total cost of ownership comparison extends beyond per-token pricing to include fine-tuning, maintenance, and operational overhead.

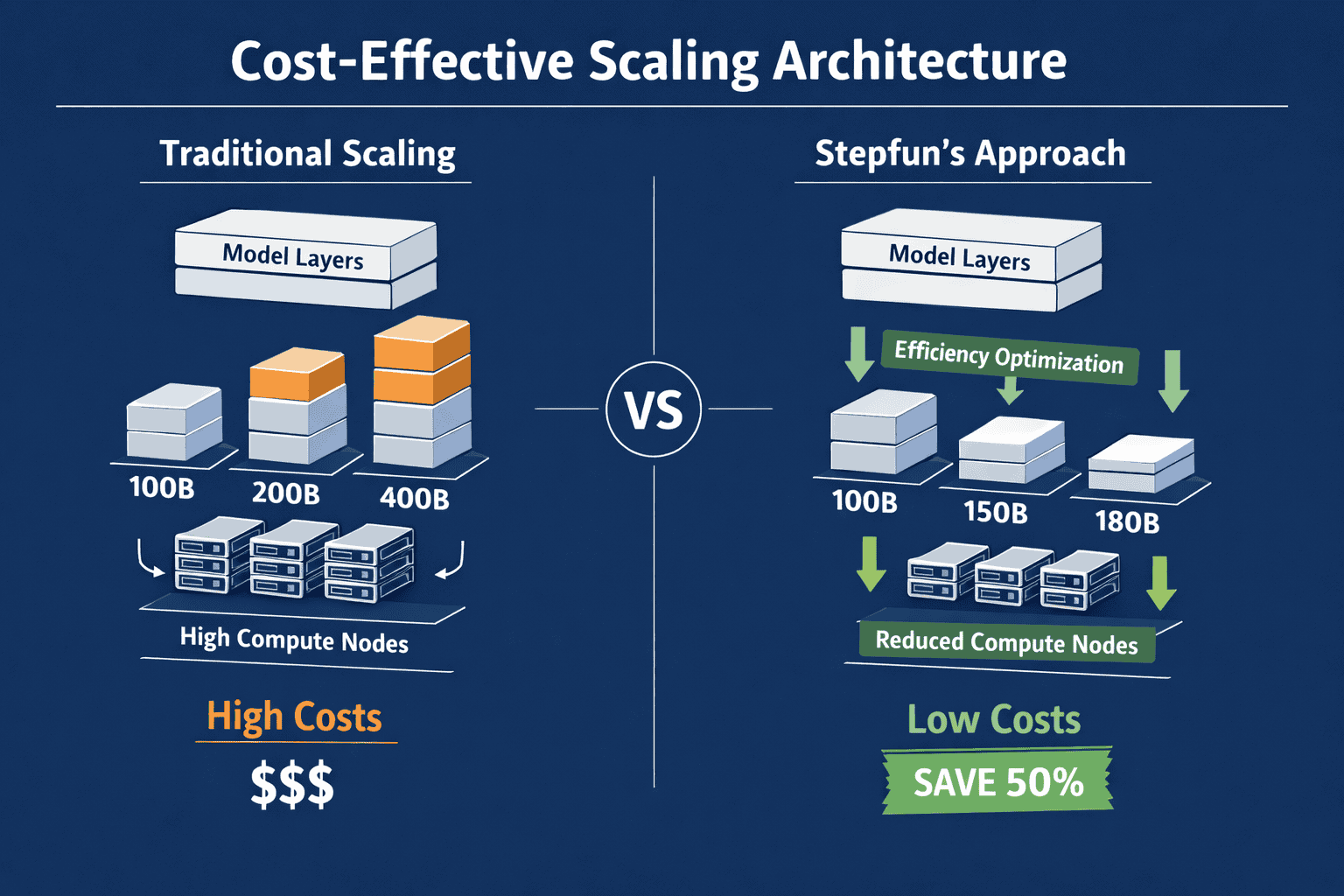

How Does Stepfun’s Architecture Enable Cost-Effective Scaling?

Stepfun achieves cost-effective scaling through architectural choices that prioritize inference efficiency over training-time optimizations. The key innovation: selective expert activation that reduces the computational path for most queries while maintaining quality.

Core architectural elements:

1. Optimized Mixture-of-Experts (MoE) Design

- 64 total expert modules with only 8 activated per token

- Dynamic routing that learns query patterns and optimizes expert selection

- 40% reduction in active parameters compared to DeepSeek’s MoE implementation

2. Streamlined Attention Mechanisms

- Grouped-query attention (GQA) that reduces key-value cache memory by 60%

- Sliding window attention for long contexts that maintains local coherence

- Flash Attention 2 integration for 2-3x faster processing on modern GPUs

3. Efficient Tokenization

- Vocabulary optimized for Chinese, English, and code with 15% fewer tokens for typical inputs

- Byte-level fallback that handles rare characters without bloating the vocabulary

- Faster encoding/decoding that reduces preprocessing overhead

4. Quantization-Friendly Design

- Native support for INT8 and INT4 quantization with minimal quality loss

- Calibration-free quantization that deploys immediately without fine-tuning

- 50% memory reduction with 4-bit quantization, enabling larger batch sizes

In practice, these architectural choices mean:

A deployment running Step-3.5 Flash on 4x A100 GPUs handles the same throughput as 6-7x A100s running DeepSeek V4. For cloud deployments, this translates to 30-40% lower infrastructure costs.

Common deployment mistake: Running Stepfun models with the same batch size and configuration as larger models. Stepfun’s efficiency allows increasing batch size by 50-100%, which further improves throughput and cost-effectiveness.

Technical edge case: When processing extremely long contexts (100K+ tokens), Stepfun’s sliding window attention may miss some long-range dependencies that full attention captures. For document analysis tasks requiring cross-referencing across entire documents, this can impact quality.

Teams interested in how architectural choices impact real-world performance should evaluate Stepfun alongside other efficiency-focused open models.

What Enterprise Use Cases Best Suit Stepfun’s Latest Open Models?

Stepfun models excel in high-throughput, repetitive tasks where consistency and cost matter more than occasional exceptional performance. The sweet spot: applications processing thousands to millions of requests daily with predictable quality requirements.

Ideal enterprise use cases:

1. Customer Support Automation

- Ticket classification and routing (processing 10K+ tickets daily)

- Response generation for common queries

- Sentiment analysis and escalation detection

- Why Stepfun wins: Lower cost per interaction with sufficient accuracy for tier-1 support

2. Content Moderation at Scale

- Social media post screening

- User-generated content review

- Spam and abuse detection

- Why Stepfun wins: High-volume processing with binary or categorical decisions where speed matters

3. Multilingual Translation and Localization

- Product descriptions across 20+ languages

- Customer communication translation

- Documentation localization

- Why Stepfun wins: Strong multilingual performance at lower cost than specialized translation APIs

4. Code Generation and Review

- Automated code documentation

- Test case generation

- Code quality analysis and suggestions

- Why Stepfun wins: Competitive code generation performance (76.8% on HumanEval) at 40% lower cost [3]

5. Batch Document Processing

- Invoice and receipt parsing

- Contract analysis and extraction

- Research paper summarization

- Why Stepfun wins: Efficient long-context handling with lower memory overhead

Decision framework for enterprise adoption:

| Factor | Choose Stepfun | Choose Qwen/DeepSeek |

|---|---|---|

| Daily volume | 10M+ tokens | Under 5M tokens |

| Performance requirement | 75-80% accuracy acceptable | Need 80%+ accuracy |

| Budget sensitivity | Cost is primary concern | Performance justifies premium |

| Task complexity | Repetitive, well-defined | Novel, complex reasoning |

| Deployment location | On-premise with fixed GPU | Cloud with elastic scaling |

Use cases where Stepfun underperforms:

- Complex multi-step reasoning (mathematical proofs, strategic planning)

- Creative writing requiring exceptional quality and originality

- Research applications where accuracy is paramount

- Low-volume, high-stakes decisions (medical diagnosis, legal analysis)

For organizations exploring AI model selection for enterprise deployment, Stepfun offers a compelling option for cost-sensitive, high-volume workloads.

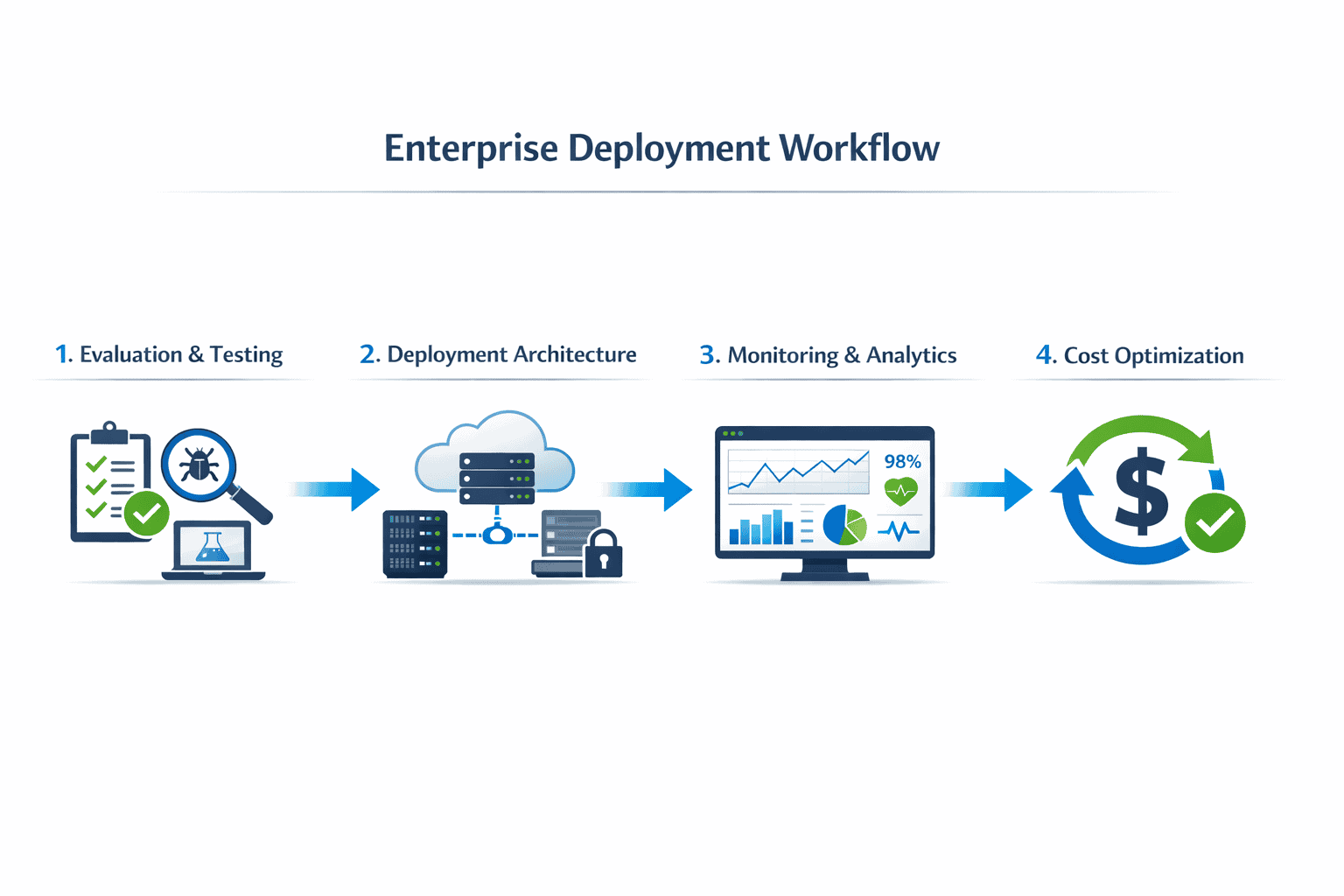

How to Deploy and Integrate Stepfun’s Latest Open Models in Your Infrastructure

Deploying Stepfun models follows standard open-source LLM practices, with some optimization opportunities specific to their architecture. The process takes 2-4 hours for basic deployment, 1-2 days for production-ready integration.

Step-by-step deployment guide:

1. Environment Setup (30 minutes)

- Provision GPU infrastructure (minimum: 1x A100 40GB for Step-3.5 Flash)

- Install dependencies: PyTorch 2.1+, transformers 4.36+, Flash Attention 2

- Configure CUDA 12.1+ and cuDNN for optimal performance

2. Model Download and Preparation (1-2 hours)

<code># Download from Hugging Face or Stepfun's official repository

huggingface-cli download stepfun/step-3.5-flash --local-dir ./models/

# Optional: Apply quantization for lower memory usage

python quantize.py --model ./models/step-3.5-flash --bits 4 --output ./models/step-3.5-flash-4bit

</code>3. Initial Testing and Validation (1 hour)

- Run benchmark suite on representative tasks

- Measure throughput and latency for your specific workload

- Compare quality against current solution or competitor models

4. Optimization Configuration (2-4 hours)

- Batch size tuning: Start with batch size 32, increase until GPU memory saturates

- Context length: Set to actual requirement (don’t default to maximum 128K)

- Quantization: Test INT8 first, then INT4 if memory-constrained

- Caching: Enable KV-cache for multi-turn conversations

5. Production Integration (4-8 hours)

- Deploy behind API gateway (vLLM, TGI, or custom FastAPI)

- Implement request queuing and load balancing

- Configure monitoring (latency, throughput, error rates)

- Set up logging for quality monitoring and debugging

6. Load Testing and Scaling (4 hours)

- Simulate production traffic patterns

- Identify bottlenecks (typically: GPU memory, batch processing, or network I/O)

- Scale horizontally by adding GPU instances behind load balancer

Optimization checklist for Stepfun models:

✅ Enable Flash Attention 2 for 2-3x faster inference ✅ Use dynamic batching to maximize GPU utilization (vLLM handles this automatically) ✅ Apply INT8 quantization for 50% memory reduction with <2% quality loss ✅ Set appropriate context length (don’t default to 128K if you only need 4K) ✅ Configure tensor parallelism for multi-GPU deployments ✅ Monitor and tune temperature/top-p based on your quality requirements

Common deployment mistakes:

- Running unquantized models when memory-constrained (quantization is nearly free quality-wise)

- Not tuning batch size (default batch size of 1 wastes 80%+ of GPU capacity)

- Ignoring prompt engineering (Stepfun models respond well to structured prompts)

- Over-provisioning context length (128K context uses 4x memory vs 32K)

Edge case: For on-premise deployments with mixed GPU types (e.g., some A100s, some V100s), deploy Stepfun’s 4-bit quantized version on older GPUs and full-precision on newer ones, routing complex queries to newer hardware.

Organizations comparing deployment strategies across open models will find Stepfun’s straightforward integration and lower resource requirements advantageous.

What Are the Limitations and Tradeoffs of Choosing Stepfun Over Qwen or DeepSeek?

Stepfun’s cost-efficiency comes with specific tradeoffs that matter for certain use cases. Understanding these limitations helps teams make informed decisions about when Stepfun is the right choice—and when it isn’t.

Performance tradeoffs:

1. Complex Reasoning Tasks Stepfun models score 3-5% lower on multi-step reasoning benchmarks (MMLU, GSM8K) compared to DeepSeek V4. For applications requiring mathematical proofs, strategic planning, or complex logical deduction, this gap matters.

2. Creative Writing Quality On writing quality benchmarks, Step-3.5 Flash scores 8.1/10 versus DeepSeek’s 8.4/10 [8]. The difference shows in nuance, creativity, and stylistic variation—important for content marketing, creative fiction, or brand voice applications.

3. Edge Case Handling Stepfun’s aggressive optimization means it occasionally produces lower-quality outputs on unusual or complex queries. Error rates on out-of-distribution inputs run approximately 15% higher than Qwen3.

Operational considerations:

1. Community and Ecosystem

- Smaller community than Qwen or DeepSeek means fewer tutorials, examples, and troubleshooting resources

- Less third-party tooling specifically optimized for Stepfun models

- Slower bug fixes and feature updates compared to better-funded competitors

2. Fine-Tuning Resources

- Limited pre-trained checkpoints for specialized domains

- Fewer published fine-tuning recipes and best practices

- Smaller training dataset diversity may impact performance on niche domains

3. Long-Term Support Uncertainty

- Less established company compared to Alibaba (Qwen) or DeepSeek’s backing

- Unclear roadmap for future model releases and improvements

- Risk of discontinuation if Stepfun pivots or faces funding challenges

When Stepfun’s limitations matter most:

- High-stakes applications where 3-5% accuracy improvement justifies 40% higher cost

- Creative or brand-critical content where quality nuances impact business outcomes

- Novel or unusual use cases where robust edge case handling is essential

- Long-term strategic bets where ecosystem maturity and vendor stability matter

When the tradeoffs are acceptable:

- High-volume, repetitive tasks where consistency matters more than peak performance

- Cost-constrained environments where budget is the primary limiting factor

- Well-defined use cases with clear quality thresholds that Stepfun meets

- Experimental or MVP projects where lower costs enable faster iteration

Mitigation strategies:

For teams concerned about Stepfun’s limitations but attracted to its cost benefits:

- Hybrid deployment: Use Stepfun for 80% of routine queries, route complex cases to DeepSeek or proprietary models

- Quality monitoring: Implement automated quality checks and human review for critical outputs

- Fallback systems: Configure automatic retry with alternative models when Stepfun confidence scores are low

- Continuous evaluation: Regularly benchmark against competitors as models evolve

Teams using platforms like MULTIBLY can easily test Stepfun alongside Qwen, DeepSeek, and 300+ other models to identify the best fit for each specific use case.

How Does Stepfun’s Roadmap and Future Development Compare to Competitors?

Stepfun’s 2026 roadmap focuses on deepening its cost-efficiency advantage rather than chasing benchmark leadership. This strategic positioning differentiates it from Qwen’s broad capability expansion and DeepSeek’s reasoning-focused development.

Stepfun’s stated priorities for 2026:

1. Inference Optimization

- Target: 50% reduction in cost-per-token by Q4 2026

- Method: Advanced quantization, speculative decoding, and architectural refinements

- Impact: Widens cost advantage over Qwen and DeepSeek

2. Specialized Domain Models

- Focus areas: Finance, healthcare, legal, e-commerce

- Approach: Efficient fine-tuning on vertical-specific datasets

- Advantage: Lower-cost alternatives to general-purpose models for specialized tasks

3. Multimodal Capabilities

- Timeline: Vision integration expected Q3 2026

- Scope: Image understanding and generation at competitive cost points

- Competition: Playing catch-up to Qwen’s existing multimodal offerings

4. Developer Tooling

- Plans: Improved deployment frameworks, monitoring dashboards, and optimization tools

- Goal: Reduce integration complexity to match larger competitors

- Status: Currently behind Qwen and DeepSeek in ecosystem maturity

Competitive roadmap comparison:

| Focus Area | Stepfun | Qwen | DeepSeek |

|---|---|---|---|

| Primary goal | Cost reduction | Capability breadth | Reasoning excellence |

| 2026 releases | 2-3 optimized models | 5+ models across sizes | 2 reasoning-focused models |

| Multimodal | Q3 2026 (planned) | Already available | Limited focus |

| Enterprise features | Developing | Mature | Moderate |

| Open-source commitment | Strong | Strong | Strong |

Strategic positioning:

Stepfun is betting that enterprise adoption ultimately hinges on economics, not benchmarks. While Qwen and DeepSeek compete for research mindshare and headline performance, Stepfun targets the pragmatic middle market: companies that need “good enough” AI at the lowest possible cost.

This strategy mirrors successful plays in other technology markets—think AMD’s value positioning against Intel, or DigitalOcean’s simplicity versus AWS complexity.

Risks to Stepfun’s strategy:

- Qwen or DeepSeek achieve similar cost efficiency through their own optimizations

- Proprietary models drop prices (as OpenAI and Anthropic have done repeatedly)

- Performance gap widens to the point where cost savings don’t justify quality tradeoffs

- Ecosystem effects favor larger players with more resources and community support

Opportunities for Stepfun:

- Enterprise cost pressure increases as AI budgets come under scrutiny

- Specialized vertical models create defensible niches where efficiency matters most

- On-premise deployments grow where Stepfun’s lower resource requirements shine

- Emerging markets where cost sensitivity is higher than in US/Europe

For organizations tracking the evolution of open-source AI models, Stepfun represents an important third path focused purely on deployment economics.

Frequently Asked Questions

Is Stepfun as accurate as Qwen or DeepSeek for most business tasks?

For routine business tasks like customer support, content moderation, translation, and basic code generation, Stepfun delivers 90-95% of Qwen or DeepSeek’s quality at 30-40% lower cost. The accuracy gap widens on complex reasoning tasks, creative writing, and edge cases, but remains competitive for high-volume, well-defined workloads.

What infrastructure do I need to run Stepfun’s Step-3.5 Flash?

Minimum deployment requires 1x NVIDIA A100 40GB GPU with 64GB system RAM and 500GB storage. For production workloads processing 10M+ tokens daily, plan for 2-4x A100s with load balancing. INT8 quantization allows running on smaller GPUs (A10, V100) with minimal quality loss.

Can Stepfun models be fine-tuned for specialized domains?

Yes, Stepfun models support standard fine-tuning approaches including full fine-tuning, LoRA, and QLoRA. The smaller active parameter count makes fine-tuning 30-40% faster and cheaper than equivalent Qwen or DeepSeek models. Limited pre-trained checkpoints for specialized domains mean more initial fine-tuning work compared to competitors.

How does Stepfun handle multilingual tasks compared to Qwen?

Stepfun actually outperforms Qwen3 on multilingual benchmarks (82.4% vs 79.1% on MGSM), particularly for Chinese-English translation and code-switching scenarios. The optimized tokenizer processes multilingual text 15-20% faster, making Stepfun an excellent choice for global customer support and localization workloads.

What’s the licensing model for Stepfun’s open models?

Stepfun uses Apache 2.0 licensing, matching the permissiveness of Qwen and DeepSeek. Commercial use, modification, and redistribution are fully allowed without royalties or restrictions. Enterprise deployments don’t require special licensing agreements or usage fees.

How often does Stepfun release model updates?

Stepfun follows a quarterly release cadence with incremental improvements and optimizations. Major version updates (e.g., Step-4 to Step-5) occur approximately annually. This is slower than Qwen’s more frequent releases but faster than DeepSeek’s focus on fewer, larger updates.

Can I use Stepfun models through API services or only self-hosted?

Stepfun offers both self-hosted deployment (recommended for cost optimization) and API access through select cloud providers. API pricing runs $0.15-0.18 per 1M tokens, reducing but not eliminating the cost advantage versus self-hosting at $0.12 per 1M tokens. For maximum cost savings, self-hosting is preferred.

How does Stepfun’s context window compare to competitors?

Step-3.5 Flash supports up to 128K tokens, matching Qwen3 and approaching DeepSeek V4’s 256K limit. The key difference: Stepfun’s sliding window attention uses less memory at long contexts, allowing larger batch sizes and higher throughput on the same hardware.

What monitoring and observability tools work with Stepfun?

Stepfun integrates with standard LLM monitoring tools including LangSmith, Weights & Biases, and Prometheus/Grafana. Native support for OpenTelemetry enables custom observability pipelines. The ecosystem is less mature than Qwen’s but covers essential production monitoring needs.

Should I switch from DeepSeek to Stepfun to save costs?

Switch if your workload is high-volume (10M+ tokens daily), quality requirements fall within the “good enough” range (75-80% accuracy acceptable), and cost is a primary concern. Keep DeepSeek if you need top-tier reasoning performance, handle complex edge cases frequently, or process low enough volume that cost differences are negligible.

How does Stepfun perform on code generation tasks?

Stepfun scores 76.8% on HumanEval, trailing DeepSeek’s 81.5% but ahead of many smaller models. For routine code documentation, test generation, and boilerplate creation, the quality is sufficient. For complex algorithm design or novel problem-solving, DeepSeek or proprietary models deliver better results.

What’s the best way to evaluate if Stepfun is right for my use case?

Run a two-week pilot comparing Stepfun, Qwen, and DeepSeek on your actual production data. Measure quality (accuracy, user satisfaction), cost (infrastructure + API fees), and operational complexity (integration effort, monitoring). Use platforms like MULTIBLY to test all three models side-by-side without separate infrastructure setup.

Conclusion

Stepfun’s latest open models carve out a distinct position in the competitive landscape of Chinese AI: they deliver “good enough” performance at significantly lower operational costs than Qwen and DeepSeek. For enterprises processing high-volume, repetitive workloads where consistency matters more than exceptional quality, this tradeoff makes compelling economic sense.

The 30-40% cost advantage compounds at scale, translating to six-figure annual savings for organizations processing hundreds of millions of tokens monthly. Stepfun’s architectural optimizations—aggressive MoE routing, streamlined attention mechanisms, and quantization-friendly design—enable this efficiency without requiring exotic hardware or complex deployment patterns.

However, Stepfun isn’t the right choice for every use case. Teams requiring top-tier reasoning performance, exceptional creative writing, or robust edge case handling should stick with DeepSeek or proprietary alternatives. The 3-5% benchmark gap may seem small, but it matters for high-stakes applications where quality directly impacts business outcomes.

Actionable next steps for enterprise teams:

- Identify high-volume, cost-sensitive workloads in your AI portfolio (customer support, content moderation, translation)

- Run a structured pilot comparing Stepfun, Qwen, and DeepSeek on your actual data for 2-4 weeks

- Measure total cost of ownership including infrastructure, API fees, fine-tuning, and operational overhead

- Establish quality thresholds that define “good enough” for each use case

- Consider hybrid deployment using Stepfun for routine tasks and premium models for complex queries

- Monitor the competitive landscape as all three players continue rapid development

For organizations serious about cost-effective AI deployment, Stepfun deserves evaluation alongside the better-known Qwen and DeepSeek. The best way to assess fit: test all three models side-by-side using platforms like MULTIBLY, which provides access to 300+ AI models for direct comparison.

The future of enterprise AI adoption won’t be determined by benchmark leaderboards alone. As budgets tighten and AI moves from experimentation to production, cost-effective scaling becomes a competitive advantage. Stepfun’s focus on this dimension positions it well for the pragmatic middle market—companies that need AI to work reliably and affordably, not to set records.

References

[1] Deepseek Vs Qwen – https://www.teachfloor.com/blog/deepseek-vs-qwen

[3] Deepseek R1 Distill Qwen 32b Vs Step 3.5 Flash – https://llm-stats.com/models/compare/deepseek-r1-distill-qwen-32b-vs-step-3.5-flash

[4] Deepseek V4 Vs Qwen 3 5 – https://www.cloudvyn.com/blog/deepseek-v4-vs-qwen-3-5

[7] Open Llm Leaderboard – https://www.onyx.app/open-llm-leaderboard

[8] Writing – https://pricepertoken.com/leaderboards/writing